How Not to Buy a Digital Camera

Early this year, a peculiar confluence of events induced me to replace my cameras and lenses. Like any intelligent consumer, I studied camera-testing sites on the Web. Alas, those sites did not help me decide what to buy. I found myself unable to extract significant information from the reviews. In this article I am going to explain why I felt obliged to discount them, and how I chose what to buy.

Be It Resolved — A digital camera is an image sensor built into a box with a lens and a computer. The sensor is the limiting factor, so camera reviewers concentrate heavily on sensors.

Most tests of image sensors look at resolution before anything else, yet for 50 years lens designers have been trying to convince photographers that to the human brain, minute details matter less than the clarity of those details that are easily seen. See, for example, this article (PDF) that Zeiss first published in 1964.

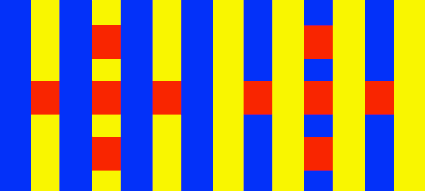

You can see this in the figure below. The picture on the left contains finer detail – it resolves lines about one-half as thick – but the picture on the right looks better, especially if you back away a bit from the screen. This truth holds even for enormous enlargements. Indeed, what you are looking at is the centre of a blow-up that would be 40 inches by 60 inches (1 meter by 1.5 meters) at the resolution of a 100-dpi display.

The picture on the left came from a conventional Bayer sensor. Bayer sensors require the image to be softened optically, to avoid coloured artifacts. Since a digital camera requires processing by a computer, it ought to be possible to sharpen the digital image to compensate for that blurring. I did this in the comparison below, as well as I could. The sensor on the right had no blurring filter, but any lens always blurs an image slightly, so I sharpened it a hair as well. As you can see, the picture on the left is greatly improved but if you back up to a normal viewing distance for a 40 inch by 60 inch picture hanging on the wall, the extra detail disappears and the picture on the right still looks a little crisper.

Which of these images is preferable will depend on your taste but frankly, I think the differences between them aren’t worth worrying about. One wins on the curves, the other wins on the straightaways. The picture on the left came from a full-frame professional DSLR with a professional lens. Its image sensor has 22 million cells. The picture on the right came from a DSLR with a smaller Foveon sensor with 4.7 million cells. These approximate the extremes of resolution available nowadays. A picture from any modern camera using a smaller Bayer sensor would probably show detail somewhere between the two and be softer than either.

(Note that to maintain the sharpness of these images I enlarged them not in Photoshop but with PhotoZoom Pro. For a discussion of PhotoZoom Pro, scroll toward the bottom of “Digital Ain’t Film: Modern Photo Editing,” 29 April 2010.)

The Olden Days — Before the days of digital image processing, the quality of a lens used to limit the quality of an image, so photographers worried a lot about optics. Usually reviewers test lenses by plotting a piece of mathematical esoterica called a “modulation transfer function” or MTF. The MTF charts in a review show how clearly a lens images details of various sizes, photographed from a flat test chart. These charts ignore depth. MTF charts are a fundamental tool of lens designers, but lens designers do not use simple two-dimensional MTF charts; they plot MTFs in three dimensions. Also, when lenses bend light they can modify its phase, so lens designers examine MTF tests in conjunction with a similar chart of a phase transfer function. If this sounds like gibberish, think of it this way. Using a two-dimensional MTF to compare lenses is like deciding on a path through mountains by distance alone, ignoring steepness and whether a route traverses peaks or valleys.

I recently saw how misleading a simple MTF test can be. I just replaced a wide-angle zoom lens with a newer and costlier model. After I bought the new lens, I happened upon a comparison of MTF tests showing it to be less sharp than the older one. The centre was comparable but the corners were worse. Much worse. Now, the corner of a lens can never be so sharp as the centre, even in a theoretically perfect lens, because light travels farther to the corners than to the centre, so that the blurry disc representing a point of light becomes larger and pear-shaped in the corners. With this new lens, however, the discrepancy seemed extreme, and I saw this myself when I photographed a flat wall. However, when I photographed the whole room, the corners were as sharp as I would expect for a lens of its angle of view. Apparently this lens does not project a flat field, it projects a curved field, so that across the image, objects at slightly different depths are in best focus. The lens is sharp enough, it just has a curved field of focus. I confirmed this by photographing the wall again, this time changing the focus slightly. This curvature of field stands out on a simple lens test but is not noticeable in normal use.

Moreover, digital images require processing by a computer, which permits the cleanup of optical aberrations. It is easy to remove most chromatic aberration, and Photoshop also allows a kind of optical sharpening with its Smart Sharpen command. After I correct the colour fringing and go to Smart Sharpen, I find that images from my new lens need 40 percent less sharpening than images from my old lens. Thus, my new lens looks worse in a simple MTF test but actually takes sharper pictures.

The design of a lens is an intricate set of compromises. With my new lens, the designer decided to compromise flatness of field and reduce more perceptible problems instead. Flatness of field is essential for lenses used to copy documents but it matters little otherwise, since few other photos are taken of entirely flat surfaces. Thus, the poor showing of this lens in a simple MTF test does not show that the lens is bad, it shows that the manufacturer decided to make improvements that hurt the product in simplistic reviews.

Living Colour — Colour tests are even more problematic than tests of lenses, because there is virtually nothing about colour that can be measured in the physical world. Colour is not a physical phenomenon, it is a perception formed by and within the brain. A colour is the response of the brain to various mixtures of wavelength at different intensities within a context, a context of other mixtures of wavelength at different intensities, and the further context of a history of what you have recently seen and what you have learned.

Look at the image below to see an example of this. The reds are identical physically but our perceptions of them are affected by the other colours nearby. This example is simplistic but it is not a trick. Effects of context on colour are ubiquitous. Every colour that we see is affected by its physical context.

A colour’s historical context is just as important – i.e., the context of what you have learned to expect. Thus, you see brown bark and green leaves on the tree in front of you largely because you have come to expect bark to be brown and leaves to be green, yet if you take some bark and a leaf into a lab, you are likely to find them matching paint chips labelled red and yellow.

The idea of comparing colours for accuracy is appealing but nonsensical, especially when it comes to subtle colours like skin tones. In any picture the “best” skin tone will depend upon the other colours in the picture, plus the lighting and surroundings of the room you are seeing the picture in, and the appearance of your family and friends.

No camera on the market is capable of capturing accurate colours, because the notion of accurate colours is a chimera. Engineers devised a set of definitions and tests to form a common standard for manufacturing products, but these are largely arbitrary. They are useful, but they bear little relationship to how the brain sees colours.

On the other hand, every camera on the market is able to capture the full range of visible wavelengths, so every camera on the market can capture the information needed to produce pleasing colours. Colours are controlled by digital processing, and with products like the Asiva plug-ins it is possible and practical to convert any colour to any other, within the physical limits of your computer’s display and printer’s ink. (Again, see “Digital Ain’t Film: Modern Photo Editing,” 29 April 2010.) If your camera produces JPEGs, a computer in the camera will take a first pass at this and you may not always like the results, but you cannot possibly expect the camera’s computer to balance colours blindly as well as you can balance them with a computer on your desk using your eyes. If you are fussy about colours, there is no point in worrying about the camera’s capability to record them, you must expect to balance them yourself.

Dynamic Personalities — As a practical matter, what limits photographic quality today is the dynamic range that an image sensor can record, the range of tones from light to dark. Nobody will notice picayune detail like the stitching of a hem, but people will be upset if a bride’s gown washes out to shapeless white in the sun, or if the groom’s suit disappears in a shadow.

To a first approximation, the dynamic range of sensors is proportional to the surface area of the light-sensitive cells. Among today’s sensors, this varies 40-fold. Point-and-shoots have tiny sensors and, in consequence, minimal dynamic range.

Dynamic range is difficult to measure because noise differs qualitatively from one device to another. The most common objective test is to photograph an even tone, which ought to generate an even image, then measure how much the pixels vary. That variation is the noise. A certain proportion of noise is deemed to represent the weakest background that can be detected, and this defines a sensor’s dynamic range. To see how problematic this can be, consider two car radios. One is staticky, the other has a clear signal but the bass booms badly, making announcers difficult to understand. If you measure the noise as deviations from a constant background, the staticky radio is noisier, yet the resonant boom of the second radio is as much noise as the static is, and unlike the static, the boom prevents you from hearing the news.
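To make the objection concrete, here is a minimal Python sketch of the even-tone measurement. The pixel values, the 12-bit scale, and the function names are illustrative assumptions, not any reviewer's actual procedure. The two hypothetical patches differ in character, one speckled and one with a step running across it, yet the measurement scores them identically.

```python
import math
import statistics

def patch_noise(pixels):
    """Spread of pixel values in a patch that should be one even tone."""
    return statistics.pstdev(pixels)

def dynamic_range_stops(full_scale, noise_floor):
    """Stops between the largest recordable value and the noise floor."""
    return math.log2(full_scale / noise_floor)

# Hypothetical readings from two sensors photographing the same grey card.
speckled = [102, 98, 102, 98, 98, 102, 98, 102]  # fine, scattered grain
banded = [98, 98, 98, 98, 102, 102, 102, 102]    # a visible step across the patch

for name, patch in (("speckled", speckled), ("banded", banded)):
    noise = patch_noise(patch)
    stops = dynamic_range_stops(4096, noise)  # 4096 assumes a 12-bit scale
    print(f"{name}: noise {noise:.1f}, range {stops:.1f} stops")
```

Both patches print the same noise figure and the same range, because a standard deviation knows nothing about where in the patch the deviations fall.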

The only sensible way I know to compare the dynamic range of image sensors is to compare their images. Photograph a subject that runs from too bright to show detail to too dark to capture, then pull apart the detail in the highlights and shadows, to see what the sensor has recorded. I like to do this in my living room. I photograph a wall with a studio flash aimed in such a way that the exposure at the sensor ranges from too much on a light oil painting at one side to too little on a dark oil painting on the other. Next I convert the raw images to 16-bit TIFFs in Adobe Camera Raw, with all adjustments at zero save two: I set both Recovery and Fill Light to 100. These expand the brightest highlights and darkest shadows about as much as they can be expanded. Finally, if the sensors being compared are different sizes, I resample the smaller image to the size of the larger. In addition, for the example I am going to show later in this article, I also lightened the dark pictures overall by boosting Photoshop’s Exposure setting. I did this because the shadow detail in the upper image does not show up on the 6-bit LCD displays that many people use.

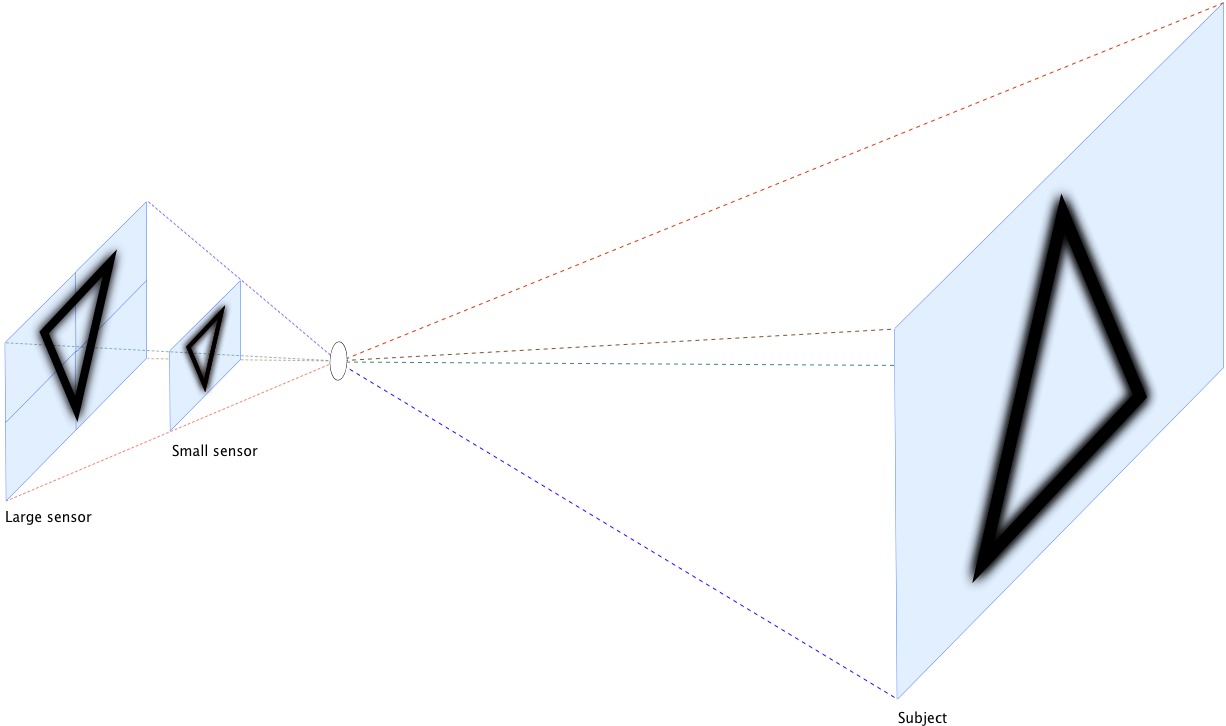

If the sensors being compared are different sizes… well, that brings up an interesting problem. It seems natural to compare ISO 100 of one sensor to ISO 100 of the other but for most photography, this is not appropriate. To see why, consider the diagram below. It shows an imaginary camera that can use either of two sensors, with a lens (the circle in the middle) that can focus the image on either. It is obvious that the images on both sensors will be scaled perfectly. The larger sensor is twice the size of the smaller one in each dimension, so every line will be twice as broad. Where the lens blurs lines, the blur will also be twice as broad, and if the shutter speeds are the same, any blur from a moving subject or camera will be twice as broad as well. However, one factor will differ: the amount of light reaching each spot on the sensor. The larger sensor has four times the area, so the light hitting any one spot will have only one-fourth the intensity.

To compare these sensors we now have a choice. For the larger sensor we can enlarge the aperture of the lens by two stops to admit four times the light, or we can keep the shutter open four times as long to admit four times the light, or we can quadruple the sensor’s sensitivity (i.e., increase its ISO speed by two stops). Enlarging the aperture is fine for taking pictures of a test chart but it changes the image optically so that less of a three-dimensional subject is in focus from front to back (i.e., it reduces depth of field). If we slow the shutter speed, the subject is more likely to move while the shutter is open and we are more likely to move the camera. Thus, to create an image of the world that is comparable optically, we need to increase the sensor’s ISO speed.

In short, to compare the dynamic range of sensors for ordinary picture-taking, if the sensors are of different sizes, then it is appropriate to compare them at the ISO speeds that give comparable depth of field at similar shutter speeds. Of course, for pictures taken when conditions are optimal – when the camera is on a tripod and the subject is stationary – it is also appropriate to compare the best ISO speeds of each.
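The arithmetic behind this comparison can be sketched in a few lines of Python. The function name and the f/4 starting point are hypothetical. The sketch simply follows the reasoning above: a sensor that is larger by some linear factor has that factor squared in area, and holding the physical aperture and shutter speed fixed means making up the lost intensity with ISO.

```python
def equivalent_settings(iso, f_number, linear_scale):
    """Settings for a sensor `linear_scale` times larger in each dimension,
    keeping the physical aperture diameter and the shutter speed unchanged.
    """
    return {
        # Light per spot falls with the area, so sensitivity must rise with it.
        "iso": iso * linear_scale ** 2,
        # Same aperture diameter on a proportionally longer lens
        # means a proportionally larger f-number.
        "f_number": f_number * linear_scale,
    }

# The imaginary camera from the diagram: the larger sensor is twice the size.
small = {"iso": 100, "f_number": 4.0}  # f/4 is an assumed starting point
large = equivalent_settings(small["iso"], small["f_number"], linear_scale=2)
print(small, "is comparable to", large)
```

With a factor of two, ISO 100 on the smaller sensor pairs with ISO 400 on the larger, and ISO 200 pairs with ISO 800.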

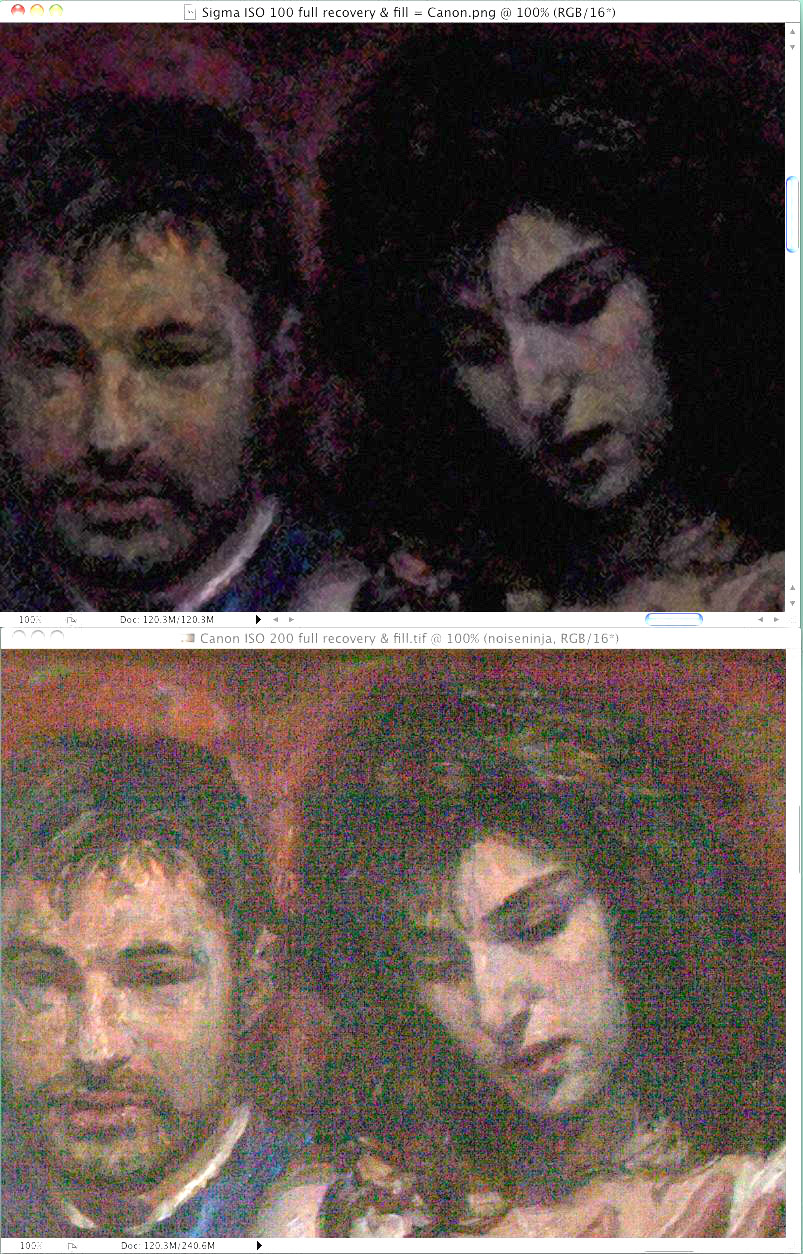

Comparing dynamic range as I do does not yield simple numbers, but unlike tests that do yield numbers, it is meaningful. For example, consider the two sensors I compared at the beginning of this article. They have just about the same difference in size as the sensors in my diagram. It happens that the best ISO speed on the smaller one is 100 and the best on the larger is 200, so let’s compare these. The image from the smaller sensor is on top, the image from the larger one is on the bottom. The lower image is brighter but it is also noisier. If you look at the dark details that you can just distinguish from black in the upper picture, or from the noise of the lower picture – look at the splotches of grey in the black hair – they are just about the same, although the lower photo may show a hint more detail.

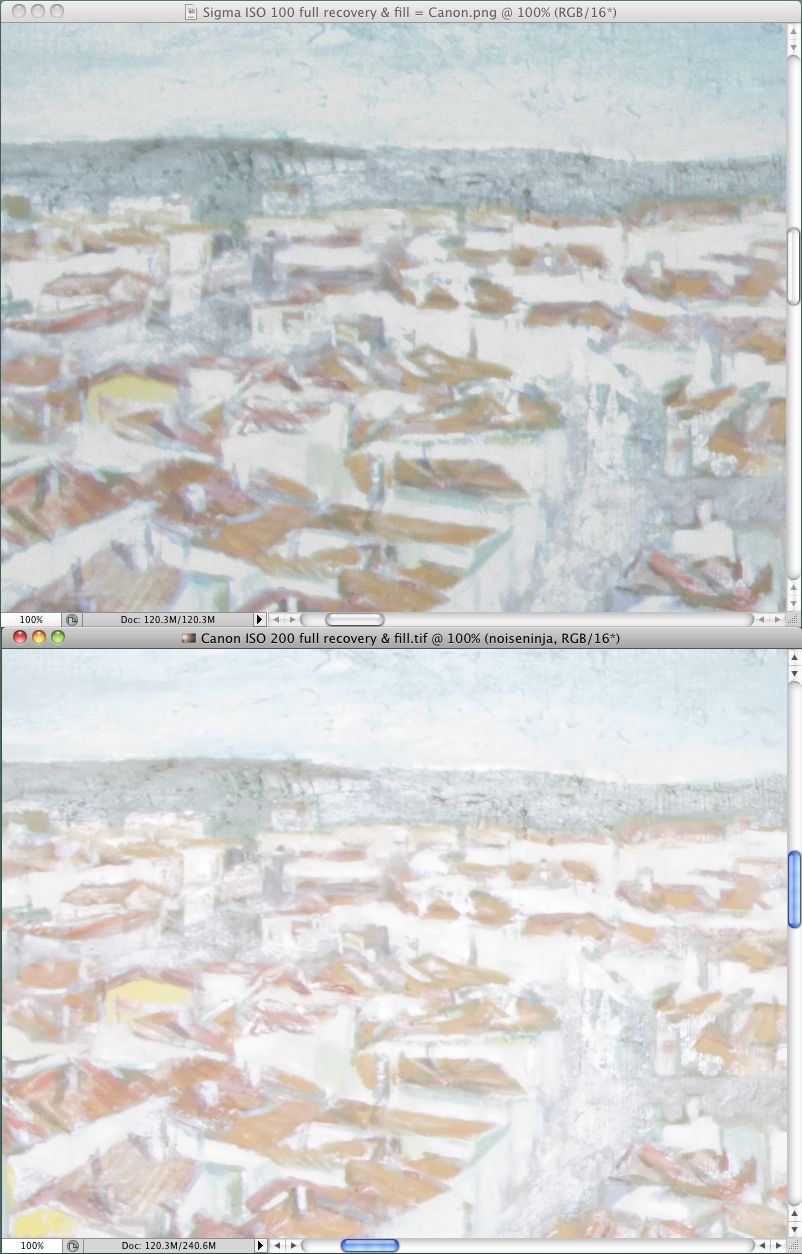

The light details in this next image are also almost the same, but in the light areas, the upper photo may show a hint more detail. In short, overall it’s a wash. At their best ISO speeds, the dynamic range of these sensors is the same.

(Incidentally, the larger of these sensors produces a 14-bit image, which is 2 bits more than the sensors in most DSLRs, including the smaller one here. This means that the voltages produced by the sensor are converted into digital numbers with greater precision. However the noise of the sensor is in its voltages, so the only effect those extra bits have on the noise is to digitize it more precisely.)
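A small Python sketch may help show why the extra bits do not help with noise. Everything in it is an assumption made for illustration: the 1-volt full scale, the handful of noisy readings, and the `digitize` helper. It converts the same noisy voltages at 12 and 14 bits and compares the spread that survives.

```python
import statistics

def digitize(voltage, bits, full_scale=1.0):
    """Convert a voltage to the nearest digital code, then back to volts."""
    top_code = 2 ** bits - 1
    code = round(voltage / full_scale * top_code)
    return code / top_code * full_scale

# Hypothetical noisy readings of what should be a steady 0.5 V signal.
readings = [0.4990, 0.5010, 0.5004, 0.4996, 0.5012, 0.4988]

spread_12 = statistics.pstdev([digitize(v, 12) for v in readings])
spread_14 = statistics.pstdev([digitize(v, 14) for v in readings])

print(f"12-bit spread: {spread_12:.6f} V")
print(f"14-bit spread: {spread_14:.6f} V")
```

The two spreads come out nearly equal, since the noise is already present in the voltages before either converter sees them.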

It turns out that the larger sensor’s ISO 200 and ISO 400 are indistinguishable in dynamic range, so the smaller sensor’s ISO 100 is also comparable to the larger’s ISO 400. Neither sensor is quite so good when raised to ISO 200 or 800, respectively, but the difference is slight and once again they are roughly comparable. At ISO 400/1600 the larger one is better, and above that point the difference is so great that the sensors cease to play in the same ballpark. The larger one seems almost able to take usable pictures in the dark.

Ignoring the Obvious — Although no conventional tests of image quality are meaningful, they still provide some information, and you might think that some information ought to be better than no information. Unfortunately, some information is not always better than no information. These conventional tests strike me as equivalent to blind men feeling parts of an elephant and describing it as like a wall, a tree, a snake, and a rope. If a reviewer measures something simple like the delay of a shutter, I am willing to believe it, but I see nothing credible about simple assessments of a phenomenon so complex as image quality. I am willing to delude myself about many things – my favourite delusion comes in the shower, when I am convinced that I can sing – but I see no reason to delude myself that my Blink-o-Flex camera is better than my friend’s Wink-o-Flex because it gets better reviews.

Neither do I believe assessments of cameras’ mechanical quality. I do not know any way to examine a camera and tell how well built it is or how long it will last. It used to be that to use a camera you wound a spring to cock the shutter, twisted a ring to focus, pressed a button that released the shutter, heard various parts whirr and click and bang, and then twisted a knob or cranked a lever to advance the film. You could feel and hear that some cameras were better than others. Also, to withstand the pressures of your paws, parts were machined from brass and aluminum, so weight was also a clue. However, nowadays miniature motors replace fingers, so the parts need little strength and must have minimal mass. This means that quality no longer comes from brass, it comes from being the right sort of plastic. A digital camera may need a metal case to dissipate heat from the electronics but any solid mass you feel is likely to be just a heat sink; it will not indicate mechanical quality.

Even play in lenses may not matter. It used to be that lenses were designed with the assumption that they would be manufactured perfectly, but today many engineers figure that it is more efficient to assume that there will inevitably be slop in manufacturing, especially with complex lenses, so they often design optics that will tolerate some misalignment. (Most people don’t appreciate how complex lenses have become. Professional lenses used to have 4 to 8 spherical elements that were ground from ordinary optical glasses, then cemented into 3 or 4 groups, which is what were mounted in the lens. Today, a lens sold in a modestly priced DSLR kit may contain 17 elements cemented into 13 groups, and 3 of the elements may be formed aspherically from an exotic material.)

I don’t have any insider or specialist knowledge about makes and models of camera, so I scratched my head a lot last winter, deciding what to buy. I make big enlargements – my snapshot size is 11 inches by 17 inches (A3) – so I am after the best image quality I can get, yet I did not want a full-frame sensor, no matter how good it might be. That is because the ultimate limitation to image quality is failing to obtain an image in the first place because the camera is too heavy to bring along. Deciding not to lug the lens you need comes next. With my smaller Foveon-based cameras I am already beyond the limit of what I am able to haul on my back. I already need to leave at least one lens at home.

The next size down from full-frame is not standardized, but a number of sensors are similar enough to use the same lenses. I wanted one of those. Some of them do not have optical viewfinders and are temptingly light, but all of the lightweight models that have interchangeable lenses are based on Bayer sensors, and among sensors of this size, I prefer the devil I know to the devil I don’t. I know from experience that the Foveon has an excellent dynamic range, and I prefer the nature of the Foveon’s noises and artifacts to those of a Bayer sensor. The darkest Bayer tones are covered by coloured specks while the darkest Foveon tones lose their saturation. When we look at a natural scene with great dynamic range, usually we see little saturation in the deep shadows, so the Foveon’s noise is more naturalistic.

Also, when the detail of a scene exceeds the sensor’s resolution, the Foveon records hints and suggestions of detail that are slightly larger, while the Bayer records moiré patterns. Since the finest detail the eye can make out usually adds nothing to a scene but hints and suggestions, the Foveon’s method of breaking up is more verisimilar. Finally, I dislike the blur that is intrinsic to a Bayer image. Although a Bayer image can be sharpened, sharpening tends to bring out artifacts, so I prefer to sharpen an image as little as possible.

I decided to buy Foveon-based cameras again but the architecture of the Bayer sensor offers one considerable advantage: it can be more sensitive to light. A Bayer sensor as sensitive as the one I tested above would be wonderful for taking candid pictures indoors, or to allow fast shutter speeds for sports and wildlife. If I photographed news or sports or a lot of wildlife, I would have been prepared to trade some image quality at low ISO speeds for better image quality at high ISO speeds. In this case I would have compared some cameras’ dynamic range inside a camera shop. At each ISO speed I would have taken a dozen pictures of the same scene one f-stop apart, then I would have compared the underexposed and overexposed pictures on a computer.
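For anyone who wants to try such a shop test, the dozen exposures can be worked out ahead of time. The Python sketch below is only one way to lay them out: it assumes the bracketing is done with shutter speed at a fixed aperture, and the `bracket` helper and the metered value of 1/60 second are hypothetical.

```python
def bracket(metered_seconds, count=12):
    """Shutter times one stop apart, spread around the metered exposure."""
    first_step = -(count // 2)  # begin this many stops under the metered value
    return [
        metered_seconds * 2.0 ** step
        for step in range(first_step, first_step + count)
    ]

# Assumed meter reading of 1/60 s at whichever ISO speed is being tested.
for seconds in bracket(1 / 60):
    if seconds < 1:
        print(f"1/{round(1 / seconds)} s")
    else:
        print(f"{seconds:.1f} s")
```

Each time is double the one before it, so the series runs from six stops under the metered exposure to five stops over.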

Assessing Value — Among today’s cameras, dynamic range is far and away the most important factor limiting image quality. It is also the only optical factor that is practical to test yourself. However, a few features of a camera are also important. If you cannot see the image clearly in the viewfinder or LCD display, your pictures will be poorer. If your camera or lens does not stabilize the image optically, your pictures will be poorer. If the camera operates too slowly, you will miss pictures altogether.

You will also lose pictures if you do not have a lens with the appropriate focal length. In the days of film, sensible advice was to buy lenses with a fixed focal length rather than zoom lenses, and to buy fewer lenses rather than cheaper lenses, because you were stuck with optical imperfections. Nowadays I think the opposite is sensible. With a little time, most optical imperfections can be cleaned up. This makes cheap zoom lenses practical even for high quality work.

Of course, better lenses still make better images, which require less time to clean up, so it is still nice to have better lenses. Unfortunately, how to tell which lenses are better is a problem. The best you can “learn” from a manufacturer’s propaganda is that their Super line is perfect for everybody, their Duper line is doubly good, and their Extreme line is ideal. Price lists are usually the only intelligible guide. However, I do not know any sensible way to compare lenses from different manufacturers, and the correlation of price to quality is anything but perfect. Costs of production decrease exponentially with the quantity produced, so lower-priced lenses may be dramatically cheaper for little difference in quality, and the highest-priced merchandise often sells not because it is better but because it is more expensive. For example, as I write this you can buy a point-and-shoot made by and labelled as a Panasonic for $320, or you can buy the same Panasonic point-and-shoot labelled as a Leica for $700. Economists call such products Veblen goods, after the fellow who wrote a classic book on conspicuous consumption.

The only apparent indicator of value is the number of features a camera offers, but I don’t think this is a sensible indicator either. To my mind, every camera on the market is embellished with useless features that get in the way. I have missed any number of pictures by getting lost in a maze of menus or misinterpreting some hieroglyph and pushing the wrong button. On my cameras I would like to eliminate every menu option dealing with image size, image quality, exposure mode, aspect ratio, rotation, sharpening, colour balance, white balance, slide-show presentations, sound, and video. I would especially like to be rid of a button on one camera that I often push accidentally, the button that moves the location of the auto-focus sensor away from the centre to some other part of the field, where I can figure out no reason for it to be. I never found any manual camera I ever owned to be so complicated to use and awkward to control as a digital camera, even my wife’s point-and-shoot, because digital cameras all try to do work more sensibly done by a desktop computer. This is daft. I want a simple camera that will save images in a raw format without any processing, then let me process the pictures in a desktop computer that is easier to control.

If my view of the photographic market seems jaundiced, well, it is. However, I really cannot be jaundiced about the cameras that are available today, once you get beyond the gadgetry. I get better enlargements from my DSLRs than I used to get from 2.25″ x 3.25″ film.

Among snapshot cameras, one model will have a larger LCD display than another, or a longer zoom, or a smaller size, but to me all of them look similar under the hood. All of them have tiny sensors that trade off dynamic range for superfluous megapixels, and all have a long list of useless features printed on the box. From what I can see, their prices are determined not by quality but by the stage in the product’s life cycle. To buy my wife’s last point-and-shoot I just visited a couple of local shops and bought the model with the fewest megapixels that had image stabilization, an LCD display that was easy to see, and a zoom lens.

If you want to buy a camera that is better than a point-and-shoot but smaller than a DSLR, then you need a sensor with a greater dynamic range. You will not get this with the “prosumer” models that look like small DSLRs but do not provide interchangeable lenses. These have the same tiny sensors as snapshot cameras, so they take no better pictures. You need a model with a four-thirds or an APS-C-sized sensor. Nowadays sensors of this size can be had in bodies that are hardly larger than a point-and-shoot. (I myself have one by Sigma that uses a Foveon sensor, the DP2s. It can take pictures every bit as good as my Foveon-based SLRs but I can recommend it only for skilled photographers because it lacks a zoom lens, it lacks image stabilization, its LCD display is dim, and it has a remarkably awkward user interface.)

If you want to buy a DSLR, I think it’s more sensible to look for cheaper models than costlier ones. With a DSLR the sensor and viewfinder matter, as does image stabilization, but not much else. Like other computerized gadgets, digital cameras are constantly improving in quality and coming down in price. If you find yourself bumping into a modest camera’s limits, you will probably not be worse off selling it and buying something fancier tomorrow than you would have been buying something fancier today – and bumping into its limits is unlikely anyway.

And finally, do keep in mind that an inextricable part of any digital camera is a computer. Cameras come with built-in computers that work surprisingly well, but for top-notch pictures, no built-in computer can do enough. To get full value out of any digital camera, you need software that can optimize the digital image. Once you get beyond point-and-shoot cameras, better images do not come from better cameras, they come from better software and knowing how to use it, as I explained in “Digital Ain’t Film: Modern Photo Editing” (29 April 2010).

[If you found the information in this article valuable, Charles asks that you pay a little for it by making a donation to the aid organization Doctors Without Borders.]

“ To compare these sensors… we can enlarge the aperture of the lens by two stops to admit four times the light, or we can keep the shutter open four times as long…, or we can quadruple the sensor's sensitivity… .”

I'm no expert on optics, but this sounds wrong. The F-number is the *ratio* of the aperture to the focal length, so to get the same field of view, as your diagram shows, the larger sensor has a longer focal length; a constant "F8" exposure for the bigger sensor means a bigger aperture—in fact, its area grows exactly proportionately to the sensor's size. The result will have the same depth of field characteristics, etc. There's no need for fancy dancin' with ISO or time.

In exchange for more size and weight, the larger lens/sensor combo admits more photons, so less quantization noise, especially valuable for low-light areas, but useful for high quality in moderate-light areas.

No?

I'm afraid not. Depth of field is determined by the actual aperture, not the relative aperture, so the larger f/8 of a longer lens creates less depth of field than the smaller f/8 of a shorter lens.

Question on snapshot cameras: Given that the sensor isn't that light sensitive, if I drop the image resolution by a factor of 4, will it combine the light from adjacent sensor pixels to increase light sensitivity? Is there some way you can think of that I could test this?

(I use a Canon PowerShot 870-IS, which takes 8 megapixel images. I bought it because it fits in my pocket, which means I'll use it. I never enlarge my photos - they usually go to email or 4x6 printouts. Right now I take 5 megapixel images, just to keep the file sizes smaller.)

If a sensor combines the output of four adjacent cells into one at a sufficiently low level that the four cells act as a single, larger cell, then one kind of noise will be reduced: random fluctuations in the density of photons across space. If this is the primary source of noise, then the sensor will be a little more sensitive to dim signals, which means the dynamic range will be a little greater. Foveon sensors can combine cells' output like this, but every cell of a Foveon sensor is identical to every other. In contrast, Bayer sensors involve a checkerboard of coloured filters, so adjacent cells always differ. It might still be possible to combine nearby Bayer cells of the same colour but I don't know if any manufacturer does this.
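As a rough illustration of the size of that effect, here is a small Python sketch. It assumes the only noise is the random arrival of photons, for which the signal-to-noise ratio is the square root of the photon count. The figure of 100 photons per cell and the function name are made up for the example.

```python
import math

def shot_noise_snr(photons):
    """Signal-to-noise ratio when random photon arrival is the only noise."""
    return math.sqrt(photons)

photons_per_cell = 100  # hypothetical count for a dim part of the scene

single = shot_noise_snr(photons_per_cell)
combined = shot_noise_snr(4 * photons_per_cell)  # four cells acting as one

print(f"one cell: {single:.0f}, four combined: {combined:.0f}")
```

Under that assumption, combining four cells doubles the ratio, which is a gain of one stop at the dim end. Other sources of noise would shrink the benefit.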

Thank you, Charles, for another remarkable dissertation.

Most camera purchasers decide on a camera to buy for reasons unrelated to your wise criteria. And most photographers make pictures that won't benefit from superior technologies.

In the real world of non-professional photography, people are well advised to improve the design and composition of their photos (serious or casual), and then determine if digital editing is worth the effort. There is no correlation between quality of equipment and quality of images.

Perhaps in a follow-up essay you can explore (or refer to prior articles on) ways of seeing, and selective shooting, to bring photographers' percentages up and stress levels down. Good picture making can be learned, independently of the equipment being used.

Photographers: try for one month taking one good photo each day. Only one! Use whatever camera is handy, and let your subject and lighting motivate you, not the feature set of your gear.

Nemo--Photo Instructor

This is a good read. I've been reading and following various reviews and technology but it seems to me that I'm just searching for truth.

One thing I want to see in the future, are cameras with lower resolution but higher quality pics such as from the Foveon sensors or Canon 5d.

Nice article. When I am asked for camera advice, my advice boils down to this: "Go to a good camera shop and try out a few cameras in your price range. Choose the one that feels good and is easy to use. If you are looking for a brand recommendation, stick to C and N for dSLR and add a few other names for P&S, you're not going to make a bad decision--unless you don't like using it and it stays home." I relate the story of how when I first started out in dSLR, I went into the shop intending to buy one brand based on specs and walked out with another because I liked how it felt.

The quality of the image has so much more to do with what goes on between the ears of the photographer than it does the specs of the instrument that I personally don't find specs and camera reviews very helpful. But then I'm also invested in an SLR/dSLR path such that the switching cost is nontrivial, and I'm hoping there won't be an occasion to have to evaluate my gear choices, so I'll admit my bias.

Rather than commenting on anything substantive, I just want to note that the articles from this author in TidBITS over the years have been among the most useful and well-written articles I've ever read, on any topic.

Thanks so much for the kind words, Brian! Charles's articles are unusual, to say the least, which is why I like publishing them so much. He thinks about the topic differently than most people, and his examples do a good job of supporting his arguments.

All the points in this article seem right, but at the end I'm no closer to picking a camera than when I started. Ken Rockwell (http://www.kenrockwell.com/tech/recommended-cameras.htm) seems to follow a similar approach, but he actually points you to good choices.

Ken's page is indeed quite nice. Charles explicitly didn't want to get into recommending specific models, in part because they change so frequently that the article would have a very short lifespan. (I also think he didn't necessarily have a lot that was nice to say about many models. :-))

Personally, I've been very fond of my Canon point-and-shoots, so I'm happy to see that Ken Rockwell likes them too. For me, it's about what fits in my pocket.

One other point buyers of point-and-shoot cameras should bear in mind: they'll be buying another one in two or three years anyway because the motor that opens out the lens will have jammed on a grain of sand, or the internal gears will have become misaligned, or the spring-loaded shutter will have unsprung.

... which raises an important point that buyers might like to consider beyond the finer points of image quality - survivability. My daughter took a nice little point-&-shoot on a wilderness excursion, and it _just_ survived, dying a week after she returned home (dust, probably). My brother wasn't so lucky; the extreme humidity and mist on a once-in-a-lifetime trip killed his mid-level DSLR midway through. Knowing this, I'd now equip her with a waterproof p-&-s, him with a DSLR a notch or two higher in the same range, with more effective weatherproofing.

Smart image-processing can deal with a lot of issues, as Charles points out, but there's not much you can do with the photo you didn't take because your camera had died.

That's an excellent point - you absolutely have to take the conditions in which you shoot into account when buying a camera, or at least protective accessories.

Great article. But I'm very curious as to which camera you finally decided on.

I recently got the Olympus E-PL1 micro four thirds camera with the VF-2 viewfinder (would not have gotten the camera if the viewfinder was not available) and the Panasonic 20mm f/1.7 and Olympus 14-150 lenses, and am quite satisfied. The size and capabilities (good ISO 1600 pictures) make this a great travel camera. A number of people who have seen me use it, especially with the f/1.7 lens, are going to buy it themselves.

I really enjoy using the viewfinder and f/1.7 lens for available light pictures - almost never need the flash.

For my use, I usually take 5mp pictures - don't need 12mp.

The main problems I have are precise focus in dim light, accidentally pushing an unintended button (pushing the video button can be really annoying), and having to adjust white balance.

Overall, I am quite satisfied.

Been professionally shooting with Foveon-based DSLRs since they were introduced. One of my Foveon images now resides in the permanent collection of the Frost Museum, a Smithsonian affiliate museum, and is 5x7 feet in size. Imagine that coming from a Foveon camera, and it is really sharp. There is more to resolution than the lines of resolution that these lab folks measure. I've always said that the Foveon captures soft image detail better than Bayer sensors. I also have a Canon 5D MK II and have to sharpen the hell out of it to get an image even near the sharpness that naturally comes right out of the Foveon sensor. You are right on in your assessment of resolution, but people are so megapixel fixated that they ignore lesser brands like Sigma's new SD15, thinking they will get better images with a big name Canon or Nikon. That has not been my experience. Thanks for the article. Very interesting.

Charles, on consumer-level cameras, you don't mention the difference between so-called "back-lit" CMOS sensors (such as the kind included in the iPhone 4) and the traditional CCDs. They make a difference in terms of low-light sensitivity, don't they? As for your comments on the relative uselessness of reviews, what do you think of dpreview.com?

For those of you concerned about the "life" of a camera in harsh conditions:

There are a number of manufacturers that provide "covers" for most cameras. My personal favourite is ewa-marine, http://www.ewa-marine.com/ who make water resistant "bags" as well as excellent rain capes. I photograph underwater so these "bags" are looked at somewhat dubiously by the likes of me (they have inherent problems at depths in excess of, say, 5 metres) but they're pretty good for rafting, rain forests and the like.

As for water resistant (there is no such thing any more as water proof) cameras, yes, they will be more robust but, just like the housing for my dSLR, they can, AND WILL, drown. This is mostly, almost inevitably, because of the stupid cackhandedness of people like you and me.

Charles Maurer has produced a fascinating article on key aspects of choosing a digital camera, but there is one aspect very relevant to picture quality that he hasn't dealt with - focussing. Digital cameras with automatic focussing are notably less adept at accurate focussing than the manual focussing systems on good SLRs. I have yet to find a digital camera that has manual focussing that can match the ease of use, and accuracy, of my old Olympus OM2 35mm SLR film camera, which has an optical focussing system that splits the image into two halves; at the point where the two halves coincide, the image is in focus. Another point is that because the OM2 used its battery only for light measurement and shutter/aperture control when in auto mode, it would last for thousands of photographs. The auto exposure feature on modern digital cameras eats batteries and is one more electro-mechanical component to wear out or break down. Also, manual focussing can be done while the picture is being framed, thus speeding the camera's response when pressing the shutter. Digital cameras' laggardly response times can mostly be attributed to the time spent focussing. If digital cameras were designed by photographers rather than computer designers they would have decent manual focussing systems.

I refer, particularly, to your last sentence.

My first point would be that the reason digital cameras are designed by computer-type people is, why, that computers are exactly what they are. Digital cameras are NO LONGER cameras. Just as Charles said, actually.

Like you, I have issues with these digital thingies, and shutter lag is one of them. As well, and seemingly increasingly, viewfinders are getting smaller and smaller. That's very much an issue for me because I commonly look at that viewfinder from a long way away, through a mask and through a housing. Trust me when I say that that makes me squint.

Shutter lag is, and will remain, a problem (in extreme situations) just as long as film continues to give instantaneous results - and digital doesn't.

It will eventually but I have absolutely no idea when digital will do just that.

"The larger sensor has four times the area, so the light hitting any one spot will have only one-fourth the intensity."

No, that's not at all true. That assumes that if the larger sensor is 4x the size it has 4x the 'spots'. If the larger and smaller sensors both have the *same* number of pixels then the amount of light falling on each pixel will be the same. In reality the bigger the sensor the larger [and more efficient] the pixels. [See D3 vs. D300]

That's not correct. The area of a sensor is directly related to its sensitivity if all other factors are the same. (The same technology involved in making it, etc.)

If you have reference area X and make it four times larger in area, the amount of light that falls on the area is the same but each "point" on the sensor receives one-quarter as much light.

The bigger the sensor with the same fundamental technology, the longer exposure you need.

Smaller sensors receive a greater intensity of light than larger sensors; or sensor elements that have been engineered to be more sensitive (generation over generation, say) can make more use of fewer photons.
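
The arithmetic behind this exchange can be sketched as follows. It is only an illustration under one stated assumption: that the same total amount of light is spread over each sensor, as the quoted sentence supposes. The function names and the numbers (1000 units of light, 8 pixels) are hypothetical.

```python
def intensity(total_light: float, sensor_area: float) -> float:
    """Light per unit area when `total_light` is spread evenly over the sensor."""
    return total_light / sensor_area


def light_per_pixel(total_light: float, sensor_area: float, pixel_count: int) -> float:
    """Light collected by one pixel, assuming pixels tile the whole sensor."""
    pixel_area = sensor_area / pixel_count
    return intensity(total_light, sensor_area) * pixel_area


# Hypothetical numbers: the same 1000 units of light on both sensors,
# the large sensor having four times the area of the small one.
small_intensity = intensity(1000.0, 1.0)
large_intensity = intensity(1000.0, 4.0)

# Same pixel count on both sensors, so the large sensor's pixels are 4x bigger.
small_pixel = light_per_pixel(1000.0, 1.0, 8)
large_pixel = light_per_pixel(1000.0, 4.0, 8)

print(large_intensity / small_intensity, small_pixel, large_pixel)
```

Under that assumption, the light per unit area on the larger sensor is one quarter of that on the smaller one, while the light per pixel is the same when both sensors have the same number of pixels. Which quantity matters depends on whether one is talking about a "spot" of fixed size or a pixel.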