ARKit: Augmented Reality for More Than Gaming

Apple has a lot of kits. Not model airplanes, but software frameworks with which developers can create apps more easily. You’ve heard of HomeKit, which developers use to create home automation apps, and we’ve previously mentioned ResearchKit, which helps programmers write medical research apps. But those two are just the tip of the iceberg — Apple has ClockKit, CloudKit, GameplayKit, MapKit, PhotoKit, and ReplayKit, to name just a few, and the company announces more at every WWDC.

We seldom mention these frameworks because they’re usually of interest only to developers. But Apple’s upcoming ARKit is going to be a big deal for everyone once apps that incorporate it start appearing in iOS 11 (see “iOS 11 Gets Smarter in Small Ways,” 5 June 2017). And I’m willing to bet that we see a flood of them on day one.

The AR in ARKit stands for Augmented Reality. In contrast to virtual reality, which aims to encase you in a virtual world, augmented reality blends the real and virtual worlds by overlaying digitally generated images on live video. The most famous example of AR is the smash-hit Pokémon Go app, which I wrote about a year ago in “What the Heck Is Pokémon Go?” (17 July 2016).

How ARKit Works — Augmented reality isn’t new, and it wasn’t new when Pokémon Go hit the scene. Examples of AR have been around since the dawn of smartphones, but it has long been a relatively crude technology. For instance, the Amazon app can show you what a TV will look like on your living room wall, but you have to tape a dollar bill to the wall so the app knows where to position the TV. The InkHunter app can give you a preview of how a tattoo will appear on your arm, but it needs you to draw a smiley face where you want it to go.

Those apps need the dollar bill and the smiley face as placeholders because they can’t recognize the three-dimensional objects in your photos. That’s what Apple hopes ARKit can fix.

ARKit analyzes camera and motion data to recognize surfaces and construct planes with which digital objects can interact. Additionally, ARKit can leverage all that data to apply the correct lighting to digital objects.
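For readers who like to see an idea in code, here is a minimal Python sketch of what "placing an object on a plane" can boil down to geometrically: finding where a ray from the camera meets a flat, horizontal surface. It is an illustration only, not ARKit's API or Apple's implementation. The function name `place_on_horizontal_plane` and the simplification to a perfectly level plane at height `plane_y` are assumptions made for this example.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def place_on_horizontal_plane(
    ray_origin: Vec3, ray_dir: Vec3, plane_y: float
) -> Optional[Vec3]:
    """Return the point where a camera ray meets the plane y = plane_y.

    Hypothetical helper: returns None when the ray never reaches the plane.
    """
    ox, oy, oz = ray_origin
    dx, dy, dz = ray_dir

    # A ray with no vertical component runs parallel to a level plane.
    if abs(dy) < 1e-9:
        return None

    # Distance along the ray (in multiples of ray_dir) to the plane.
    t = (plane_y - oy) / dy

    # A non-positive t means the plane lies behind the camera.
    if t <= 0:
        return None

    return (ox + t * dx, plane_y, oz + t * dz)


if __name__ == "__main__":
    # Camera held 1.5 units above the floor, tilted down and forward.
    print(place_on_horizontal_plane((0.0, 1.5, 0.0), (0.0, -1.0, -1.0), 0.0))
```

A real app would also have to work out where the plane is in the first place, which is the hard part that the framework handles.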

In short, ARKit makes it so digital objects can interact with real-world surfaces and their appearance will vary based on changing lighting conditions so they blend in as naturally as possible. Let’s look at some examples.

In this video, a robot dances around a living room, landing perfectly on the floor with each step. Note how the reflection from the lamp light follows the robot around.

Is your mind blown yet? Hold on, there’s more. A developer used ARKit to create a portal between virtual and real worlds. In the video, the developer walks through the virtual door, around a virtual world, and looks back out at the real world.

One last example. Check out this video. It’s just two guys playing basketball, right? Look closer. The players have been inserted into the scene digitally.

Remember that we’re in the earliest stages of ARKit testing and development. Developers are just getting started.

Maybe you’re impressed, but you may also be thinking, “That’s neat, but what use is it?” While many of the initial applications will be for gaming, I anticipate that we’ll see plenty of useful applications.

ARKit in the Real World — After Apple releases iOS 11, tape measure apps will be the new flashlight apps — expect a bunch of them. There are already several working concepts, but this video shows the most impressive I’ve seen yet.
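Once an app knows where two points sit in 3D space, the "tape measure" part is simple arithmetic. The Python sketch below assumes both points are expressed in the same coordinate space, in meters (the unit ARKit uses for world coordinates). The function names and the sample points are hypothetical, and this is not how any particular app in the video is known to work.

```python
import math

INCHES_PER_METER = 39.3701


def distance_m(a, b):
    """Straight-line distance between two 3D points given in meters."""
    return math.sqrt(sum((q - p) ** 2 for p, q in zip(a, b)))


def meters_to_inches(meters):
    """Convert a length in meters to inches."""
    return meters * INCHES_PER_METER


if __name__ == "__main__":
    # Two made-up points, e.g. the ends of a tabletop.
    start = (0.0, 0.0, 0.0)
    end = (3.0, 4.0, 0.0)
    length = distance_m(start, end)
    print(f"{length:.2f} m is about {meters_to_inches(length):.1f} in")
```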

But the concept of using AR to measure the real world can be taken further. Here’s a video of an app that can measure the square footage of an entire room.
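As a rough illustration of the math such an app might use, the Python sketch below computes floor area from the corners of a room with the standard shoelace formula. It assumes the corners have already been marked on the floor plane, are listed in order around the room, and are given as (x, z) pairs in meters. The function names are hypothetical, and nothing here is taken from the app in the video.

```python
FEET_PER_METER = 3.28084


def floor_area_m2(corners):
    """Area in square meters of a simple polygon, via the shoelace formula.

    `corners` is a list of (x, z) floor coordinates in meters, in order
    around the room (clockwise or counterclockwise).
    """
    total = 0.0
    count = len(corners)
    for i in range(count):
        x1, z1 = corners[i]
        x2, z2 = corners[(i + 1) % count]  # wrap around to the first corner
        total += x1 * z2 - x2 * z1
    return abs(total) / 2.0


def m2_to_sq_ft(area_m2):
    """Convert square meters to square feet."""
    return area_m2 * FEET_PER_METER ** 2


if __name__ == "__main__":
    # A made-up 4 m by 3 m rectangular room.
    room = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.0, 3.0)]
    area = floor_area_m2(room)
    print(f"{area:.1f} square meters, about {m2_to_sq_ft(area):.0f} square feet")
```

The same function handles L-shaped rooms, as long as the walls do not cross one another.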

Speaking of rooms, as someone who has spent all summer moving furniture around, I’m excited by this video of a concept app by developer Asher Vollmer, which lets you use AR to place furniture in a room.

Playing with ARKit — If you’re an Apple developer, you can experiment with Apple’s ARKit demo app, which lets you place a few simple objects in AR. You’ll have to use Xcode to compile the project and install the app, but it’s not too onerous if you know what you’re doing. I’m no programmer, and I was able to get it working.

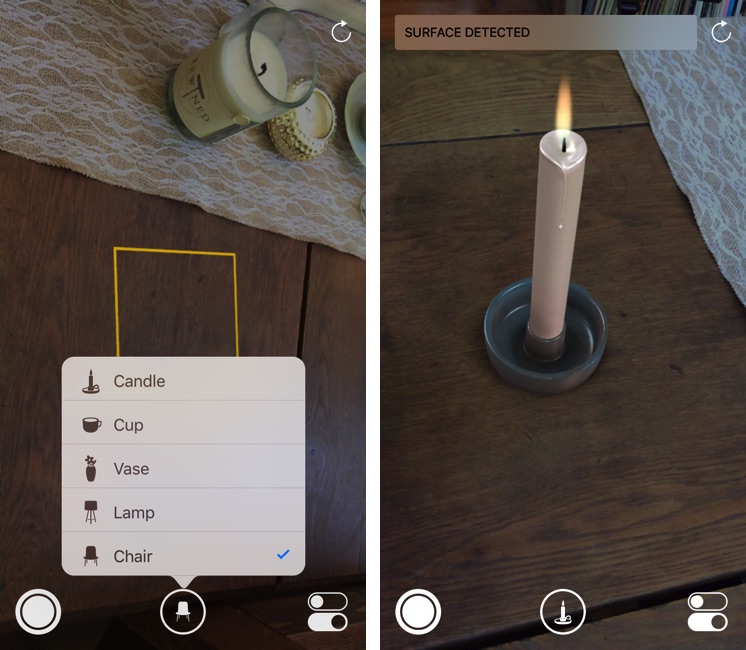

If you don’t want to fuss with the ARKit demo app, I’ll give you a taste. It works a lot like the Camera app. The app scans for surfaces onto which it can place objects, and when it recognizes one, it puts a yellow square on it. The center button lets you choose an object to place: a candle, cup, vase, lamp, or chair. Once you’ve positioned an object, like the candle in the screenshot, it looks and acts like a 3D object in real space.

The virtual chair makes for a better demonstration. I can walk around it just as though it were a real chair.

I can even put my foot under it, and it’s an odd feeling to see my foot disappear under something that I know isn’t really there.

ARKit apps have many potential uses around the house. Instead of moving all your furniture around to figure out where it fits best, you could use ARKit to place objects around the room, saving yourself a lot of work and back pain. Or, an ARKit app might help you visualize what paint colors or carpeting will look like in your house as the lighting changes throughout the day.

Likewise, ARKit will appear in all sorts of shopping apps. Imagine being able to use AR to place a sofa in your living room before ordering it. One app shows what this could be like, using a pillow as an example.

Alper Guler shows how ARKit could help us decide what to order at a restaurant. Ever glanced over at other diners to see what their dishes looked like before making your choice? What if you could see every item on the menu right in front of you at the table? Here’s a video showing that.

ARKit will also prove useful when traveling in an unfamiliar city. This demo video shows how an app can pair ARKit with Core Location to identify points of interest on a skyline and to draw AR direction lines you can follow as you walk down the street.
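One ingredient an app like that might need is the compass bearing from where you are standing to a landmark, so it knows where along the skyline to draw a label. The Python sketch below uses the standard initial-bearing formula for two latitude/longitude pairs. The function name is hypothetical, the coordinates in the usage example are made up, and the code is neither taken from the demo nor part of any Apple API.

```python
import math


def initial_bearing_deg(lat1, lon1, lat2, lon2):
    """Compass bearing in degrees (0 = north, 90 = east) from point 1 to point 2.

    Inputs are latitude and longitude in decimal degrees.
    """
    phi1 = math.radians(lat1)
    phi2 = math.radians(lat2)
    delta_lon = math.radians(lon2 - lon1)

    x = math.sin(delta_lon) * math.cos(phi2)
    y = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(delta_lon))

    # atan2 returns -180..180 degrees; shift into the 0..360 compass range.
    return (math.degrees(math.atan2(x, y)) + 360.0) % 360.0


if __name__ == "__main__":
    # Made-up viewer and landmark positions.
    print(initial_bearing_deg(40.0, -75.0, 40.01, -74.99))
```

An app could compare that bearing with the direction the phone is facing to decide whether the landmark is on screen.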

The more I use ARKit, the more I believe that Apple has to be thinking about taking it into products that go beyond the iPhone and iPad. Walking down the street holding an iPhone in front of your face to see how your real world has been augmented will be awkward (as we know from Pokémon Go players). It’s begging to be integrated into a pair of glasses.

We’ve had a taste of that with Google Glass. While the initial technology was promising, it suffered from bugs and somewhat overblown privacy concerns (it’s not like people weren’t already taking pictures and recording video with their phones). However, Glass’s second life in the industrial world (see “Google Glass Returns… In Factories and Warehouses,” 19 July 2017) may indicate that the initial release of Glass simply lacked a killer app. With the kinds of augmentation possible with ARKit backing it up, Apple may be able to create electronic eyewear that would be both functionally compelling and socially acceptable.

Great article! Informative with just the right amount of examples to illustrate what is being discussed.

I can imagine many great uses for this ARKit. For example, we are in the process of planning a remodeling of our kitchen and being able to visualize what we are thinking about would be very helpful.

I'm looking forward to the apps that are created using ARKit!

Very exciting -- as Josh notes, AR has been around for a long time, but finally the development barrier is being lowered, with the prospect of availability of many more useful apps coming to us soon.

[Can't help being bugged by all the vertical-format videos. Have these folks never been to a movie theater?]