CES 2018: Tech Trends from the Consumer Technology Association

Welcome to CES 2018, which started for me 18 minutes before registration, when I read this serendipitous tweet by @fro_vo:

ROBIN: the batmobile won’t start

BATMAN: check the battery

ROBIN: what’s a tery

This Bat-channel will likely be replete with coverage of Bat-teries and other charging technologies, as well as anything else under the sun that can be appified, cloudified, or smartified.

(And some things that shouldn’t; as per usual, I have bookmarked several booths on the basis that their products are Bat-poop crazy. This year’s hot baffling trend appears to be adding blockchains to things for no reason.)

CES starts with two days dedicated to press briefings and events, and I always start my show with the generally excellent presentation from the Consumer Technology Association, the people running this show, on the statistics and trends of the industry. This year the speakers were Steve Koenig, Senior Director of Research, and Lesley Rohrbaugh, Senior Manager of Research.

5G Cellular Networks — They started with an update on the forthcoming 5G network, about which I was largely bullish last year but skeptical about aspects of its implementation (see “Ideas from CES 2017: 5G in Your Future,” 19 January 2017). The first networks will be operational (but not yet available to consumers) this year, and work is proceeding on the 5G New Radio standard that will be its underpinning.

5G will be blazingly fast — a 2-hour movie download will take under 4 seconds — and with that, new applications and technologies will come along which no one has quite thought of yet. It’s just how humans use computers and gadgets: you act differently when something takes long enough that you can go make a pot of coffee, versus so quickly that you only have time to take a sip of coffee.
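To put that download claim in perspective, here is a rough back-of-the-envelope sketch in Python. The 4 GB file size is purely an assumption for illustration (the presentation gave no file size), and `required_gbps` is a hypothetical helper name.

```python
def required_gbps(file_size_gb: float, seconds: float) -> float:
    """Average throughput in gigabits per second needed to move
    file_size_gb (decimal gigabytes) in the given number of seconds."""
    bits_per_byte = 8
    return file_size_gb * bits_per_byte / seconds


# Assumed example: a 4 GB movie file downloaded in 4 seconds.
movie_gb = 4.0  # assumption, not a figure from the presentation
print(required_gbps(movie_gb, 4.0))  # 8.0
```

Under that assumption, "under 4 seconds" means sustaining more than 8 gigabits per second; a larger or smaller file scales the number proportionally.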

The physics behind this is millimeter wave spectrum, which uses 30 to 300 GHz, radio frequencies roughly 12 to 125 times higher than what’s underneath standard 2.4 GHz Wi-Fi. Radio waves in that part of the spectrum allow for faster speeds and low latency. On the downside, they’re more easily blocked by structures, so cell phone networks will need additional tiny towers called “small cells” to cover everywhere. Mentioned in passing by the speakers: 5G will bring “new business models,” which is what I was skeptical about last year; U.S. carriers won’t pass up such an opportunity to raise our monthly service fees.
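The frequency comparison above can be checked with a little arithmetic. This is a minimal sketch that uses only the free-space relation wavelength = speed of light / frequency; the constant and function names are illustrative, not from the presentation.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter
WIFI_GHZ = 2.4  # the Wi-Fi band used as the baseline in the text


def wavelength_mm(freq_ghz: float) -> float:
    """Free-space wavelength in millimeters for a frequency in GHz."""
    return SPEED_OF_LIGHT_M_PER_S / (freq_ghz * 1e9) * 1000


# Compare the Wi-Fi baseline with both ends of the 30 to 300 GHz band.
for freq_ghz in (WIFI_GHZ, 30.0, 300.0):
    ratio = freq_ghz / WIFI_GHZ
    print(f"{freq_ghz:6.1f} GHz: {wavelength_mm(freq_ghz):7.2f} mm, "
          f"{ratio:6.1f}x the Wi-Fi frequency")
```

The two ends of the band work out to wavelengths of about 10 mm and 1 mm, which is where the name “millimeter wave” comes from. Shorter wavelengths are also part of why these signals are more easily blocked by structures.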

Artificial Intelligence — Artificial intelligence is also making waves, embedded as it is in everything from Siri to the technology driving major companies. It increasingly relies on a strategy called “deep learning,” in which an AI mines huge amounts of data for trends and patterns and invents AI procedures that humans not only can’t program themselves, but whose outcomes can’t even be predicted. Instead, we set parameters for these outcomes, and the AI decides how to achieve them.

Sometimes we get it wrong, like the time Microsoft accidentally invented a Nazi Twitter chatbot. One of the presentation’s slides rather amusingly said that AI “will generate societal impacts,” which is an understatement. Done right, AI might create undreamed-of capabilities, and done wrong, it could hand over huge amounts of human autonomy to computers. (Just imagine what happens when AI decisions become company policies that can’t be altered. “Sorry, it’s policy, nothing I can do.”)

The most common way people interact with an AI is when they talk to smart speakers, or with Siri or Google Assistant on their phones. Those are just two of the voice options; you might also interact with Amazon’s Alexa, Microsoft’s Cortana, or Samsung’s Bixby. (Perhaps a company known for exploding phones shouldn’t have named their assistant after this guy.)

This situation has the advantage of competition (the engineers developing Siri can see what everyone else is doing and act accordingly), but the problem is that these standards are not interoperable. If you want to smarten up your house with voice-controlled devices, it’s a good idea to pick one ecosystem and stick with it. That could be frustrating if you prefer Siri and you’re still waiting for a HomePod, and more frustrating still if you regret your choice in 2019 when a different ecosystem gets a feature you really want. (Some third-party speakers support multiple standards; those devices could migrate with you if you decide to switch horses midstream or if you want to use different AI assistants for different things.)

Coming soon, perhaps, will be true conversational capabilities. So long, “Hey Siri, set a timer for 30 minutes,” and hello, “Siri, if I don’t leave in 30 minutes, my spouse is going to kill me.” This raises interesting questions about how we interact with these devices; many people in the U.S. already think of Siri as a “her” instead of an app, and when you think a program is a person, weird things can happen.

Consumer electronics developers love this idea and are rushing towards it, calling the trend “conversations to relationships.” They want you to have warm, fuzzy feelings about the apps you’re talking to. Put the app’s voice into a stuffed animal or a cute robot, and the emotional stakes get raised even higher.

Researchers are also working on how to build trust between humans and their assistant AIs. Rachel Bellamy at IBM predicts that within five years, you’ll be able to ask your AI why it’s making a particular recommendation, and it will be able to tell you.

The dark side of this brave new world came from a slide saying that voice AIs are “the fourth sales channel,” which to my mind brings thoughts of Siri and Google Assistant mixing advertising with their information. Arguably, this is a key feature of Alexa, which was mentioned on the slide.

One particularly interesting moment in the presentation came when Koenig was discussing AI implementations and showed a slide of someone cuddling with a “sleep robot.” Rohrbaugh quipped, “Steve, would you sleep with a robot?” Koenig replied something to the effect of probably not, to audience laughter. That laughter is the interesting part: a roomful of technology enthusiasts and journalists thought “sleep with a robot” was funny.

But there are companies developing sex robots (which I don’t cover, but some of those technologies are here), and while it sounds strange in 2018, ask someone in 1998 how they’d feel about going on the Internet and paying to get into a stranger’s car. In the meantime, perfectly G-rated technologies that intrude on private and sensitive spaces, like the bedroom, have hurdles to overcome. You can’t sell many units when your most likely early adopters burst into laughter about using the product.

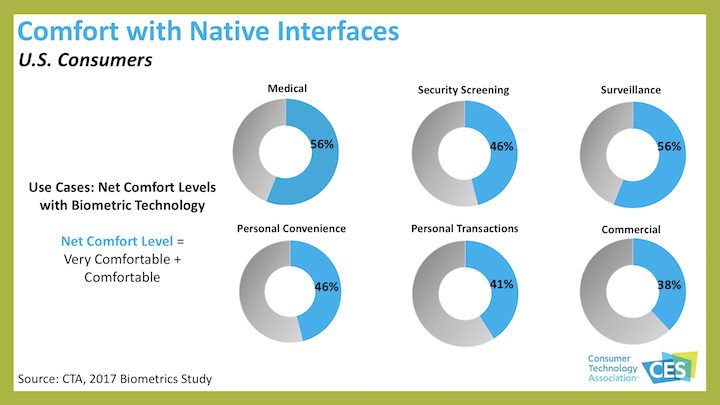

Biometrics — Next up: more biometrics, which is part of what CTA calls “digital senses.” In just the last few months, some people were up in arms about trading fingerprint sensors for facial recognition on the new iPhone X. The question isn’t whether we’re using biometrics, it’s where and how often.

That said, a surprisingly large number of people aren’t comfortable with the idea in concept — about half, depending on where it’s used. I find it odd that only 46 percent of respondents are happy with personal convenience biometrics, though I wonder if they even realize this includes Touch ID. I’m also unhappily surprised that 56 percent are cool with biometrics being used for surveillance.

AR, VR, and MR — The other half of CTA’s “digital senses” category is “realism redefined,” which means augmented reality, virtual reality, and mixed reality (abbreviated AR, VR, and MR). AR is adding information to a real scene, as with a phone showing you the restaurant its camera is pointing at and overlaying the menu. VR replaces your surroundings with a created environment, such as a Star Wars video game. MR is a term I’m first hearing this year; it’s putting virtual objects into real environments, like the game demoed at WWDC last year which put a futuristic space colony onto a tabletop. We’ll see if the term catches on, or if people just consider it another form of AR.

In my opinion, AR and MR are going to be huge in ways we don’t yet comprehend, in much the same way (and perhaps as transformative) as what happened in the 1990s when everyone realized this Internet thing let you send email to anyone in the world, for free.

I’m confident that AR is on the same path. After it’s in common use, probably with some kind of glasses (eventually ones indistinguishable from today’s normal eyeglasses), people will think of our current unenhanced reality with the same lack of comprehension that we have of the time before cell phones or answering machines. (I can remember it, but I’m not sure how I survived it.)

Just imagine having Google Maps as a heads-up display, in your glasses, and it’s always and instantly on when you want it. If you want to find your friend in a large crowd, you’ll have X-ray vision. In the meantime, as Koenig said, right now everyone is walking around looking down at their phones; how much better will it be when people are looking through their phones and interacting more with their surroundings?

I’m less certain of VR being transformative outside of limited applications. It has exciting possibilities in medicine, such as current experiments allowing people to recover from post-traumatic stress faster than with traditional approaches. Sports fanatics may explode with joy when they can watch a game from on the field, and of course, VR has applications in gaming. Facebook is already exploring group VR experiences, which to me look like a mix between Second Life and an episode of Black Mirror.

Beyond Consumer — Meanwhile, the reality we’re augmenting is likely to get smarter as well. One of the reasons that CES changed its name from the Consumer Electronics Show (it’s now just “CES,” technically no longer an acronym) is that the technologies here are not just personal.

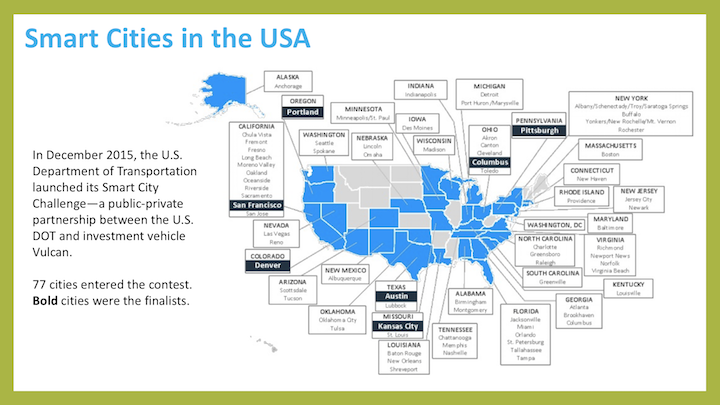

The term “smart cities” is moving from buzzword to at least test implementation; the idea is to make an urban area into a big data soup, which can then be mined and processed for much better management. On the one hand, consider potholes that are scheduled to be fixed while they’re still small, because ubiquitous urban cameras spot them. On the other hand, ponder the advantages and disadvantages of having your city government able to watch you 24/7 and get summaries of what you’re doing.

Then further consider what happens when police start integrating smart house data into public safety. How many politicians will be able to resist calls for “better safety” by handing over smart speaker data to the police? Amazon fought a police department’s request for Alexa data, but that was in a jurisdiction where no law said Amazon had to turn it over. We’ll see this tested again in the future.

For now, the “smart city” term is highly elastic; it includes both the somewhat Orwellian future I just mentioned and a small first step from the U.S. Department of Transportation called the Smart City Challenge. Yes, improving urban transportation is highly worthwhile, but it’s just a tiny part of the overall concept, and it leads to overblown slides like this one, which make us look much further along than we are. (Only the cities in black were accepted to the program, and that means they’re doing studies and making plans, not actually building stuff.)

That’s your taste of the future. In my next article: the first look at the actual gadgets that are already here.