The Case for ARM-Based Macs

Persistent rumors suggest that Apple will switch from the Intel x86 processors in current Macs to ARM processors like Apple’s A series of chips that power iOS devices. Apple has said nothing about such a transition, but that’s par for the course for Apple. Recently, however, Mark Gurman at Bloomberg wrote about Apple moving to ARM-based Macs in 2021 (“Bloomberg Reports Apple Will Start Transitioning the Mac to ARM in 2021,” 27 April 2020) and he followed it up this week with another piece suggesting that an announcement might come on 22 June 2020 at Apple’s Worldwide Developers Conference. While Bloomberg has its problems (see “Apple Categorically Denies Businessweek’s China Hack Report,” 8 October 2018), Gurman is known for reliable sources and accurate reporting.

ARM is by far the most popular processor family in the world. While there are several billion Intel PCs in the world, there are over 100 billion ARM devices. When Apple designed Intel-based Macs, they were the first major products Apple had ever made with x86 chips. But Apple has lots of experience with ARM chips. The first Apple device to use an ARM processor was the Newton in 1993. Since then, Apple has put ARM processors into the iPod, iPhone, iPad, Apple Watch, and Apple TV.

Apple has successfully switched the Mac’s processor twice before. In 1994, Apple moved from the Mac’s original Motorola 68000 processors to IBM PowerPC processors. And in 2006, the company ditched the PowerPC in favor of Intel x86 processors. Both transitions were fairly smooth due to years of testing—Apple maintained a version of Mac OS X running on Intel chips years before the first Intel Macs shipped. Apple almost certainly has a version of macOS running on ARM right now, in some secret lab.

I don’t have any inside information on whether Apple is working on ARM-based Macs. But let’s look at the pros and cons of switching from Intel to ARM.

The Obvious Win: Reduced Power Consumption

The most commonly cited advantage for ARM processors is lower power consumption. It’s true that ARM processors use less power than Intel’s x86 processors. Part of this advantage comes from ARM’s relatively clean, modern design, as set against the years of baggage that Intel has accumulated since the original 8086 processor. Perhaps even more important is the way ARM would allow Apple to add the specific support it needs into its own ARM chip designs, instead of relying on off-the-shelf parts that Intel has designed for generic PC implementations.

Lower power consumption would lead to better Macs in several ways. Most obviously, the battery on your laptop would last longer. Instead of 8 hours, a new ARM-based Mac laptop in the same form factor might get 12 hours from a single charge. But Apple is always trying for thinner, lighter laptops. Apple might decide that 8 hours of battery life is fine for most users and instead use a smaller battery in an even skinnier, featherweight laptop design.
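The trade-off in that paragraph is simple arithmetic: runtime is battery capacity divided by average power draw. Here is a minimal sketch in Python. The 48 Wh battery and the 6 W and 4 W power figures are made-up numbers chosen only to reproduce the 8-hour and 12-hour examples above; they are not measurements of any Mac.

```python
def runtime_hours(capacity_wh, avg_power_w):
    """Hours of battery life for a given capacity and average power draw."""
    return capacity_wh / avg_power_w


def capacity_needed_wh(target_hours, avg_power_w):
    """Battery capacity required to reach a target runtime."""
    return target_hours * avg_power_w


# Hypothetical figures, for illustration only.
BATTERY_WH = 48.0
INTEL_POWER_W = 6.0
ARM_POWER_W = 4.0

# Same battery, lower power draw: longer runtime.
intel_hours = runtime_hours(BATTERY_WH, INTEL_POWER_W)
arm_hours = runtime_hours(BATTERY_WH, ARM_POWER_W)

# Same 8-hour runtime, lower power draw: smaller battery.
smaller_battery_wh = capacity_needed_wh(8.0, ARM_POWER_W)
```

With these assumed numbers, the same battery lasts 12 hours instead of 8, or a battery two-thirds the size still delivers 8 hours, which is the choice between longer life and a thinner case.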

Reduced power usage would also result in less heat generated by the processor, which would mean smaller heat sinks and less fan noise. That would bring benefits to both laptops and desktops. Computers that run close to their thermal design limits, like the iMac Pro, could get more powerful processors in the same design or smaller cases with the same processing power.

But lower power wouldn’t be the only benefit of switching to ARM, or even the main benefit.

Apple’s True Motivations: Control and Profit

Apple wants to control its own destiny, and the best way to do that right now is to control the processor roadmap. Roadmap refers to future development plans: what features are added, in what order and on what schedule, which fabrication plants and processes are used, how many processors are produced, and how many units are allocated to each manufacturer. Apple doesn’t want to depend on Intel for these key decisions. As Tim Cook has famously said, “We believe that we need to own and control the primary technologies behind the products we make.”

The other reason for switching from Intel to ARM is profit. Intel processors are high margin products, and Apple wants to capture that lucrative margin for itself rather than paying it to Intel.

In short, Apple’s main reasons for switching from the Intel x86 architecture to the ARM architecture are business, not technical. Let’s look at these and related business decisions.

Roadmap

Controlling the processor roadmap lets Apple better control its products. Rather than being stuck with the components Intel puts in a particular chipset, Apple can custom design a System On a Chip (SOC) specifically for the Mac, just as it has for iOS devices for years now. Apple could control the number and type of cores, the digital-signal processor media cores, the size of the data and instruction caches, the memory controllers, USB controllers, Thunderbolt controllers, etc. Apple would control not just a single chip, but the entire direction of the processor line.

Unlike PC vendors, who license Windows from Microsoft or ChromeOS from Google, Apple also controls the operating system. This gives Apple a huge advantage over its competitors. Apple’s latest iPhone SOCs include both fast and slow cores, which the company prefers to call “performance” and “efficiency” cores. The advertised speed for a computer, like “3 GHz processor,” is the speed of the fast cores. When you do something processor-intensive, like rendering video in Final Cut Pro X or compiling an iPhone app in Xcode, those tasks would spin up all the fast cores. When you’re writing an email message or reading a Web page, the Mac hardly needs to do anything. Right now, all macOS can do is run the main Intel processor at a slower speed. With a custom ARM-based SOC with fast and slow cores, macOS could switch to slower, more energy-efficient cores. Dynamically switching cores depending on the task is key to saving energy.
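To make the idea of switching cores concrete, here is a toy model in Python. It is only a sketch: the core speeds, power figures, and the deadline-based rule are assumptions invented for illustration, not a description of how macOS or iOS actually schedules work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Core:
    name: str
    speed: float  # work units per second (hypothetical)
    power: float  # watts while busy (hypothetical)


# Made-up figures: the fast core is 4x quicker but draws 8x the power.
PERFORMANCE = Core("performance", speed=4.0, power=4.0)
EFFICIENCY = Core("efficiency", speed=1.0, power=0.5)


def pick_core(work_units, deadline_s):
    """Prefer the efficiency core whenever it can finish before the deadline."""
    if work_units / EFFICIENCY.speed <= deadline_s:
        return EFFICIENCY
    return PERFORMANCE


def energy_joules(core, work_units):
    """Energy used = power while busy x time spent busy."""
    return core.power * (work_units / core.speed)


# Light work (say, redrawing an email window) fits on the slow core.
light = pick_core(work_units=1.0, deadline_s=2.0)

# Heavy work (say, a video render) needs the fast core to finish in time.
heavy = pick_core(work_units=40.0, deadline_s=15.0)
```

Under these assumed figures, the light task costs half as much energy on the efficiency core as it would on the performance core, even though it takes longer to finish.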

In its A series chips for iOS devices, Apple also has custom-designed media cores for tasks like decoding video for a movie, audio for a podcast, and encryption. While Intel chips have similar features, with a custom chip, Apple could optimize for the media formats and encryption algorithms most common on Macs. And since Apple also controls macOS, it can ensure that macOS algorithms and processor cores are perfectly matched, again ensuring that they consume less power for any given task. When Apple engineers improve their algorithms, they can update their next-generation media cores to perfectly support the improvements, without those improvements also becoming available to competitors.

Much of the code in a modern Mac app just glues together macOS API calls to accomplish a task. For many apps, the bulk of the processor-intensive work happens in macOS. This means Apple can optimize much of the work that apps do for the new ARM processors, even before third-party developers become expert at exploiting the new ARM processors themselves. For instance, playing a movie mostly consists of calling on macOS, which does the heavy lifting of decoding the video using Apple’s optimized media cores.

Intel’s Production Problems

Over the last few years, Intel has suffered a series of production problems. Many of them were the result of moving to smaller processes, that is, etching smaller parts onto the silicon. Smaller processes create smaller chips that use less power and generate less heat. Although we’ll never know for sure, one likely reason Apple had a long dry spell releasing new Macs was Intel’s tardiness in providing the new chips Apple needed. This had to impact the number of Macs sold. Although Apple never complains about partners publicly, it obviously isn’t happy with Intel.

Buying Intel chips makes Apple dependent on Intel chip fabs. When Apple designs its own chips, it can use whatever fab it likes. Apple currently relies on TSMC and Samsung for its A series chips, but if those companies have problems meeting Apple’s needs, Apple can use another fab, assuming it has equivalent capabilities. Apple prefers having multiple sources for components.

Sometimes production problems are technical, like Intel’s were. But they can also be political, like tariffs applied to Chinese goods, or natural disasters like the floods that closed Thai hard disk factories in 2011 and caused worldwide shortages. Multiple sources mean that problems with one vendor won’t halt production.

Profit

Next to the screen, the processor is one of the most expensive parts in a computer. The processor isn’t just expensive; it’s also high margin. In high production volumes, processors are sold for much more than they cost to build. Relying on Intel processors means Intel earns those rich margins instead of Apple. Designing and building its own processors would let Apple capture those margins. Apple can keep selling computers at the same price and book the additional profit, or it can sell the same computers for less, without compromising the company’s famously fat margins.

Although it might seem as though Intel and ARM are competitors, they actually have utterly different business models. Intel designs the processor and all the associated support components like memory controllers. It integrates them into a System On a Chip. It manufactures the chips in its own fabs. And while it sells the chips directly to computer manufacturers, it also markets its brand to the public (“Intel Inside”). Intel makes most of its money selling high-end processors. The fastest processors have the fattest margins but are quickly obsoleted by even faster processors, so Intel is always pushing the envelope. Intel has a few rivals like AMD, but for the most part, Intel is the dominant vendor of high-end processors.

ARM (previously known as Advanced RISC Machines, now Arm Limited) works completely differently. ARM designs processors and licenses the designs. ARM doesn’t supply supporting components or build its own chips. The licensee integrates the ARM processor and supporting components into a SOC—this is what Apple does for its A series of chips. ARM processors are inexpensive and have low margins, so ARM makes money on volume. There are many, many more ARM processors than Intel processors—I heard someone say that, to a first approximation, all processors in the world are ARM processors.

Intel makes a lot more money than ARM because Intel CPUs are expensive high-performance chips, and Intel designs, builds, and sells the processors. ARM just licenses its designs, and most of those are inexpensive low-power designs.

But Apple is successfully scaling up the performance of its ARM-based processors to compete with Intel’s processors. The ARM business model lets Apple make competitive parts much more cheaply and capture that margin itself.

Related Savings

Using the same processor in all Apple products would be more efficient company-wide. Apple’s hardware teams would have to support only one processor architecture, one memory controller, and one I/O system. Most apps written in a high-level language like Swift or Objective-C wouldn’t need a lot of modification. Low-level software like boot code and device drivers could be shared. Development tools and the App Store would save work targeting a single instruction set architecture.

Of course, these savings will take several years to materialize. In the meantime, Apple will support Intel-based Macs for customers and developers for a few years.

What Would the Transition Look Like?

Apple’s transition from IBM’s PowerPC architecture to Intel x86 was fairly quick—the entire Mac line switched in less than a year. While Apple could switch to ARM that quickly, the company might proceed more slowly. The most obvious customer advantage comes in smaller laptops like the MacBook Air. An ARM-powered MacBook Air could be more powerful than its Intel predecessor, with longer battery life, while simultaneously being thinner and lighter: a winning package. The ARM SOCs in the current iPads are already more powerful than the Intel processors in most of Apple’s laptop line, so transitioning all Apple laptops to ARM makes sense.

The Mac mini isn’t any more powerful than a high-end MacBook Pro and could be powered by the same ARM processor. Users of the iMac, and especially the iMac Pro, probably want a more powerful processor than any ARM chip shipping now, since the goal isn’t just to match currently shipping products, but to surpass them. An ARM chip powerful enough for an iMac seems well within Apple’s immediate roadmap.

The issue is the Mac Pro, which relies on high-end Intel Xeon processors. It’s certainly possible for Apple to develop a competitive ARM processor—the only question is how long that would take. Also, the sales volume of the very expensive Mac Pro is probably fairly low, meaning that a custom ARM SOC developed for it is unlikely to ever sell in high enough quantities to amortize the cost of its development on its own. Apple will have to consider it part of the overall cost of moving to ARM.

As long as Apple sells and supports any Intel Macs, it must build, test, and maintain two versions of macOS, two copies of every app, and two sets of Xcode development tools and App Store infrastructure. This effort will come at a significant cost. Once the transition starts, Apple will want to move past the Intel era as quickly as is practical.

In past transitions (Motorola 68000 to IBM PowerPC, then PowerPC to Intel), Apple included an emulator in the operating system that ran apps written for the previous processor family. It’s logical to assume Apple would include an emulator for running Intel apps on the new ARM Macs. Previous emulators worked with near-perfect fidelity, and an Intel emulator on ARM ought to have excellent fidelity too.

But to take full advantage of the new ARM chips, third-party developers would have to recompile their Mac apps for ARM and submit updates to the App Store. Some minor changes may be required, but it shouldn’t be too much work. The App Store accepts apps encoded in the LLVM intermediate language, which allows developers to submit one compiled version of their app, which the App Store then translates for iPhone models with slightly different ARM processors. But the LLVM intermediate language isn’t robust enough to translate an Intel app into an ARM app.
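The choice between emulated and recompiled apps can be pictured as a decision made at launch time. The Python sketch below is purely illustrative: the function name and the architecture labels are assumptions made for this example, and nothing here reflects a mechanism Apple has announced.

```python
def choose_launch_path(app_architectures, host_arch="arm64", emulated_arch="x86_64"):
    """Return how a hypothetical launcher would run an app on an ARM Mac.

    app_architectures: the set of processor architectures the app was built for.
    """
    if host_arch in app_architectures:
        # The developer has recompiled for ARM: run at full speed.
        return "native"
    if emulated_arch in app_architectures:
        # Intel-only app: fall back to the (assumed) emulator.
        return "emulated"
    return "unsupported"


recompiled_app = choose_launch_path({"x86_64", "arm64"})
intel_only_app = choose_launch_path({"x86_64"})
```

An app built for both architectures runs natively, while an Intel-only app still launches but pays whatever performance cost the emulator imposes.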

What About Windows?

One casualty of an ARM transition may be Microsoft Windows compatibility. Current Macs use the same Intel processors as Windows PCs, letting you run Windows and its apps at full speed. Apple makes it easy to boot your Mac as a Windows PC with Boot Camp, and third-party virtualization products like VMware Fusion and Parallels Desktop run Windows inside macOS. If Macs no longer have Intel chips, they won’t be able to run Windows, at least the mainline version compiled for Intel processors, natively.

There are several other options. Apple’s Intel x86 emulator might support running Windows too. There were Windows emulators for PowerPC Macs, but they were never as fast as Windows running on a real PC. The performance may be good enough for occasional tasks that require Windows, but it will probably be unsatisfactory for gaming or other hardcore use.

Plus, Microsoft released Windows for ARM for its Surface Pro X. But most third-party Windows software isn’t available in an ARM-compatible version. While there may be a vocal minority of Mac Windows users, they are probably too few for Apple to care about.

ARM in Your Future

The case for ARM Macs is compelling. Long term, it doesn’t make sense for Apple to support two processor families, so the entire Mac product line will probably move to ARM eventually. If the transition to ARM goes as smoothly as the transitions to PowerPC and Intel did, customers have much to gain and little to fear.

A few things:

Apple doesn’t use ARM processor designs. They only license the instruction set; the A-series chips are from-scratch Apple designs. This is what makes it possible for iPhones to so completely outperform contemporary Android phones.

One possibility I haven’t seen mentioned anywhere in the backward compatibility department is that early ARM Macs could include an X86 processor to handle running software written for the old system. This could be built in, or it could be in an external peripheral, functioning in much the way an eGPU does. The Mac Pro could even have such a thing on a PCI Express card or MPX module.

In the past fifteen years, a lot of developers have moved to Mac because it provides an X86+Unix environment, which is a huge boon when developing software that will eventually deploy to a cloud environment, where Linux on X86 is king. The differences between BSD and Linux notwithstanding, this has made the Mac the machine of choice for a huge community of web and open source developers. We can even use tools like VMware, VirtualBox, Docker, and Kubernetes to mimic our target deployment environments. Windows has only fairly recently gotten similarly good at this stuff, with the addition of WSL. I have significant concerns that those tools will take quite some time to migrate to and mature on ARM, and any period of time where you can’t do that on the latest and greatest Macs is going to send many devs running to Windows, Ubuntu, or some other Linux.

Bring back “Houdini!”

http://www.edibleapple.com/2009/12/09/blast-from-the-past-a-look-back-at-apples-dos-compatibility-cards/

Well, it’s one reason. Another is that all Android apps execute via a Java-like virtual machine. This has the benefit of allowing apps to run on phones with very different CPU architectures, but that comes with a substantial performance penalty.

I think @JakeRobb makes a very good point about Windows Subsystem for Linux becoming a factor for certain kinds of users leaving macOS behind.

However, Linux for ARM has also improved a lot, and the cloud providers (Amazon in particular) have started to support more workloads on ARM targets. Support for ARM-based containerisation might therefore improve, but it could still be problematic as long as x86 remains the de facto standard.

Maybe hybrid designs are on the way, with ARM as extension cards… or Intel! (Intel outside). We’ll see!

Or a hybrid approach, where a handful of custom instructions are added to the ARM core to facilitate efficient emulation. When writing emulators (in the 1980’s, so I’m not sure how relevant these observations are to the modern x86 instruction set) I was often struck by how much of a bottleneck could be created by having to perform just one or two primitives in software that weren’t well-supported by the host instruction set.
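As a rough illustration of that bottleneck, consider a guest instruction that adds two 8-bit values and updates status flags in a single step. If the host has no matching primitive, the emulator has to rebuild each flag in software. This Python sketch uses a made-up flag set and is not modeled on any particular real instruction set.

```python
def emulate_add8(a, b):
    """Emulate an 8-bit guest ADD that also updates carry, zero and sign flags.

    On the guest this is one instruction; here it takes several host
    operations (add, mask, compare, test bit) to get the same result.
    """
    total = a + b
    result = total & 0xFF  # keep only the low 8 bits
    flags = {
        "carry": total > 0xFF,        # did the add overflow 8 bits?
        "zero": result == 0,          # is the 8-bit result zero?
        "sign": bool(result & 0x80),  # is the top bit set?
    }
    return result, flags


result, flags = emulate_add8(0xF0, 0x20)
```

Multiply that overhead by every arithmetic instruction in a program and it is easy to see why a few extra host instructions tailored to the guest could pay off.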

Couldn’t agree more.

The article just grazes that point here

And IMHO that sentence (along with the preceding paragraph) misses the point entirely. It’s not about Windows users with Mac hardware.

It’s about the Mac in general transitioning from being a very open and versatile platform adaptable to in principle almost any use (yep, that’s where we ended up once we had left Classic and PPC behind) to a closed platform more and more locked down to a decreasing number of allowed apps by registered and pre-approved developers, tailored to scenarios that have increasingly more to do with entertainment and for-sale media than with actual work, as in the creation of original hardware and software, scientific endeavor, engineering, etc.

That flexible and versatile Mac came with things like out of the box X11 compatibility, GNU tools (incl. absolute essentials like gcc et al.), easy dual-boot capabilities for Linux and Windows, EFI support, virtualization to allow for essentially anything you could desire, being able to recompile and run a myriad of open source tools from the *nix world. That Mac offered tons of stuff that didn’t directly have anything to do with Apple. But Apple realized there was value in allowing for industry standards. Those that were interested loved that you could do all of that on your Mac. And they gave up having to operate three separate boxes. After all, they had a Mac that did all of it. OTOH, those that didn’t care never had to notice it was there in the first place.

It’s not about Windows. It’s about becoming less of a tool to do “computing” and more of a locked down gadget tailored to do exactly what Apple thinks we ought to be doing with the tools Apple thinks we ought to be using. I was hoping the Mac would stay this versatile tool and Apple could have the iPhone be the gadget it totally controls. Those happy to lead their lives on a phone could happily continue to do that, while those of us who need a real computer to get our work done could continue to use a Mac to do just that. But so far, and to my surprise considering the volume of these rumors lately, I have really seen almost nothing related to the ARM transition to indicate that this level of openness and versatility on the Mac will be preserved. There could well be such a route. But so far hardly any words have been spent on that aspect. Because, again, it’s about far more than just Windows on Mac. I’m hoping we’ll soon learn more about that important aspect of such a transition.

Well said, @Simon. Obviously, we don’t know even if Apple is going to move to ARM, much as it seems likely, much less how this will affect the open-source underpinnings of macOS and the Mac experience. It’s unlikely to affect my personal use of the Mac, but I’m already seeing how Apple’s focus on certain markets to the exclusion of others is driving certain customers away.

My son Tristan is a rising senior at Cornell University, majoring in computer science with a focus on machine learning. The problem he faces is that the systems he needs to use rely on Nvidia GPUs, which aren’t compatible with the Mac basically at all anymore. He’s been trying to figure out how to get the GPU he needs for ML work on a Mac, and basically just gave up. Now he’s speccing out what is essentially a gaming PC desktop with an Nvidia GPU (assuming he can ever buy one given that they’re always out of stock, and apparently Nvidia will be releasing a new generation in September anyway). He’ll keep using a Mac for everyday stuff, but he’s already thinking about downgrading his 2017 13-inch MacBook Pro to a new 2020 13-inch MacBook Air since the Mac’s performance is irrelevant if he’s mostly SSHing into a PC anyway.

What I also don’t know is if scientific and engineering groups that have historically relied on the Mac are making a stink about this sort of problem to Apple. We got the Mac Pro only because the high-end video and creative professional worlds made a big deal about how they could no longer use the Mac. And it certainly didn’t hurt that celebrities started to mock the butterfly keyboard. Apple does respond to pressure, albeit slowly and usually without any indication that changes are being made in advance.

I’m looking at this from a strictly business standpoint. Apple has always been an “if you build it, they will come” kind of company. The App Store and Services have been an increasingly successful and significant revenue stream since Steve Jobs first announced it.

Microsoft started selling ARM Surface machines about two years ago, and convinced some hardware manufacturers to build them too. And every one of these machines has been a disaster because MS decided that a major selling point would be that the ARM Surfaces would run existing Windows software and apps. They did not optimize their products to run on 64-bit ARM machines, and did not encourage or incentivize developers to do so. So although the ARM Windows stuff has better battery life, is lighter weight, quieter, etc., everything runs significantly slower, is buggier, and is more likely to crash on them. So they weren’t, and aren’t, selling.

For years, Apple has been very vocal about wanting to integrate Mac and iOS more closely. I am sure that they have been, and are, working with developers to make this transition work. Apple did so when they ditched RISC for Intel. I mentioned in another thread how p.o.’ed I was when, about a month after I bought a brand new 9600 (the tan version), it was announced that it would not run OS X, and that a much cooler looking blue and white new version would have a G3 chip that could run it. I missed out on a lot of upgrades and new products. But I understood why Apple needed to make a change, and why backward compatibility would not be a primary concern for them.

Apple needs developers, especially since revenue from services is increasingly important to their bottom line:

“Apple’s services revenue in fiscal 2019 was $46.3 billion – up from $39.8 billion in fiscal 2018. This segment fared much better than Apple’s products revenue, which was dragged down by a slump in iPhone sales. Total products revenue fell from $226 billion in fiscal 2018 to $214 billion in fiscal 2019.”

And developers need Apple.

With all due respect, Apple could do this at any time, with or without changing CPU architectures.

It is definitely a concern - Apple has been locking down various parts of macOS for several years now - but I wouldn’t assume that using an ARM processor means Macs will just become a desktop iOS platform.

I think it is more likely that Apple will ship a large-format iPad if they want to sell that kind of a product.

I agree with you, @shamino.

Apple probably could do it at any time. But I would claim it would also be much more difficult to do that with the current Intel chipset. The current Mac is at its core still a “PC” based on Intel ISA despite added value from extras like T2. My actual concern here is that moving to a proprietary chipset makes it easier to lock down and rope off. And I’m afraid I would go so far as to say it in fact makes it more likely. I’d be overjoyed to eventually see that I was wrong here, but right now, judging by how Apple has acted these last few years I’m skeptical.

I just stumbled upon Steve Jobs’ WWDC 2005 explanation to developers of the transition process from PowerPC chips to Intel. It took Apple five years, and he emphasized this was because they wanted developers to be confident that the process would be seamless for them, that their current apps would run on Intel chips. He stressed how critical power consumption would be to delivering more advanced products. Most important, he stressed that the transition would be gradual and would be phased in over two years. And he revealed that the new system was compiled to be “cross platform by design,” so that OS X apps could work seamlessly between PowerPC and Intel Macs.

Tim Cook joined Apple in 1998 as Senior VP of Worldwide Operations, and oversaw the entire seven-year transition process. I’ll bet the farm that he’ll try to make the transition to ARM as seamless as possible as well.

And of course, Steve did his usual outstanding job with the presentation:

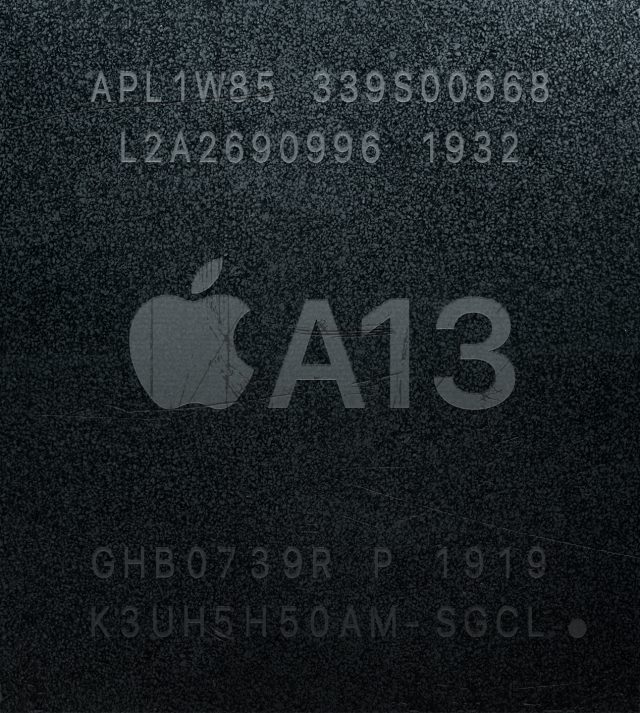

The tendency in every article or discussion about Apple switching to ARM is to look at the A series SoC designs. The A13 Bionic is the latest: a processor that includes 2 high performance cores, 4 efficiency cores, 4 GPU cores, an 8 core neural engine for ML, an Apple image processor (HW accelerated H.264/H.265), an LTE modem, the Secure Enclave, and audio processing. Not to mention the highly advanced power management, where not only each section but also individual transistors can be powered down and up. All this on a chip the size of your fingernail, built on TSMC’s 7nm+ process.

TSMC is readying 5nm production in 2021. Both AMD and Apple are going to be releasing chips made in those TSMC fabs in the next year or two. Intel is nowhere near ready for 5nm; they can barely produce 10nm.

Designing a laptop / desktop SoC is going to be considerably different than designing a mobile device SoC. Apple has the expertise and the ability to do it. But don’t base things on what they have done with the A series chips; rather, look at how fast they advanced in creating those chips, and at the radical leaps in capabilities. The constraints on a laptop or desktop SoC are going to be far less difficult. There’s more power and more physical space, at the least. The cores are going to be very small, smaller than AMD’s or Intel’s, because they don’t need to support all that extraneous legacy x86 junk.

Whatever Apple does, hold onto your seats because it’s going to blow you away when they announce it. They have an amazing opportunity to leap ahead in ways not seen before. If the results are stupendous there won’t be much complaining about ARM compatibility as everyone gleefully scrambles to port their stuff to the new normal.

I don’t know about engineering, but a lot of science has switched from powerful desktops to big or huge linux clusters. The profs in our theory group have gone from maxed out Mac Pros running huge Mathematica and Matlab models to MacBook Airs that talk to the clusters and are easier to carry around.

Interesting article, but one thing I wanted to bring up is that I think the point @das makes about switching foundries is over-simplified:

I certainly agree that flexibility in terms of sourcing chip production is something Apple would like. But moving from one chip manufacturer to another cannot quickly happen in response to a flood. As @stottm has pointed out, TSMC is world-leading in terms of its factories. And if something happened to one producing Apple’s chips, it’s not just a case of sending design files to another supplier. It’s unlikely that anyone else would have the capability.

That’s not to say supplier diversification wouldn’t be a benefit if Apple moves away from Intel. But it would be over a longer timescale. If Apple was unhappy with TSMC, for instance, they could invest in some other foundry and hope they can bring them up to a level suitable for manufacturing their chips. But this would likely take months or years.

I’m curious about this part of the article:

While I’m not well-versed in LLVM, I would be very curious about this. After all, Apple hired the creator of LLVM and has heavily invested in it. The ‘intermediate language’ is partially compiled but independent of the processor target.

There are even tools like McSema capable of “lifting” final binaries into the LLVM IR. (I’ve only used that for testing binaries, not re-targeting which seems possible.)

In short, I wonder if it’s technically possible to do exactly that - make many apps on the Mac App Store immediately available on ARM. (Or whatever Apple calls their new chip… maybe A14M for Mac?)

I am going to be (partially) generous to Apple here.

Apple could and indeed may make it possible to have the same apps and OS run on a ‘Mac’ with an ARM chip and a Mac with an Intel chip. Apple have managed such a transition before as others have referred to and Apple therefore have lots of experience doing this including ‘fat binaries’ and CPU translation engines and so on.

I will also say that such an ARM based Mac will have acceptable if not superior performance.

ARM systems are able to run Linux and will be able to run what looks like macOS and hence Unix. What is likely to be sacrificed is true Windows compatibility à la Boot Camp, although there might be virtualisation options.

I cannot see Apple producing a Mac with both full-blown ARM and Intel chips for anything other than a transition period. Apple might do this for one generation, but not, I feel, for longer than that. Apple or someone else might create a PCIe card with an Intel CPU and the ability to boot and run Windows; this would hark back to ancient times, like when the Apple II had the option of a Z-80 CPU card.

What I do feel is that this will indeed be yet another step on the iOS-ification of the Mac and the further lock down of what you can do.

Whilst there is some argument supporting this in terms of enhancing security I feel it is the wrong direction for the wrong reasons. Linux and even gasp Windows has managed to improve security without going this route and arguably iOS still has plenty of security flaws despite being extremely locked down in comparison.

Sadly I feel it is more down to laziness and penny pinching. Apple have ‘in the name of security’ over the last few years increasingly been stripping out capabilities and especially ‘foreign’ code, i.e. open source. This has been instead of using up-to-date releases of said open source projects. Indeed one could and should expect a company of Apple’s size and alleged abilities to be contributing fixes back to the open source community - a community Apple hugely benefited from for many, many years. Google, a ‘smaller’ company, do this; in fact, we currently have the absurd situation in which Google do a significant amount of bug finding for Apple.

Yes, from Apple’s perspective your ‘average’ home user is not going to know what they are missing, and yes, there are plenty of these types of customers. However, whilst I am now long distant in time from the education world, I feel that Macs and Apple devices are becoming less and less suitable for at least the higher education market and, for similar reasons, for business.

It is definitely becoming less and less pleasant managing Macs.

It would be ironic in the extreme if, ‘just’ after its release, the 2019 Mac Pro, like the 2013 Mac Pro before it, becomes the only iteration of its generation because it is obsoleted by the move to ARM.

No one else seems to have mentioned the possibility, and rumours do not suggest it, but a different route Apple could have taken would have been to add an ARM chip as a co-processor to an Intel-based Mac. Not purely for performance reasons but more like the T2 chip, to add new capabilities. In particular this approach could have been used to add machine learning cores to the Mac and other similar Apple A series chip capabilities. This approach would have ‘enhanced’ the Mac rather than obsoleting it.

The T1 and T2 chips are in fact ARM chips: Apple silicon - Wikipedia

Beyond that, I wait to see what’s announced at WWDC.

FYI:

Regarding ARM-based Mac Pros. The volume issue is real - cost is one thing, dedication of engineering resource another - but why would a Mac Pro need to use an Apple ARM chip? Why not “just” replace the Xeon with something like the Fujitsu A64FX? It’s good enough for Cray.

The chip foundry issue is the problem. Intel is barely capable of fabricating at 10nm, while the A-series processors made by TSMC have been at 7nm for years. TSMC did an early release of 5nm A14 chips to Apple, and I heard they’re working on 4nm.

This is what happened to Motorola, and why Apple switched to Intel.

You can do this on ARM too. Well, maybe not VMware, but Docker and Kubernetes work just fine on ARM (there are, to be sure, some images on Docker Hub that are x86 only, which is kind of annoying, but it’s usually easily fixable). Also, if you need it, Docker can actually run x86 images on an ARM system (or, as it happens, vice versa) courtesy of QEMU.

(I suspect VirtualBox might exist for ARM Linux too; I haven’t checked, mind.)

Strictly speaking I’m not sure that’s true. I think I remember reading that, in fact, the lesser known ARC platform is more popular, because it’s embedded everywhere. (ARC also has an interesting history, for those of a curious persuasion.)

Whatever, ARM is certainly a very popular platform.

I doubt it, actually. Ampere and Marvell (previously Cavium) are both shipping fast, high core count variants of 64-bit ARM that are certainly competitive in terms of overall performance with Intel’s Xeon chips. They might not be as fast per-core, but they make up for that with the large number of cores.

Apple’s 64-bit ARM cores are certainly faster than Marvell’s (I haven’t tried Ampere’s silicon, but I have run things on ThunderX2), and more on a par with Intel’s; if Apple wanted to make a high-end chip with a large number of cores, it could certainly do so, and the result would likely outrun the existing players in this space — not that that would bother them, because it seems unlikely that Apple will compete in the server market, which is what Ampere and Marvell are really aiming for.

For the high core count part of the market, this is the million dollar question, and I suspect we’ll see the answer fairly soon.

At least by some performance measures, current Graviton2 ARM chips outperform Intel’s Skylake and Cascade Lake processors on a per-core basis:

I take issue with some of the assumptions the authors make, but there’s no doubt that ARM-based chippery is capable of impressive performance.

I’d love to see a high core count CPU based on Apple’s performance cores; that would really be something.

That’s good to know. I wasn’t aware of QEMU. Seems promising!

A bit of digging shows that VirtualBox is x86-64 only (older versions supported IA32). It doesn’t seem likely to change, although I do expect something equivalent to show up eventually.

I keep hearing that ARM is really slow at emulating x86-64 due to some unspecified characteristic of its instruction set. That doesn’t really make sense to me on the surface, but the people saying it keep using that claim as a basis for a prediction that x86 apps will not function at all on ARM Macs. I can’t imagine that Apple would settle for that, shipping computers completely incompatible with all pre-existing software, but the people making the argument just stop there. “Emulation would be slow, so Apple won’t do it.” And then they move on, as if the issue is settled.

If the slowness really is an issue, and it is so slow that performance is unacceptable despite how much faster Apple’s ARM cores are than anything Intel has ever made, it seems to me that Apple would be well positioned to supplement the instruction set with a few custom opcodes that would eliminate the problem. With a Rosetta-like software binary translation engine aware of those opcodes, we’d be good to go. Or they could go farther and add a hardware translation layer, such that x86-64 code could execute natively without a software translator, but that seems unlikely to me. Just have the hardware folks focus on making the cores faster (and more efficient, of course).

I was around for both the PPC and x86 transitions. Both Classic and Rosetta were said to be slow relative to native implementations, but my personal upgrade path was such that the new Mac I was using was 3+ years newer than the old one, and that meant that my emulated experience was faster than the prior native one. I expect that most users will be in the same boat, and as such any loss in performance due to emulation will be irrelevant.

I’ve heard that claim too. It’s plausible that there is some problem there (e.g. emulating the fast inverse square root algorithm used on Intel chips on PPC was a nuisance; the Intel code will move from the FPU stack to an integer register, which on PPC necessitates flushing the data to memory and reading it back). I haven’t investigated in any detail whether or not there genuinely is a problem.
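To make that float-to-integer move concrete, here is a minimal Python sketch of the widely published fast inverse square root trick. It is only an illustration, not code from any emulator. The helper names are made up, and the struct pack/unpack round trip stands in for the "write it to memory and read it back" step described above.

```python
import struct


def float_bits(x):
    """Reinterpret the bytes of a 32-bit float as an unsigned integer."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def bits_float(i):
    """Reinterpret a 32-bit unsigned integer as a 32-bit float."""
    return struct.unpack("<f", struct.pack("<I", i & 0xFFFFFFFF))[0]


def fast_inv_sqrt(x):
    """Approximate 1/sqrt(x) for positive x using integer bit tricks."""
    i = float_bits(x)             # float -> integer view of the same bits
    i = 0x5F3759DF - (i >> 1)     # the well-known "magic constant" step
    y = bits_float(i)             # integer -> float view again
    return y * (1.5 - 0.5 * x * y * y)  # one Newton-Raphson refinement


print(fast_inv_sqrt(4.0))  # close to 0.5
```

Each call crosses between the floating-point and integer views of the same 32 bits twice, which is exactly the kind of operation that is cheap on one architecture and awkward on another.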

Even if it’s true, as you say, there are viable workarounds for Apple.

Everybody said the same thing when Java was invented. Then just-in-time compilers were invented that mostly (but not completely, of course) solved that problem.

It is very likely that Apple’s solution, whatever it is, will involve some kind of JIT-compilation of x86 code to ARM, much like the way Transitive’s tech (used by Rosetta) converted PPC code to x86.
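As a rough illustration of the idea (and not a description of how Rosetta or Transitive’s technology actually worked), here is a toy sketch in Python. The two-instruction "guest" language, the Translator class, and all names are invented for the example. Each block of guest code is translated to host code once, cached, and reused on every later execution.

```python
# Toy dynamic translator: guest blocks are translated once, then cached.
# The "guest" instruction set here is invented: ("add", n) and ("mul", n).


def translate_block(block):
    """Translate one guest block into a host-native Python function."""
    lines = ["def run(acc):"]
    for op, arg in block:
        if op == "add":
            lines.append(f"    acc += {arg}")
        elif op == "mul":
            lines.append(f"    acc *= {arg}")
        else:
            raise ValueError(f"unknown opcode: {op}")
    lines.append("    return acc")
    namespace = {}
    exec("\n".join(lines), namespace)  # "emit" the host code
    return namespace["run"]


class Translator:
    """Runs guest blocks by address, translating each one only once."""

    def __init__(self, program):
        self.program = program    # address -> list of guest instructions
        self.cache = {}           # address -> translated host function
        self.translations = 0     # how many times we had to translate

    def execute(self, address, acc):
        fn = self.cache.get(address)
        if fn is None:
            fn = translate_block(self.program[address])
            self.cache[address] = fn
            self.translations += 1
        return fn(acc)


translator = Translator({0: [("add", 2), ("mul", 3)]})
print(translator.execute(0, 1))   # (1 + 2) * 3
print(translator.execute(0, 2))   # (2 + 2) * 3, reusing the cached code
print(translator.translations)    # the block was translated only once
```

The translation cost is paid on first use; hot code that runs repeatedly afterwards executes at host speed, which is why this approach beats a plain interpreter.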

This is more complicated and expensive to develop than a simple interpreter, but it’s not a groundbreaking new concept either. It’s well within Apple’s ability to develop (or license or acquire) this technology.

I believe this no longer holds. The performance jump people experienced in 1995 or 2006 when upgrading from 3+ year-old hardware was huge compared to what we see nowadays, which is the very reason people now upgrade less often.

Emulation will need to be extremely efficient for people’s workflow not to suffer in all but the cases where the system they’re upgrading from is very very old.

I do believe Apple can pull that off, as @Shamino indicates above, but I’m not taking any of that for granted. It will require lots of work.

“I wonder if it’s technically possible to do exactly that - make many apps on the Mac App Store immediately available on ARM”

Apple introduced Bitcode in 2015, so I think it’s technically possible if Apple starts collecting the intermediate code when Mac apps are submitted to MAS. A more in-depth technical review is available here

The penultimate paragraph is worth noting.

Thank you to all for very interesting and insightful discussions.

So basically Apple went from CISC to RISC to CISC and now back to RISC.

I don’t really care much about Windows compatibility on Mac. I do care, however, about software feature compatibility. My fear is that software developers, who will now need to develop and maintain an x86 version for their Windows audience and an ARM version for their Mac audience, may not maintain both versions at the same pace. Office 365, Adobe and others…

I wouldn’t be too concerned about that. Developers haven’t written application software in assembly language for a very long time.

Apps are written in high-level languages like C, C++ and others. These languages are mostly portable across processor architectures. Apps need to be careful about things like word size and endianness, but these are issues that developers have had to deal with for a very long time.
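For readers who haven’t run into these issues, here is a small Python sketch of what "being careful" means in practice. It uses the standard struct module; the value and the read_u32_le helper are made up for illustration. The same 32-bit number has a different byte layout depending on endianness, so portable code states the byte order and field size explicitly instead of relying on whatever the host CPU does.

```python
import struct

value = 0x11223344

# The same 32-bit integer laid out in the two byte orders.
little = struct.pack("<I", value)   # least significant byte first
big = struct.pack(">I", value)      # most significant byte first
print(little.hex(), big.hex())


def read_u32_le(data, offset=0):
    """Read a 32-bit little-endian integer, whatever the host CPU is."""
    return struct.unpack_from("<I", data, offset)[0]


# Reading with the wrong byte order silently gives a different number.
wrong = struct.unpack(">I", little)[0]
print(hex(read_u32_le(little)), hex(wrong))

# With an explicit byte-order prefix, struct uses fixed field sizes,
# so "i" is always 4 bytes and "q" is always 8 bytes.
print(struct.calcsize("<i"), struct.calcsize("<q"))
```

Code written this way behaves the same on any processor, which is why well-maintained apps usually need little more than a recompile for a new architecture.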

Most portability issues these days revolve around the fact that different operating systems use different APIs to provide similar functionality. These issues exist today when trying to make a cross-platform app and those issues will remain the same if Macs should switch to yet another CPU architecture.

But even that isn’t the biggest issue. Quite a lot of cross-platform apps use a single code-base and a portability library (whether developed in-house or third-party) to target the different platforms. So the biggest portability concern will be waiting for the developer of the portability library to make a version for the new platform.

As you mentioned later, Dalvik, like any modern JVM, does a lot of JIT compilation and optimization, resulting in code that is just as fast, and sometimes faster, than equivalent code written natively for the underlying processor in a low-level language like C. I think you’ll find that this “substantial performance penalty” is actually quite trivial, especially for any app where it really matters.

The guys on the Accidental Tech Podcast interviewed Chris Lattner (creator of LLVM) a few years ago (relevant bit is around ten minutes in, but the whole interview is amazing), and he explained the situation in some detail. IIRC, one notable issue is that Intel and ARM don’t use the same endianness. LLVM is not sufficiently expressive to compensate for that. Doing some research now, it seems that ARM is bi-endian, which means it can switch on the fly, but that has to be supported in hardware.

I’d agree with that if Apple’s ARM chips from last year weren’t still faster than anything Intel had to offer, and likely to get even faster still, as TSMC is racing ahead with smaller fabrication processes while Intel has been stuck for years, and many of the world’s most talented microprocessor engineers and designers are flocking to Apple in order to work on the bleeding edge of their fields.

That, in its generality, is of course not true. Just because the very best $2k iPad Pro beats a $1200 MBA in GeekBench does not mean any existing Axx can hold a candle to the kind of CPU performance we find in a modern MP or iMP.

I agree that Intel’s progress has become painfully slow and embarrassingly timid, and I also agree that Apple’s Axx show great potential. But any judgement as to whether Axx can actually serve across the entire Mac line due to alleged vastly superior performance is entirely premature until Apple actually starts talking about their plans, shows hardware and presents realistic benchmark data. IOW any of that talk before WWDC is IMHO really just religious at this point.

Intel and ARM (as deployed by Apple) are the same (little) endianness. [ARM was historically bi-endian, but the newer ARM architecture is essentially little-endian with the ability to handle big-endian data].

FWIW, just to be pedantic, Dalvik was replaced years ago (with Lollipop 5.0, which I believe was in 2014) with ART (Android Runtime). ART was offered as a preview alongside Dalvik in KitKat prior to Lollipop, but Dalvik was scrapped entirely with 5.0. ART is even better than Dalvik; apps are compiled into native code when they are installed, instead of at run time, and run that way.

I hear proponents of JIT compilers make this claim all the time, but I don’t believe it. It doesn’t make any sense how code compiled to byte-code and then JIT-compiled to native instructions could possibly be as efficient as the original source code compiled directly to native instructions.

How else do you explain the fact that Android phones seem to have processors that are much faster than Apple’s, but produce apps with comparable (and often lower) performance?

I refuse to believe that Apple’s hardware engineers are super geniuses capable of creating an ARM-based SoC massively superior to anything Qualcomm’s engineers are capable of producing. Maybe a little better, but not nearly enough to explain the discrepancy between hardware capabilities and user experiences.

This is new to me too.

Just to be clear, Android is still using a JVM-like environment where developers compile their apps to byte-code. The difference between Dalvik and ART you’re describing is simply a matter of when the compilation from byte-code to native code takes place - at installation time instead of at run-time.

Apologies, I overgeneralized. The A13 Bionic scores higher in single core (Geekbench score of 1327 from the 11 Pro) than any Intel chip Apple has ever shipped in a Mac (the i9-9900K in an iMac, scoring 1244).

I don’t see much point in comparing multi-core scores for this conversation. Apple can put as many of their cores as they wish into the custom-designed silicon that will presumably land in a Mac someday. It’s the speed of the individual cores that drives this conversation, and the existence of Intel chips that don’t suit Apple’s needs and therefore aren’t in any Macs isn’t particularly relevant (although it could become relevant if Intel made a particular set of decisions which they’ve never indicated any intention to make).

Cool, thanks for the additional context. I think I knew that at one point, but had completely forgotten.

Just wanted to emphasize that point. “Any Intel chip Apple has ever shipped.” HMMMM…