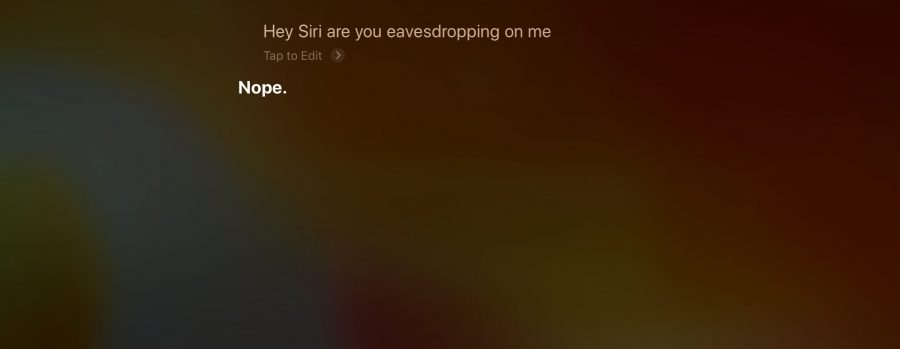

Screenshot by Josh Centers

Apple Workers May Be Listening to Your Siri Conversations

A whistleblower has revealed to The Guardian that, just like Amazon (see “Amazon Workers May Be Listening to Your Alexa Conversations,” 11 April 2019) and Google, Apple pays contractors to listen to Siri recordings for quality control. Because it’s easy to trigger Siri accidentally, especially with the Apple Watch and HomePod, contractors often hear private conversations, such as couples having sex, doctors conversing with patients, and even drug deals being carried out. Apple does not publicly document this practice and has increasingly traded on its progressive views regarding user privacy. TidBITS Security Editor Rich Mogull said: “Apple needs to end this practice. Now. It is antithetical to their core privacy principles. Especially using subcontractors.”

Well, they’re not doing anything to fix her when I tell her she’s being stupid!

I’d like to see all the voice assistant companies doing just this—trying to establish how accurate the assistant was by analyzing the user’s subsequent commands or exclamations (or obscenities). Perhaps they already are behind the scenes, but Siri, Alexa, and Google Assistant could still be apologetic when they screw up and the user yells at them.

I often expect a response when I yell at her, but I’ve never gotten one.

Diane

I’m sure that a contractor’s ears may be burning from time to time when I use Siri, especially on my Watch 3. (Unlike my iPhone and my Macs, the Watch begs for voice control.) But now that I know it could in fact be monitored, I have a dilemma: do I blow off the bit of pent-up energy caused by a boneheaded Siri response, or do I now have to take moral injury into consideration?

One thing that could help is for Apple (and the other voice assistant companies) to tell their users to say something like “Siri, that is stupid,” and only then would the previous dialog be examined. This would go some way toward addressing the privacy concerns, while giving the quality folks better cues to find things that need fixing.