With the recent fuss about Apple contractors listening to some of our Siri conversations (see “Apple Suspends Siri’s “Response Grading” Eavesdropping,” 2 August 2019), I couldn’t help but wonder why we users can’t perform this task. If you’re anything like me, you already talk back to Siri when it makes mistakes or triggers unexpectedly.

Apple never said precisely what its contractors were doing, but TechCrunch’s Matthew Panzarino, whom Apple favored with the exclusive explanation, described the “grading” process like this:

This takes snippets of audio, which are not connected to names or IDs of individuals, and has contractors listen to them to judge whether Siri is accurately hearing them—and whether Siri may have been invoked by mistake.

His description brings to mind Apple’s famous “1984” ad, and its endless rows of gray-clad workers, except they’re all wearing headphones, listening to Siri audio snippets and pressing one of three buttons for each: Correct, Incorrect, Inadvertent Invocation. It’s a dystopian image, and we can only hope the actual job is less soul-sucking.

Whether or not the scene I’m visualizing has any overlap with reality, I see no reason that we users can’t provide the feedback Apple needs to improve Siri’s accuracy. Here’s my proposal.

Opt-in, for Starters

Regardless of anything else, Apple would need to make this proposed Siri feedback program opt-in, by asking users if they want to enable Siri feedback during initial setup and via a toggle switch in Settings > Siri & Search.

That’s just polite, given that inadvertent invocations can record speech that the user never intended to be public. Apple has already said it will make the current human-driven grading program opt-in.

Feedback via Siri Shortcuts or Buttons

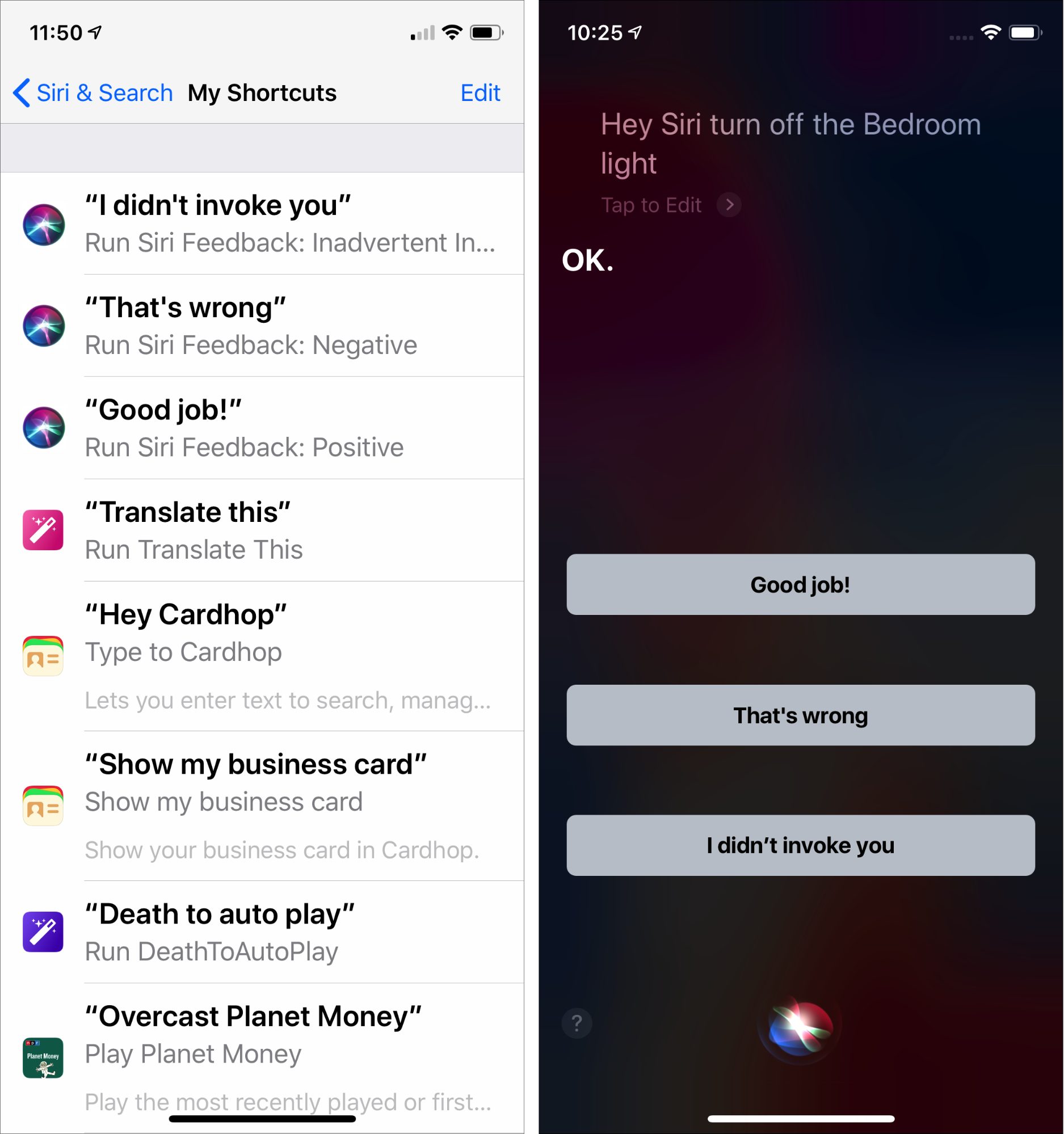

When enabled, the Siri feedback switch would create three Siri shortcuts with the default phrases of “Good job,” “That’s wrong,” and “I didn’t invoke you.” The Settings screen should provide usage instructions as well. You’d be able to give Siri feedback after any response, either via voice or with buttons for devices with screens.

As with other Siri shortcuts, you’d be free to change the phrase however you like—I imagine the inadvertent invocation would become “Shut up!” for many people and there would be more colorful replacements for “That’s wrong.”

Obviously, there should be no requirement that you provide feedback on any particular interaction with Siri, so the “Good job” response probably wouldn’t get that much use. After all, if Siri works properly, there’s little reason to offer praise—you’re not training a dog. I’d reserve such feedback for when Siri surprises me with an accurate response to a difficult query.

I suspect the other two corrections—for an incorrect response or an inadvertent trigger—would be far more commonly used. I already have trouble restraining myself from admonishing Siri when it misunderstands my commands, and it drives me nuts when Siri pipes up for no apparent reason whatsoever. OK, I admit it, I’d be among the people changing “I didn’t invoke you” to “Shut up!”

Plus, when you correct one of these Siri mistakes, Siri should apologize for messing up. A simple “I’m sorry” would go a long way toward reducing user annoyance.

Ideally, Apple would enhance Siri so it could keep listening for feedback and follow-up queries after it responds to you, allowing you to avoid prefacing the feedback phrase with “Hey Siri” or a button press. Google Home already does this with its Continued Conversation feature, which listens for 8 seconds after it responds, and Amazon’s Alexa listens for 5 seconds after responding with its Follow-Up Mode.

I don’t know if Apple could get the results it needs by just taking user reports at face value or if it would still need to send some percentage of reports to humans for additional verification.

Don’t Take That Tone with Me

So far, what I’ve described is largely mechanical, and well within the capabilities of both Apple and users. I’d happily start correcting Siri tomorrow if Apple added such a feature.

But I’d like to see Apple’s vaunted engineers go further and start recognizing tone of voice and emotional state. I assume that a machine-learning algorithm could be taught to distinguish between a user’s normal tone of voice and volume and when they speak more abruptly and loudly. I’m sure lots of us raise our voices in irritation when Siri interrupts a conversation or completely biffs what seems like a simple command.

“Hey Siri, play a Beatles song.”

“NO. Not Ann Peebles! The BEATLES!”

With such recognition—and assuming the Siri feedback switch is enabled—Apple could detect signals that Siri had messed up without any extra interaction from the user. The accuracy level would undoubtedly be lower because your irritation might have resulted from the cat having just brought in a dead mouse rather than anything Siri did, but it would still be better than nothing.

More Feedback for Less Cost

Matthew Panzarino said that Apple’s grading program likely evaluated fewer than 1% of Siri’s daily requests. In 2015, Apple noted Siri was handling 1 billion requests per week, which works out to 143 million requests per day. That number has undoubtedly skyrocketed since 2015, but let’s go with it.

How many people would Apple need to hire to process even 1 million requests per day—a round number in that “less than 1%” range? Assuming someone could process 3 requests per minute on average, and keep that up for a full 8-hour day, Apple would need at least 700 workers. So unless Apple is really processing far fewer requests than “less than 1%” implies, it seems likely that the company has been employing thousands of people to improve Siri’s accuracy.
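For anyone who wants to check the arithmetic, here is a minimal Python sketch of the estimate. The function name `graders_needed` and its parameters are my own invention for illustration; the inputs are just the figures quoted above (1 billion requests per week, 1 million graded per day, 3 requests per minute, 8-hour days), not anything Apple has published about staffing.

```python
import math


def graders_needed(requests_per_day, requests_per_minute, hours_per_day=8):
    """Whole number of full-time graders needed to clear a daily workload.

    Hypothetical helper: assumes every grader works at the same steady
    rate for the whole shift, with no breaks or overhead.
    """
    per_grader_per_day = requests_per_minute * 60 * hours_per_day
    return math.ceil(requests_per_day / per_grader_per_day)


# 2015 figure: 1 billion requests per week, spread evenly over 7 days.
daily_siri_requests = 1_000_000_000 / 7

# The round 1 million graded requests as a share of that daily total.
share_graded = 1_000_000 / daily_siri_requests

print(f"Share graded: {share_graded:.1%}")              # about 0.7%
print(f"Graders needed: {graders_needed(1_000_000, 3)}")  # 695
```

The result, 695, is in line with the round figure of 700. Because the grading rate is a parameter, it is easy to see how sensitive the headcount is to that one guess: a slower rate pushes the number up quickly.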

Replace those people with millions of Siri users, and I’d suggest that Apple would get vastly more feedback that was potentially more accurate and would save boatloads of money. Besides, this is the sort of thing computers should do, not people. That job must eat your brain.

How about you? Would you sign up to give Siri feedback if that were an option? Let us know in the comments.

Too complex. First, if Siri is wrong, people will usually issue a second command right after the first command.

Siri could use an immediate second (similar) command to understand that the first command failed.

Another is if someone edits something Siri did. For example:

We could still use an opt-in mechanism to send the information back to Apple, but we no longer have to manually grade Siri. Siri knows when she misbehaves.

We could use a similar mechanism for accidental invocations. If Siri is invoked accidentally, Siri should realize this when a follow-up command is either nonsense with no follow-up, or there is no other command issued. Siri could then just send the invoking sound (or mechanism) with nothing else back to Apple.

It would better protect privacy and give Apple a higher percentage of data that’s relevant to work with. The chaff would already be separated from the wheat.

In addition to what @david19 suggests, I’d like to simply be able to correct a single word while dictating to Siri. Often, when sending messages via Siri, it will get one word very wrong, and I have to dictate the entire message again (thankful for the “change” command!). Invariably, Siri will get it wrong again. Annnnnnd AGAIN. Whereas, if I could say “change Beckons to because” when it starts a message “Beckons you haven’t messaged me back, I’m going to assume you’re not home”, it sure would be easier on me (while driving, usually) and perhaps help Siri’s future accuracy (I never use “beckons”, but start messages with “Because” a lot).

Additionally, I’d like to be able to review my own prior Siri submissions. I am required to use a Bluetooth headset, due to hearing impairment… and yet I have zero idea how bad my headset might sound to Siri! I know they go bad, I’ve had them go bad, though friends and clients aren’t complaining about call quality as of late. Also, being in my car, with the AC going (iPhone magnetically attached to a mount right by an air vent), is that affecting Siri? Is the microphone on my iPhone obstructed or dirty? How would I know? I have no mechanism to test such physical inputs. (I have used speakerphone and Voice Memos to record myself; always seems crystal clear to me, but that’s usually under good environmental conditions.)

The fact that Apple saw fit to potentially allow others to hear my private requests but doesn’t see fit to provide me the tools to help myself is beyond disappointing. This company needs to re-discover the basis of “The Power To Be Your Best.”

I would opt out, for two reasons. The first is privacy concerns. Based on recent history, anything can be found out. The second reason is the same reason I took the dealer plates off my car the minute I got home. I’m not compensated for the service provided, whether it’s advertising or free tech support.

I understand we all would like to improve the technology we own and use. But Apple hasn’t offered compensation or anything else for the data it has already received from me, so the incentive to provide even more isn’t there. Even the (dubious) incentive of improved technology and software that I will undoubtedly eventually pay for in upgrades isn’t really enough.

I too have longed to be able to correct Siri. I’d like to be able to spell out a word it consistently mishears, for example. I think a quickly delivered ‘No Siri’ should prompt a correction request.

That’s basically what I mean with the “That’s wrong” command. Obviously, you’ll issue another command after that to accomplish whatever the first one was intended to do, but you have to say something specific to mark the first command as a mistake. I often say one thing, particularly when starting music on the HomePod, and then change my mind and issue another command right away.

But that reminds me, I forgot to put in something about how Siri should apologize for mistakes. Off to add that.

If you edit within the Siri screen, I could see this working, but I often see Siri make mistakes that aren’t editable there. See:

That feels like a really hard machine-learning problem to me, since Siri has to accept a wide variety of things people say as commands. I can’t even imagine how you’d identify something as “nonsense.”

Now this is fascinating. Microsoft has now been caught having humans listen to Cortana recordings, but there are more details about what the people are actually doing (and how much they make).

Errors could be detected with a time limit (say 5 seconds) and a comparison between the first and second commands. If the two commands are similar, you can assume the second command is a correction of the first. Or, maybe if cussing is involved in that second command.
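A rough Python sketch of that heuristic might look like the following. Everything in it is an assumption for illustration: the `Command` type, the 0.6 similarity threshold, and the tiny expletive list are made up, and the standard library’s `difflib.SequenceMatcher` stands in for whatever comparison a real speech system would use. Only the 5-second window comes from the suggestion above.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher

WINDOW_SECONDS = 5.0         # the "say 5 seconds" time limit
SIMILARITY_THRESHOLD = 0.6   # assumed cutoff, would need tuning on real data
EXPLETIVES = {"dammit", "damn"}  # placeholder list, purely illustrative


@dataclass
class Command:
    text: str         # transcript of what was heard
    timestamp: float  # seconds since some reference point


def similarity(a, b):
    """Character-level similarity between two transcripts, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def looks_like_correction(first, second):
    """Guess whether `second` is a retry of a failed `first` command."""
    elapsed = second.timestamp - first.timestamp
    if elapsed < 0 or elapsed > WINDOW_SECONDS:
        return False
    # Cussing inside the window counts as a signal on its own.
    words = {w.strip(".,!?").lower() for w in second.text.split()}
    if words & EXPLETIVES:
        return True
    return similarity(first.text, second.text) >= SIMILARITY_THRESHOLD


first = Command("play a Beatles song", 0.0)
print(looks_like_correction(first, Command("play a Beatles song please", 3.0)))
print(looks_like_correction(first, Command("what's the weather tomorrow", 3.0)))
```

A plain string comparison like this would not catch the case where someone simply changes their mind and asks for something similar, which is why an explicit correction phrase may still be needed.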

I guess we could start the second command as “Wrong Siri” rather than “Hey Siri.”

You’re right that this may be difficult with third-party apps and shortcuts, but most of the work Siri does is with Apple apps, and Apple controls the OS. Apple should be able to tell if Siri creates an entry in your calendar or reminders, and that the user goes into calendar or reminders and edits the very entry that Siri added.

This would be the most difficult part. However, if I want to use Siri, and Siri fails, I will usually either correct Siri with a second command or manually do the task myself. If Siri is unable to understand a command, and I don’t either issue a new Siri command or do something on my phone, it’s a pretty good indication that I wasn’t trying to use Siri.
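Written out as a decision rule, that reasoning could be sketched in Python roughly as below. The `Invocation` fields and the 30-second follow-up window are hypothetical, chosen only to make the logic concrete; nothing here reflects how Siri actually records events.

```python
from dataclasses import dataclass
from typing import Optional

FOLLOW_UP_WINDOW = 30.0  # assumed number of seconds to wait for a follow-up


@dataclass
class Invocation:
    understood: bool  # did Siri parse a usable command?
    seconds_to_next_siri_command: Optional[float]  # None if there was none
    seconds_to_next_phone_action: Optional[float]  # None if the phone went untouched


def probably_accidental(inv, window=FOLLOW_UP_WINDOW):
    """True when nothing suggests the user was actually trying to use Siri."""
    if inv.understood:
        return False

    def within(delay):
        return delay is not None and delay <= window

    # A retry by voice or a manual action on the phone both imply intent.
    followed_up = within(inv.seconds_to_next_siri_command) or within(
        inv.seconds_to_next_phone_action
    )
    return not followed_up


print(probably_accidental(Invocation(False, None, None)))  # no follow-up at all
print(probably_accidental(Invocation(False, 4.0, None)))   # user retried by voice
```

Only invocations flagged this way would need to be sent back, and only the triggering sound at that.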

I’ve worked on a lot of programs, and one thing I’ve learned is that users won’t give you feedback even if it’s fairly simple to do. They have work to do, and they’re not going to interrupt their workflow to help you out.

Many hotels want you to rate your experience when you leave. They’ll give you a one- or two-question survey. All you have to do is select from 1 to 5 how they did. There’s even a box on the counter to put that form in. How many people actually fill it in?

Or, you call customer service, and there’s a recording that asks you to stay on the line after you finish with the customer service agent to rate how they did. How many people stay on the line?

I worry that a program that depends upon users to let Apple know when Siri fails won’t work — even if it’s simply that you say Bad Siri! when Siri goofs.

It depends on how easy it is. There’s a company called FeedbackNow that has done really well with providing simple feedback buttons that let you rate things in the real world. I gave TSA in Newark a low rating on our recent flight to Switzerland since they were slow, confusing, and annoying. But the bathrooms in the Geneva airport got a good rating, and I remembered to take a photo that time. So if you make feedback systems easy enough, and available at the right time, I think people will use them.

“That job must eat your brain.” But it’s a job.

I have learned to HATE Siri. She cannot understand me and I have no particular regional accent or difficulty with others understanding me. I end up swearing like a sailor at Siri when she continues to botch my commands. ANYTHING that would make it work better for me would be an improvement!

If humans are eavesdropping on the other end, I hope they are at least making a note of every time I say “Siri, you’re being stupid” or “Siri, you’re not being helpful.”

I will often say “Thank you” to Siri if she does what I want, sometimes without even thinking about it. That could be your default “correct” phrase instead of “good job.”

My life!! Some days are wonderful.

Others are like you said, or even worse, when I say Hey Siri, remind me to check the lock when I get home….

… and she responds Ok I’ll remind you to check

It just goes downhill from there

Diane

I have a speech disability and, unfortunately, Siri doesn’t understand a word I say. It’s almost amusing when I think of how wrong Siri has gotten me at times or has totally failed to reply at all; it’s almost as if she was embarrassed by not understanding. It would be nice to be able to tell Siri what she (or it) got wrong, but that HAS to be done in writing because otherwise she wouldn’t understand and it would be like the snake eating its own tail!

Funny thing, I do that, too!

My husband and I would definitely like to find a way to let Siri know when it has been improperly invoked by his voice. It is regularly invoked by my husband’s voice on both of our iPhones, and sometimes on our iPads, too. It happens up close and far away, and sometimes even virtually. It happens when the things he says are not even close to “Hey Siri!” It has to stop!

On the other hand, frequently, Siri can’t hear or understand me to save my life. I would love to have the option to rate Siri’s accuracy (much like we are asked to rate the usefulness of voicemail transcription or Facebook translations), on a case-by-case basis, not all the time.

It would be really great if Apple would obtain Nuance’s speech recognition software…

We have our ups and downs with Siri, too. One thing I still haven’t figured out is why Siri doesn’t invoke when my wife says “Hey Siri” but always invokes when I say it. Could I train Siri to recognize different voices?

Does Siri have a voice recognition function, so that it only invokes when it detects its master’s voice?

I thought Siri was specific to your voice on your phone? My SO and I don’t trigger each other’s phones.

Diane

Interesting thought. That would explain it, and it raises four questions:

How does Siri learn my voice? I don’t recall any training session.

Could we actively train Siri to listen to my wife’s voice? My wife’s iPhone is set up with my Apple ID, the only one we want to have.

Could I retrain Siri on my voice and how?

Does that imply that the voice recognition is Apple ID based, which means that on any shared device, like an iPad at home or a HomePod for that matter, only one person in the household is able to trigger Siri?

There has long been some training of Siri for the Hey Siri feature, but I think that’s it.

There is a training session when you set up Hey Siri, where you say “Hey, Siri!” and a few other things several times.

Looks like Apple’s contractors were processing about two requests per minute. So Apple would need even more people.

So here’s my question about this idea: If Apple did this, would their competitors hire a bunch of cheap labor to input a bunch of inaccurate corrections in order to make Siri worse?

Fair question, and not one I’d considered. That said, there are lots of ways that one company could use another company’s public feedback mechanisms in a sort of denial-of-service way, and I haven’t heard of that happening before. It’s probably (a) not worth the effort and (b) a dangerous tactic that could result in problematic escalation.

I’m just wondering if this isn’t part of why it’s not already happening. This seems more vulnerable to such an attack than other mechanisms, as it’s unlikely that most submissions will be reviewed (or it kind of defeats the purpose).

I doubt we’ll ever know, but it seems like too juvenile a behavior for real companies to engage in. The negative press to the attacker if it leaked (and it would leak, because of the low pay involved with the manual effort of polluting a data set) would vastly overshadow any possible benefits. And any company that thought its feedback mechanism was being polluted would just put mechanisms in place to eliminate the pollution or would ignore the feedback info entirely. So the only downside to the victimized company would be a slight cost or loss of legitimate feedback among the noise.

Voice Control in iOS 13 and Catalina will let you do this! From my early testing, you have to be dictating using Voice Control, not Siri dictation (from tapping the mic button on the keyboard).

I’ve got both betas. Can you tell us more and how to actually do it?

I know it is possible to do a lot more including corrections with Dragon Dictate but the learning curve is a bit steep. And it is expensive. But used by a professional every day the investment makes sense.

Turn on Voice Control in Settings > Accessibility > Voice Control in iOS, and in System Preferences > Accessibility > Voice Control in macOS.

Then, on the Mac, click the Commands button in that preference pane, and scroll down until you get to all the Text commands. There are a ton. I’ve had very little time testing, but from what I can tell, they work the same on both operating systems.

So you’d get a text area open while Voice Control was on, and then say something like:

Four score and seventeen years ago change seventeen to seven our fathers brought forth on this this continent comma

and so on and so on. My initial test of Voice Control was launching TextEdit, creating a new document, reading the first line of the Gettysburg Address into it, and then saving and naming the document, all with my voice. It was brilliant.

I’ve long said that what I want is a “Dammit, Siri!” command. Whenever you say “Dammit, Siri” it should figure out what the last thing it did was and never do that in response to that input ever again.

Perfect! The other night I was trying to text “you need a backup app” to my boyfriend (his NBC radar wasn’t working) and Siri replied “your message says “do you need a bath?”” - twice!!

Diane

You can actually use Hey Siri to turn Voice Control on and off.