Work with Text in Images with TextSniper and Photos Search

For as long as I can remember, there has been a categorical split between text and graphics. Speaking broadly, you can search, copy, edit, and otherwise manipulate text by the character, word, sentence, paragraph, or document. On the other hand, although graphics aren’t immutable, you can generally deal with them only at the pixel level (though some vector formats allow object-level manipulation).

But of course, it’s commonplace for text to appear within graphics files. It may be perfectly readable, but you can’t select it, copy it, or do anything else with it as text. Those characters are just collections of pixels.

I’ve started using a pair of apps that blur this text/graphic distinction in a helpful way. TextSniper, from Andrejs and Valerijs Boguckis, promises to perform optical character recognition (OCR) on anything you can draw a rectangle around, which in essence lets you copy text from onscreen images of any sort. And Alco Blom’s Photos Search offers both Mac and iOS apps that perform OCR on text found in photos in your Photos library, enabling you to find images by the text they contain and copy that text out. Both work well, within the constraints of OCR engines, and provide welcome features.

TextSniper

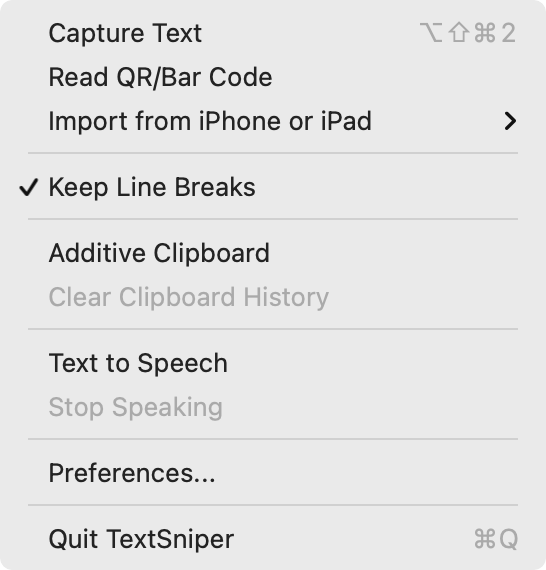

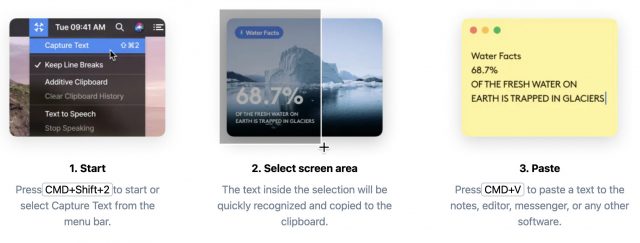

TextSniper is an elegant little utility for the Mac. Its core function, as I noted, is to perform OCR on any part of the Mac screen on which text appears, copying the selected text to the Clipboard for pasting wherever you want. You can think of it as taking a screenshot of just the text in an image. Choose Capture Text from its menu bar menu or press its keyboard shortcut, select an area of the screen that contains text, and then paste the text into a document. It works like a charm.

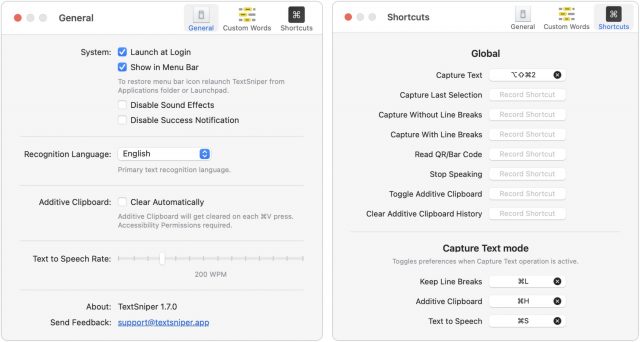

You don’t have to replace the contents of the Clipboard on every copy. An Additive Clipboard option lets you keep adding bits of text to the Clipboard, which could be particularly useful when extracting the text from a video or slide presentation. If you want more feedback about what you copied, a Text to Speech option reads the copied text. For those who work in fields with domain-specific jargon, there’s even a preference screen for adding custom words to the OCR engine’s standard lexicon.
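The difference between a normal copy and the Additive Clipboard option comes down to replacing versus appending. Here is a toy Python model of that behavior; it is not TextSniper’s code, and both the `Clipboard` class and the newline used to separate snippets are assumptions for illustration.

```python
class Clipboard:
    """Toy clipboard model for illustration; not TextSniper's implementation."""

    def __init__(self) -> None:
        self.contents = ""

    def copy(self, text: str, additive: bool = False, separator: str = "\n") -> None:
        if additive and self.contents:
            # Additive mode: keep what is already there and append the new snippet,
            # joined by a separator (a newline here, which is an assumption).
            self.contents = self.contents + separator + text
        else:
            # Normal mode (or an empty clipboard): the new text replaces the old.
            self.contents = text


clip = Clipboard()
clip.copy("Slide 1: Introduction")
clip.copy("Slide 2: Results", additive=True)
# clip.contents is now "Slide 1: Introduction\nSlide 2: Results"
```

Capturing a series of slides this way builds up one block of text you can paste in a single step, rather than switching back and forth for each slide.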

TextSniper offers a few conceptually related features as well. It can read QR codes and barcodes, putting the detected text on the Clipboard. (I’d like to see an option to have it display a notification that, when clicked, would open detected URLs in your default Web browser, just as QR code scanning does on the iPhone.) It can also tap into macOS’s Continuity Camera feature to use an iPhone or iPad to take a photo, scan a document, or add a sketch (see “How to Take Photos and Scan Documents with Continuity Camera in Mojave,” 27 September 2018). You can invoke all these features with custom keyboard shortcuts you define.
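A notification like the one wished for here would first have to decide whether the text a code decodes to is actually a Web link. A minimal Python sketch of that check, using the standard library’s `urllib.parse.urlparse`; the `detected_url` function is hypothetical and not part of TextSniper, and a real app would then hand the result to the browser (in Python, for instance, via `webbrowser.open`).

```python
from typing import Optional
from urllib.parse import urlparse


def detected_url(payload: str) -> Optional[str]:
    """Return the decoded QR/barcode text if it looks like a Web URL, else None."""
    candidate = payload.strip()
    parsed = urlparse(candidate)
    # Require an http(s) scheme and a host; Wi-Fi configs, plain text,
    # and product numbers fall through to None.
    if parsed.scheme in ("http", "https") and parsed.netloc:
        return candidate
    return None


print(detected_url("https://tidbits.com/"))                    # https://tidbits.com/
print(detected_url("WIFI:S:HomeNetwork;T:WPA;P:secret;;"))     # None
```

Anything that fails the check would simply stay on the Clipboard as text, as TextSniper does today.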

Although TextSniper runs in both macOS 10.15 Catalina and macOS 11 Big Sur, it can recognize only English in Catalina. In Big Sur, it recognizes seven languages—English, French, German, Italian, Portuguese, Spanish, and Chinese—and the developers tell me that it may work with other Latin alphabet languages but have issues with accented characters. It works natively on M1-based Macs.

I’ll admit that it took me a while to break free of that age-old split between text and graphics, but if you ever find yourself retyping something or living with a screenshot when what you really want is the text inside, give TextSniper a try. It costs $6.99 for a single Mac or $9.99 for three Macs when purchased directly from the developer (TidBITS members save 25%), and it’s available in the $9.99-per-month Setapp subscription service. You can also get it for $9.99 from the Mac App Store.

Photos Search

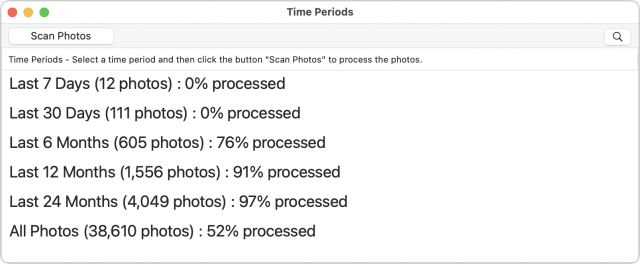

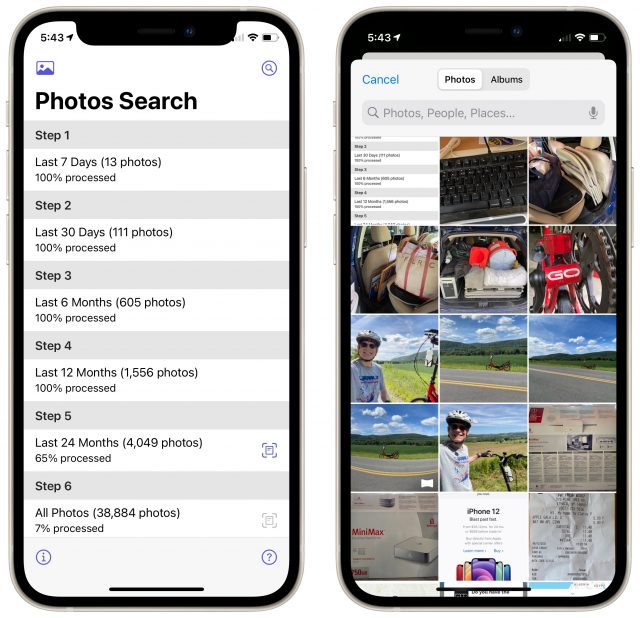

Alco Blom’s Photos Search relies on the same core concept as TextSniper, but it gives you a different superpower. Running in either macOS or iOS/iPadOS, Photos Search scans the contents of your Photos library and performs OCR on all the text it finds. Once it’s done—and it will take a long time to index a large library—you can search for photos that contain specified strings of text.

The interface for scanning different time periods is a little clunky, but it gives you control over when Photos Search hammers the CPU while doing OCR on images, particularly on the iPhone, where Photos Search can scan only while it’s the current app. Many people are likely to want to extract text from recent photos, so it makes sense to provide the option to scan just the last week or month of photos, which goes quickly. On the Mac, just select a time period and click Scan Photos.
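Scanning by time period amounts to running the expensive OCR step only on photos in a date window that haven’t already been indexed. Here is a Python sketch under stated assumptions: photos are modeled as hypothetical `(id, capture date)` pairs, the index is a plain dictionary, and `ocr` stands in for whatever recognizer the app really calls. It is not Photos Search’s code.

```python
from datetime import datetime, timedelta
from typing import Callable, Dict, List, Tuple

# Hypothetical photo record: (photo identifier, capture date).
Photo = Tuple[str, datetime]


def scan_period(photos: List[Photo], index: Dict[str, str], days: int,
                now: datetime, ocr: Callable[[str], str]) -> int:
    """OCR only photos from the last `days` days that aren't indexed yet.

    Returns how many photos were newly scanned.
    """
    cutoff = now - timedelta(days=days)
    scanned = 0
    for photo_id, taken in photos:
        if taken < cutoff or photo_id in index:
            continue  # outside the chosen period, or already processed
        index[photo_id] = ocr(photo_id)  # the slow, CPU-heavy step
        scanned += 1
    return scanned
```

Because already-indexed photos are skipped, widening the period from a week to a month only does new work for the older photos. Deleting an entry from `index` makes that photo eligible for scanning again, roughly the role of the Mark as Unprocessed option.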

In iOS, tap the icon to the right of a time period to scan all the images from that time period. You can also tap the icon at the top left of the screen to select and process a single image right away.

Once Photos Search has scanned a sufficient number of images, you can start searching for text in them. This turns out to be a remarkably useful feature. The first time I used it in the wild was when I was at a doctor’s appointment and was asked for my COVID-19 vaccination card. It’s an awkward size and would be difficult to replace, so I keep it in a safe place and just show people a photo of it. But when I took the photo, I didn’t think to title it, favorite it, or put it in a special album. I knew when my last shot was, roughly speaking, but rather than scroll through all my images since then, I used Photos Search to pull up all recent images containing the word “COVID.” It was magic. More generally helpful have been searches for text that appears in screenshots—I take a lot of screenshots—because screenshot text is so clear and easy for Photos Search to recognize.

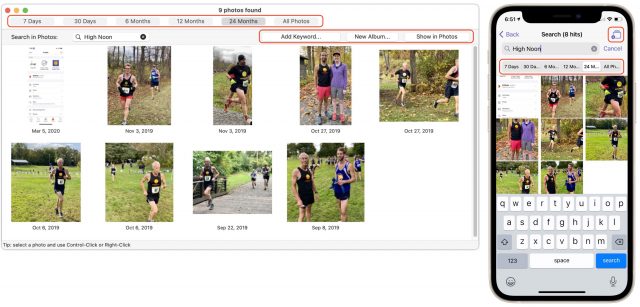

Not all searches work as well. For instance, I’ve taken many race photos showing members of my High Noon Athletic Club wearing our new team jerseys. The club name is prominent on the jersey, but it’s curved over the sun logo, and many of the pictures are slightly fuzzy because the iPhone is weak at sports photography. Photos Search found no instances of “High Noon” on the new jerseys, but it did better with our old logo, where the words “High Noon” are straight. (That’s what it found in the screenshots below.) With the new logo, I can get somewhat better results by searching for only one of the two words. Alco Blom tells me that the inability to recognize curved text is a limitation of Apple’s Vision framework, so let’s hope Apple improves it in the future.
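Why might one word work better than the full name? One plausible explanation: a phrase search needs both words recognized correctly and adjacent, while a single-word search tolerates a misreading of the other word. The toy Python illustration below uses made-up OCR output; the `matches` function is hypothetical and is not how Photos Search necessarily matches text.

```python
def matches(recognized: str, query: str, any_word: bool = False) -> bool:
    """Case-insensitive match of a query against OCR output (illustrative only)."""
    # Collapse case and whitespace so line breaks in OCR output don't matter.
    text = " ".join(recognized.lower().split())
    words = query.lower().split()
    if any_word:
        # Succeed if any single query word appears (as a substring) in the text.
        return any(word in text for word in words)
    # Otherwise require the whole phrase, words adjacent and in order.
    return " ".join(words) in text


ocr_output = "HIGH N0ON ATHLETIC CLUB"  # made-up misread: zero instead of O
print(matches(ocr_output, "High Noon"))                 # False
print(matches(ocr_output, "High Noon", any_word=True))  # True
```

Substring matching is deliberately loose here, so “high” would also match “highway”; a real app might match whole words instead.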

Even incomplete search results can help you jump to appropriate parts of your Photos library for manual browsing. If there are too many hits, you can also limit the results to specific time periods using buttons at the top.
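Restricting results to a time period is a date filter layered on top of a text match. A minimal Python sketch, assuming a hypothetical index that maps each photo ID to its capture date and recognized text; the IDs, dates, and strings are invented for the example.

```python
from datetime import date
from typing import Dict, List, Optional, Tuple

# Hypothetical index: photo ID -> (capture date, recognized text).
Index = Dict[str, Tuple[date, str]]


def search(index: Index, query: str,
           start: Optional[date] = None, end: Optional[date] = None) -> List[str]:
    """Return IDs of photos whose recognized text contains `query`,
    optionally limited to photos taken between `start` and `end` inclusive."""
    needle = query.lower()
    hits = []
    for photo_id, (taken, text) in index.items():
        if start is not None and taken < start:
            continue
        if end is not None and taken > end:
            continue
        if needle in text.lower():
            hits.append(photo_id)
    return sorted(hits)


index = {
    "card": (date(2021, 4, 10), "COVID-19 Vaccination Record Card"),
    "shot": (date(2021, 8, 2), "Screenshot: COVID-19 case counts"),
    "race": (date(2021, 6, 5), "HIGH NOON ATHLETIC CLUB"),
}
print(search(index, "covid"))                           # ['card', 'shot']
print(search(index, "covid", start=date(2021, 7, 1)))  # ['shot']
```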

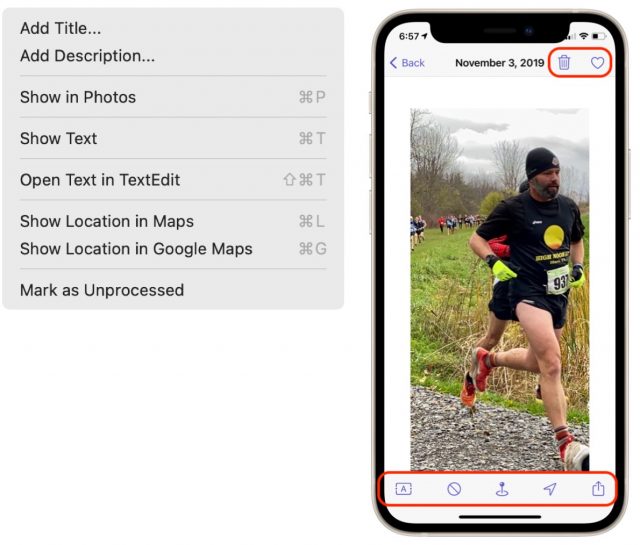

Once you’ve found images, double-click one to view it in Photos on the Mac or tap it to view it by itself in the iOS version. The Mac version offers buttons that let you add keywords to selected photos and put selected photos in a new album, whereas the iPhone version provides only a button to create a new album.

Using the Photo menu on the Mac, or the toolbar buttons at the bottom of the iPhone screen, you can avail yourself of other options. You can view (and copy) the recognized text, open it in a new TextEdit document (Mac only), or view the photo’s location in either Apple’s Maps or Google Maps. A Mark as Unprocessed option lets you remove an image from the index, which would enable you to rescan it after Apple updates the Vision framework, for instance. The iPhone version also lets you delete an image or mark it as a favorite using buttons at the top.

Other iOS-specific features include a pair of extensions that provide better integration with other apps. A Share extension lets you extract text from photos in other apps without adding them to your Photos library, and a Photos Editing extension allows you to extract the text from an image while editing it in Photos, saving you a trip to Photos Search.

Photos Search is available only from the Mac App Store for $12.99 for a bundle of the Mac and iPhone/iPad versions, or you can get the iOS version by itself from the App Store for $4.99.

What about Live Text?

The elephant in the room, of course, is Apple’s Live Text feature, promised for macOS 12 Monterey on M1-based Macs and iOS 15 on devices with at least an A12 Bionic chip (see “Ten Cool New Features Introduced at WWDC 2021,” 7 June 2021, and “The Real System Requirements for Apple’s 2021 Operating Systems,” 11 June 2021).

Will TextSniper and Photos Search be Sherlocked once Live Text becomes available? For those sticking with earlier versions of macOS or iOS, or with devices that don’t meet Live Text’s requirements, the answer is clearly no. TextSniper and Photos Search work well now and will continue to do so even for those who don’t upgrade software or hardware soon.

Given that Live Text is still in beta, it’s hard to say how it will eventually compare to TextSniper and Photos Search. In theory, Live Text will let you select and copy text in images in Photos, Preview, Safari, and some other apps, so it could replace TextSniper in supported apps on the Mac. When Live Text worked, it was slightly more obvious to use than TextSniper—you just select text and copy it rather than taking a screenshot of sorts. However, in my quick testing, Live Text was persnickety to use in Safari, and the quality of its OCR wasn’t always as good as TextSniper’s.

Live Text also supposedly lets you search for recognized text, but this feature was so haphazard and failure-prone in Spotlight in beta 6 of iPadOS 15 on a 10.5-inch iPad Pro that I couldn’t effectively compare it to Photos Search. Searching for text in the Photos app itself didn’t work at all. On an M1-based MacBook Air running beta 5 of Monterey, Spotlight didn’t seem capable of searching for text within images in Photos, although it found text in a photo stored in Notes.

The jury must remain out on Live Text’s functionality until it ships, but my feeling is that TextSniper and Photos Search will continue to provide broader compatibility and more adjacent features regardless. So if you have ever wanted to work with text imprisoned within images, one or both of them may give you the power you need.

I use TextSniper — it is a tool I had wanted for a long time. Countless uses for researchers and developers (and who isn’t a researcher these days?) If the dev added statistics, I’d like to see them. An app like Timing could also be enhanced to give information about the context in which one tends to snip text.

The Photos Search app is intriguing. The Photos app has a setting to store images in iCloud and download only some of them. I don’t know how Photos Search would work in that case.

I thought Apple Photos did not have automation, but it does. Either that’s new or I overlooked it years ago. So I’ve asked my team at CogSci Apps to look at adding support to Hook for it (copying links to photos). Many of us have > 10k photos, so indexing/accessing is a big issue. And as you intimated in your article, who can bother adding metadata to everything?

How does Photos Search compare to Orga?

Regarding iCloud: Photos Search will download the photo and process it locally. The photo’s image data will then be released from memory again. Thanks! - Alco Blom, Developer of Photos Search.

Apple Photos supports Apple Events very well and I use it to add keywords to the found photos, if you want. - Alco Blom

No idea, but I’ll take a look at Orga.

I also missed an app that’s akin to TextSniper, LiveScan from Gentlemen Coders (the firm behind RAW Power). It runs on macOS and iOS, and takes roughly the same screenshot-style approach to capturing text.

https://www.gentlemencoders.com/livescan/

I have TextSniper and find it very useful. I was astonished to see it reliably recognize labels on curved bottles.

There is (yet) another utility, CleanShot, that recently added text recognition. CleanShot is virtually a Swiss Army knife of screen capture functions and well worth a look.

Oh well, that’s embarrassing. I use CleanShot all the time but missed that an update had added text recognition. There may have to be a followup article.

This article convinced me to check out both of these apps. Thank you. By the way, there’s an easy way to keep (and find) your vaccination card on iPhone, just using Notes and the scanner function. Here are the details: Keep your vaccination card in Notes (Gordon Meyer)

Interesting.

FYI, in Europe, most national health apps now have a digital copy to flash when needed to go in somewhere or to download as a PDF when making bookings. Although, TBH, these are only really needed for certain places/uses. Because many countries are hitting ~70-80% double-shot adult vaccination levels, I think a lot of laxity is setting in.

The reason apps are adding OCR features is that Apple took the unusual step of making their OCR engine available to developers before adding the functionality to Apple’s own apps. It’s the Text Recognition portion of the Vision framework, described here:

https://developer.apple.com/documentation/vision/recognizing_text_in_images

For example, Nisus Writer Pro, my favorite word processor, added the ability to perform OCR on any image dragged into a document back in November 2020.

Closing the loop: we found that it is possible. So we’ve added a custom hook://photos URL scheme so people can create links to their photos, per Using Hook with Apple Photos App. We’ve given credit to the TidBITS article and mentioned the Photos Search Mac app. We’ll see if we can create links to Photos via the Photos Search Mac app (i.e., you’d invoke Hook in the app and use Hook’s Copy Link function).

Earlier in the year I came across TextBuddy for macOS – one of the features that had caught my eye was text extraction from images.

I’ve made limited use of the utility in general, never mind this particular feature, so I can’t comment on how well it stacks up against the apps featured here, but testing it against some of the images in this article produced mixed results. The High Noon Athletic Club logo produced limited success, but other, cleaner sources produced excellent results.