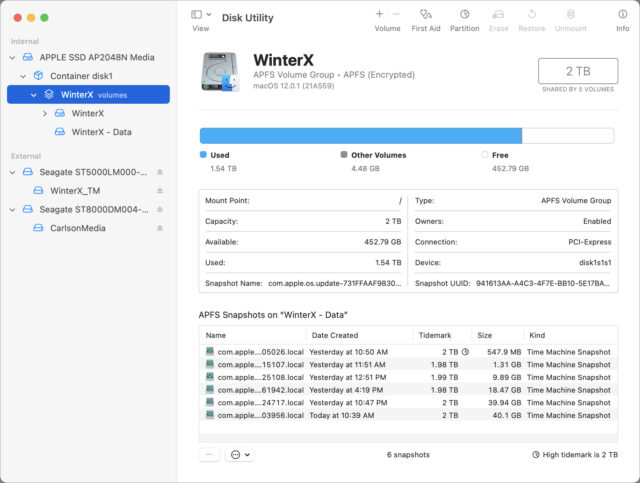

Disk Utility in macOS 12 Monterey Manages APFS Snapshots

Howard Oakley at the Eclectic Light Company points out that macOS 12 Monterey’s version of Disk Utility now supports viewing and managing APFS snapshots. APFS snapshots provide rolling copies of a drive’s state so you can easily restore in case something goes horribly wrong (see “What APFS Does for You, and What You Can Do with APFS,” 23 July 2018). Those snapshots are handy but haven’t been easy to work with in previous versions of macOS (see “How to Work with and Restore APFS Snapshots,” 9 May 2019). With Disk Utility in Monterey, you can mount a snapshot like an external drive and copy data from it, or even delete previous snapshots to save disk space. Oakley also points out some new command-line options for working with APFS snapshots.

I like this Howard Oakley.

Josh - can you write something about what are APFS snapshots and their benefits please?

Snapshots are nothing new to the industry. Various kinds of network servers have been using them for decades. The earliest example I’m personally familiar with was developed by NetApp for their file server appliances. Here’s a white paper that, in part, explains their implementation.

The general idea is that, when using a snapshot-capable file system, each disk block can belong to multiple files. There is a per-block reference count stored, so that when files are deleted, the block isn’t freed until all of the files using the block have been deleted.

This is used in conjunction with “copy-on-write” technology. When a disk block is shared by multiple files and one tries to write to the block, it is duplicated (and the file’s internal data updated to reflect that it is now using a different block) before the write operation.
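To make the two ideas concrete, here is a minimal in-memory sketch of reference counting plus copy-on-write. It is only a toy model: the `BlockStore` and `File` classes and their methods are names I made up for illustration, and a real file system (APFS included) is far more involved.

```python
class BlockStore:
    """Toy 'disk': block id -> contents, plus a reference count per block."""

    def __init__(self):
        self.data = {}
        self.refs = {}
        self.next_id = 0

    def alloc(self, payload):
        block_id = self.next_id
        self.next_id += 1
        self.data[block_id] = payload
        self.refs[block_id] = 1
        return block_id

    def retain(self, block_id):
        self.refs[block_id] += 1

    def release(self, block_id):
        self.refs[block_id] -= 1
        if self.refs[block_id] == 0:      # last user gone: the block is free
            del self.refs[block_id]
            del self.data[block_id]


class File:
    def __init__(self, store, chunks):
        self.store = store
        self.blocks = [store.alloc(chunk) for chunk in chunks]

    def clone(self):
        """Create a second file that shares every block with this one."""
        twin = File(self.store, [])
        twin.blocks = list(self.blocks)
        for block_id in twin.blocks:
            self.store.retain(block_id)
        return twin

    def write(self, index, payload):
        old = self.blocks[index]
        if self.store.refs[old] > 1:
            # Shared block: write to a fresh block, then drop our reference.
            self.blocks[index] = self.store.alloc(payload)
            self.store.release(old)
        else:
            # Sole owner: safe to overwrite in place.
            self.store.data[old] = payload

    def read(self):
        return [self.store.data[block_id] for block_id in self.blocks]

    def delete(self):
        for block_id in self.blocks:
            self.store.release(block_id)
        self.blocks = []


store = BlockStore()
a = File(store, ["intro", "body", "end"])
b = a.clone()                          # no new blocks are allocated
blocks_after_clone = len(store.data)
b.write(1, "new body")                 # only the changed block is duplicated
blocks_after_write = len(store.data)
a.delete()                             # frees only the block nobody else uses
blocks_after_delete = len(store.data)
```

In this model, cloning costs nothing in storage, a write to a shared block costs exactly one new block, and deleting one of the files frees only the blocks that the other file no longer references.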

These technologies (reference counting and copy-on-write) allow an operating system to do many useful things.

One of the most obvious is APFS’s ability to perform quick copies of files. As you may have noticed, duplicating a file in APFS using the Finder completes almost instantly, regardless of its size. This is because it’s not actually copying all the data to a new set of disk blocks. Instead, it creates a new file that uses all of the original file’s disk blocks for storage. Later on, if you modify the file, the system allocates new storage for those blocks that have changed, but everything unchanged remains shared with the original.

In addition to speeding up file-copy operations, it also saves space on your storage device, because it’s not keeping two copies of data for content that is known to be identical.

A snapshot is basically an extension of this concept. Instead of performing the above “fast copy” operation on a single file, it is performed on the entire volume’s directory structure. You end up with two directory trees, containing the same files and pointing to the same disk blocks. One of them remains writable, while the other is marked read-only.

Afterward, when files are created, deleted and modified, the volume’s writable directory (the active one) is modified as normal. Its disk blocks (and the files’ disk blocks) are duplicated using copy-on-write as necessary. The read-only tree never changes - it holds references to the disk blocks belonging to the original files as they were at the time the snapshot was created. It is a 100% accurate read-only image of the directory and file state for the entire volume at the time the snapshot was created.

Later on, you can create more snapshots, which will preserve the directory and file data at additional points in time.

Of course, when snapshots are used, it means deleting a file doesn’t necessarily create free space on the volume, because the file’s disk blocks are still being used by any snapshots that were created while the file existed. And writing to a file, even without changing its size, may cause free space to be consumed if there is a snapshot holding references to its original content.

This is why you generally don’t want to keep snapshots around forever. After a while, you want to delete them. This will decrement the reference count for every disk block (both directory and file) used by the snapshot. Blocks that are no longer referenced (by the active directory or another snapshot) will be freed up and will be available for new data.
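The snapshot life cycle described above (create, keep working, delete the snapshot to get space back) can be sketched with a toy model. Everything here is an assumption made for illustration: the `Volume` class is hypothetical, each file is a single block, and every write allocates a new block rather than overwriting in place.

```python
from types import MappingProxyType


class Volume:
    """Toy volume: one block per file, to keep the sketch short."""

    def __init__(self):
        self.blocks = {}      # block id -> contents
        self.refs = {}        # block id -> reference count
        self.live = {}        # writable directory: file name -> block id
        self.snapshots = {}   # label -> read-only copy of the directory
        self._next_id = 0

    def _alloc(self, contents):
        block_id = self._next_id
        self._next_id += 1
        self.blocks[block_id] = contents
        self.refs[block_id] = 1
        return block_id

    def _release(self, block_id):
        self.refs[block_id] -= 1
        if self.refs[block_id] == 0:
            del self.refs[block_id], self.blocks[block_id]

    def write(self, name, contents):
        # Simplification: always write to a new block, then drop the old one.
        new = self._alloc(contents)
        old = self.live.get(name)
        self.live[name] = new
        if old is not None:
            self._release(old)

    def delete(self, name):
        self._release(self.live.pop(name))

    def snapshot(self, label):
        # Copy the directory (not the data) and add a reference to each block.
        for block_id in self.live.values():
            self.refs[block_id] += 1
        self.snapshots[label] = MappingProxyType(dict(self.live))

    def delete_snapshot(self, label):
        for block_id in self.snapshots.pop(label).values():
            self._release(block_id)

    def read(self, name, snapshot=None):
        directory = self.live if snapshot is None else self.snapshots[snapshot]
        return self.blocks[directory[name]]

    def used_blocks(self):
        return len(self.blocks)


vol = Volume()
vol.write("notes.txt", "v1")
vol.write("big.mov", "video")
vol.snapshot("monday")

vol.write("notes.txt", "v2")       # consumes a new block; the old one is pinned
vol.delete("big.mov")              # frees nothing while the snapshot exists
used_with_snapshot = vol.used_blocks()
old_notes = vol.read("notes.txt", snapshot="monday")

vol.delete_snapshot("monday")      # now the pinned blocks are released
used_after_cleanup = vol.used_blocks()
```

With the snapshot in place, the volume holds three blocks even though only one file is visible. Once the snapshot is deleted, only the block for the current notes.txt remains.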

Snapshots are typically deleted either on a schedule, or when a volume starts running out of free space. Different operating systems have different algorithms for this.

There are many different ways to present snapshots to users.

One way is to present a GUI (like Time Machine) where you can open a folder and then view it as it was at different points in time.

Another way (e.g. the way NetApp does it) is to create a .snapshots directory in each user’s home directory. Within there are subdirectories with names like “weekly1”, “weekly2”, “hourly1”, “hourly2”, etc., each one containing a snapshot of that user’s home directory at various points in time.

On a NetApp device, the system administrator configures a schedule for snapshot creation and deletion. For example, one server I used to use was configured to create “hourly” snapshots every 4 hours (retaining the most recent 5), “daily” snapshots every day at midnight (retaining the most recent 6) and “weekly” snapshots every Sunday at midnight (retaining the most recent 3).
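As a rough illustration of a schedule like that one, here is a small simulation of the rotation. It is not NetApp's implementation. The `SCHEDULE` table, the function names, the assumption that "every 4 hours" means hours 0, 4, 8 and so on, and the choice to number the newest snapshot 1 are all mine.

```python
from collections import deque
from datetime import datetime, timedelta

# kind -> (how many to retain, rule deciding whether one is due at time t)
SCHEDULE = {
    "hourly": (5, lambda t: t.hour % 4 == 0),
    "daily": (6, lambda t: t.hour == 0),
    "weekly": (3, lambda t: t.hour == 0 and t.weekday() == 6),  # 6 = Sunday
}


def simulate(start, hours):
    """Step through `hours` hourly ticks and return the retained snapshots."""
    kept = {kind: deque(maxlen=keep) for kind, (keep, _) in SCHEDULE.items()}
    for step in range(hours):
        now = start + timedelta(hours=step)
        for kind, (_, is_due) in SCHEDULE.items():
            if is_due(now):
                # Newest goes to the front; a full deque drops its oldest entry.
                kept[kind].appendleft(now)
    return kept


def names(kept):
    """Label snapshots 'hourly1', 'hourly2', ... with 1 being the newest."""
    return {
        f"{kind}{index}": when
        for kind, queue in kept.items()
        for index, when in enumerate(queue, start=1)
    }


# Four weeks of operation, starting on Monday 1 November 2021 at midnight.
labels = names(simulate(datetime(2021, 11, 1), 24 * 28))
```

However long the simulation runs, the number of snapshots never exceeds 5 + 6 + 3 = 14, which is what keeps the storage overhead bounded.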

Apple’s use for Time Machine on an APFS volume is to create a snapshot for each backup created, deleting them according to Time Machine’s schedule. This means (if nothing goes wrong) hourly backups for the past 24 hours, daily backups for the past month and weekly backups retained until the volume fills up (after which the oldest are deleted in order to free up space for new files).
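A thinning rule of that shape could look something like the sketch below. This is not Time Machine's actual algorithm. The `thin` function is hypothetical, "a month" is assumed to be 30 days, the newest backup in each day or week is the one kept, and the final step (dropping the oldest weeklies when the volume fills up) is not modeled.

```python
from datetime import datetime, timedelta


def thin(backups, now):
    """Return the backups that survive an hourly/daily/weekly thinning pass."""
    keep = []
    seen_days = set()
    seen_weeks = set()
    for taken in sorted(backups, reverse=True):     # newest first
        age = now - taken
        if age <= timedelta(hours=24):
            keep.append(taken)                      # keep every recent backup
        elif age <= timedelta(days=30):
            day = taken.date()
            if day not in seen_days:                # one per calendar day
                seen_days.add(day)
                keep.append(taken)
        else:
            week = tuple(taken.isocalendar())[:2]   # (ISO year, ISO week)
            if week not in seen_weeks:              # one per week
                seen_weeks.add(week)
                keep.append(taken)
    return sorted(keep)


# Sixty days of hourly backups, thinned as of noon on 28 November 2021.
now = datetime(2021, 11, 28, 12)
hourly_for_60_days = [now - timedelta(hours=h) for h in range(60 * 24 + 1)]
kept = thin(hourly_for_60_days, now)
```

The point of a scheme like this is that the number of retained snapshots grows very slowly with time, even though a new one is created every hour.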

What are the benefits of snapshots and related technologies? There are a few:

Fast file copies. When duplicating files to a new location on the same volume, the data isn’t actually copied - the system only increments the reference counts of the files’ disk blocks, so the copy completes very quickly.

Deduplication. Two files that are copies of each other (on the same volume) share the same disk blocks, so there’s more free space available.

Very fast backups. When used by Time Machine or other kinds of backup software, you can create a snapshot very quickly - usually within seconds - that contains a preserved copy of the entire volume. You can then take all the time you need to back up that snapshot’s contents to another storage device without worrying that some of the files may be changing (which could create a backup with inconsistent data).

Simpler incremental backups. If your backup device is an APFS clone of an original volume, you can create a snapshot for each backup you create and then copy over only the files that have changed. This is very efficient (no unnecessary duplication of storage for files that have not changed), and you don’t need to mess around with things like fragile networks of file links (as Time Machine does on HFS+ volumes) or specially-preserved databases of old files (as Carbon Copy Cloner does with its SafetyNet when snapshots are not used).

If you want/need to preserve the file system at some point in time (e.g. before a major app update), it is (or should be) quick and easy to manually create snapshots. They’re not a complete substitute for a backup (since they won’t protect against device failure), but you can use them to roll back the entire storage volume to the snapshot’s state if necessary.

When used with a suitably convenient UI, you can implement something like Faronics Deep Freeze, to let you easily create and restore well-known (and supported) configurations. This is great for public-facing computers (e.g. store displays, hotel business centers, etc.), because you can quickly revert everything changed by the public (e.g. every day, or after each user session ends), no matter what the public may have changed.

macOS Big Sur (and later) uses snapshots to protect the System volume (containing the OS kernel and other related critical files). After installing the OS or an update, a snapshot is created. The snapshot is signed (a cryptographic hash of it is created, to allow detection of any changes to the snapshot) and is used as the System volume on subsequent boots. This ensures that only Apple can ever change the contents of the System volume and that corruption (or hacking) can be detected.
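The general idea of detecting changes with a cryptographic hash can be shown in a few lines. This is only a sketch of the principle and not how Apple actually seals the System volume. The `seal` function, the file paths and the file contents are all invented for the example.

```python
import hashlib


def seal(files):
    """Compute one SHA-256 digest covering every path and its contents."""
    digest = hashlib.sha256()
    for path in sorted(files):                  # fixed order: stable result
        digest.update(path.encode("utf-8"))
        digest.update(b"\0")                    # separator between path and data
        digest.update(hashlib.sha256(files[path]).digest())
    return digest.hexdigest()


# Hypothetical system files, recorded at "install time".
system = {
    "/System/kernel": b"kernel bytes",
    "/usr/bin/tool": b"tool bytes",
}
recorded = seal(system)

# Later: an untouched copy still matches the recorded seal...
unchanged = seal(dict(system)) == recorded

# ...but any modification, however small, produces a different seal.
system["/usr/bin/tool"] = b"tampered"
tampering_detected = seal(system) != recorded
```

Because the snapshot is read-only and the recorded value is checked against it, a mismatch means something other than the installer has altered the contents.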

Howard’s a great guy! Jeff Carlson wrote about snapshots a few years back, and we linked that in the ExtraBIT.

A quick question regarding APFS file duplicating as described above.

I’ve been known to duplicate a file as a starting point for major changes to a document - say a book in InDesign where I may want to reuse the layout. Given an APFS ‘duplicate’ is still referencing the original file, does that mean the new file is hosed if the original file gets corrupted?

IOW, Book A is duplicated and renamed Book B. Some changes are made to Book B. Book A gets corrupted so is Book B now also corrupt?

Or are there sufficient protections in APFS for this not to occur?

This depends on the app’s behavior and what kind of corruption you’re talking about.

Some apps save changes by only writing the parts of the file that changed. Database-like files (especially ones where the content may be too big to fit in memory all at once) tend to work that way, with the app reading and writing only the parts that you are working with. For those apps, the working file and the duplicate will share the blocks that have no changes, but will have different blocks where they have changed.

Other apps (especially those that store your entire document in memory while you’re working, or that use compressed/encrypted file formats) overwrite the entire file whenever you click Save. For these apps, once you save changes to it, the working file and the duplicate will have separate sets of blocks, because everything got replaced during the save operation.

Regarding corruption, if it is caused by a bug in your apps writing bad data to the file, that won’t break the original. The OS will use copy-on-write so the new (bad) data will be written to separate blocks.

If, however, the corruption is caused by a bug in the APFS file system software itself or a hardware failure on your storage device, and that causes a shared block to get corrupted, then it will affect both files.
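A tiny model of the Book A / Book B case may help here. The block numbers and contents are made up. The only point is which file sees damage to which block.

```python
# Toy disk: block id -> contents.
blocks = {
    0: "chapter 1",             # shared by both books (never modified)
    1: "chapter 2",             # used only by Book A
    2: "chapter 2, revised",    # used only by Book B (created by copy-on-write)
}
book_a = [0, 1]
book_b = [0, 2]


def contents(book):
    return [blocks[block_id] for block_id in book]


# Damage to a block that only Book A uses leaves Book B untouched.
blocks[1] = "<damaged>"
b_after_private_damage = contents(book_b)

# Damage to the shared block (file system bug or failing hardware) hits both.
blocks[0] = "<damaged>"
a_after_shared_damage = contents(book_a)
b_after_shared_damage = contents(book_b)
```

Note that an app writing bad data never produces the second case: the write goes through copy-on-write, so it lands in a new block belonging to just one of the files.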

But I don’t think this should be an area of concern, beyond the concern we (should) always have. If there is a bug in the file system software or if you have a hardware failure, then there is potential for much more catastrophic failures - including loss of all your files on the device. Which is why it is still (and always will be) important to make backups to physically separate storage devices (e.g. Time Machine, clones, cloud-based backups, etc.) in addition to making local duplicates of files.

One additional point, just for clarification.

When you duplicate a file, APFS doesn’t track which is the “original” and which is the “duplicate”. They are simply two (or more) files sharing the same disk blocks. Modify shared blocks on one and they will be duplicated (copy-on-write). Modify shared blocks on the other and the same will happen.

It’s not like a version control system where an original file is stored along with a list of differences that are applied to it in order to construct a later version (e.g. as is done in many photo-management apps). With a system like that, corruption to the original would result in corruption to all the subsequent versions.

But with APFS file duplication, there is no chain of diffs. There are simply two files that happen to share common disk blocks for the content that has not yet been modified.

Are all the files in all Snapshots backed up with, say, CCC backups? Or just whatever is considered the current visible files? I’m seeing more rapid than usual growth in the size of CCC sparsebundles, and didn’t want to waste time investigating if this was natural growth due to snapshots.

And on a related note, is it worth deleting old snapshots so that free disk space doesn’t get used up to the point that it causes other problems? (Like installation issues, for example). Or is deleting them even possible? I see Disk First Aid spending a lot of time checking snapshots.

CCC does not clone your snapshots. Each time it runs, it backs up those files (from the working file system) that changed since the previous backup.

I assume that when you say “CCC sparsebundles” that you are backing up to a disk image on a remote server. This image will grow as needed to accommodate its contents.

CCC can be configured to create snapshots on an APFS destination volume. It will use them to preserve the state of prior backups (so you can view the results of any backup made to the device). It will auto-remove the oldest snapshots (only the ones it creates) when the destination volume fills up, in order to create enough free space for new backups.

CCC can also use its legacy “Safety Net” feature (a root-level directory containing old versions of files) on the destination instead of snapshots. When it is used, CCC will purge the Safety Net of the oldest files when space runs out on the destination volume.

Either way, if the destination is a disk image, CCC’s retention logic will delete old Safety Net files/snapshots only when the image’s free space gets too low. This will depend on how the image was created.

Regarding snapshots in general…

Snapshots that are automatically created by macOS (e.g. Time Machine on an APFS destination and TM’s local backups) will be automatically deleted, either on a schedule or when needed to create free space. They shouldn’t cause storage problems.

Snapshots that are created by other software are not (as far as I know) automatically deleted by macOS, but they should be deleted by the app that created them. For example, CCC auto-deletes its snapshots according to its (configurable) retention policy.

If you manually create a snapshot for some reason, it will only be deleted when you manually delete it.

In Monterey, the new Disk Utility update should let you manually delete snapshots. On prior versions of macOS, Disk Utility did not have this support, but you could do it with command-line tools or third-party GUI tools (including CCC).
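For the command-line route, `tmutil listlocalsnapshots` and `tmutil deletelocalsnapshots` are the relevant Time Machine commands. Here is a small wrapper sketch. The helper names are my own, and the regular expression assumes snapshot names of the form `com.apple.TimeMachine.<date stamp>.local`, so check it against what your own system prints before relying on it. Deleting may require administrator privileges.

```python
import re
import subprocess

# Assumed name format: com.apple.TimeMachine.YYYY-MM-DD-HHMMSS.local
SNAPSHOT_RE = re.compile(r"com\.apple\.TimeMachine\.(\d{4}-\d{2}-\d{2}-\d{6})")


def parse_snapshot_dates(listing):
    """Extract the date stamps from `tmutil listlocalsnapshots` output."""
    return SNAPSHOT_RE.findall(listing)


def list_local_snapshots(mount_point="/"):
    """Run tmutil (macOS only) and return the snapshot date stamps."""
    result = subprocess.run(
        ["tmutil", "listlocalsnapshots", mount_point],
        capture_output=True, text=True, check=True,
    )
    return parse_snapshot_dates(result.stdout)


def delete_local_snapshot(date_stamp):
    """Delete the local snapshot with the given date stamp (macOS only)."""
    subprocess.run(["tmutil", "deletelocalsnapshots", date_stamp], check=True)


# Made-up sample output, used here only to exercise the parser.
sample = (
    "Snapshots for disk /:\n"
    "com.apple.TimeMachine.2021-11-02-101530.local\n"
    "com.apple.TimeMachine.2021-11-02-111502.local\n"
)
stamps = parse_snapshot_dates(sample)
```

On a Mac you would call `list_local_snapshots()` to see what exists and pass one of the returned stamps to `delete_local_snapshot()`.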

Disk First Aid should inspect the snapshots. And they will take a while, because each one is a full copy of the volume’s directory tree. If your volume has 100 snapshots, then the time spent inspecting snapshots will be 100x the time spent on the active directory. It’s not a bug, but the way such a tool has to work.

See also: