#1513: Former Apple engineer on Apple’s privacy approach, mirror Apple TV to Mac, WWDC goes virtual, free ACES Conference Prequel, TidBITS 30th podcasts

Former Apple engineer David Shayer joins us again this week to share Apple’s approach to privacy, contrast it to another tech company, and explain why he trusts the COVID-19 exposure notification proposal from Apple and Google. If you’ve ever wanted to display your Apple TV on your Mac’s screen, Josh Centers tells you how to do it, along with what won’t work. In conference news, Apple announced that WWDC will start—virtually—on June 22nd, and a free prequel to the ACES Conference has expanded to serve small business owners beyond IT consultants. Finally, if you’d like a trip down memory lane, TidBITS publisher Adam Engst made the rounds of numerous podcasts recently to celebrate the 30th anniversary of TidBITS—listen in for great stories from the early days of the Mac. Notable Mac app releases this week include HandBrake 1.3.2, Retrospect 17.0.1, Bookends 13.4.1, Piezo 1.6.5, 1Password 7.5, and PopChar X 8.10.

Apple’s Virtual WWDC to Start June 22nd

Apple has announced the start date for its virtual Worldwide Developers Conference: 22 June 2020. That’s a bit later than usual, perhaps due to the difficulty of reshuffling the format and the added complication of the company’s employees working remotely. We’re lucky that Apple is holding WWDC at all—Google completely canceled its I/O conference after promising to hold it remotely, and Facebook replaced its F8 developer conference with “a series of updates throughout the year.”

Happily, no $1599 ticket is required—this year’s virtual conference will be open to all registered Apple developers. We anticipate that the keynote will be streamed for everyone to watch. Apple is encouraging all interested developers to install the Apple Developer app for iOS, iPadOS, and tvOS, which will offer more information and session videos as they become available.

Since WWDC student scholarships normally bring winners to the in-person conference, there’s no point in offering them this year, so Apple is instead holding a Swift Student Challenge. Interested students should build a Swift Playground that can be experienced in 3 minutes or less and submit it to Apple by 11:59 PM PDT on 17 May 2020. Winners will receive an exclusive WWDC20 jacket and pin set.

ACES Conference: The Prequel Free for All Small Business Owners

With my TidBITS Content Network hat on, I’ve attended the ACES Conference for IT consultants for the past few years and was planning on jetting down to Atlanta for this year’s conference on May 19th and 20th. Given the coronavirus pandemic, that’s not happening; it has been postponed until October.

However, rather than merely shift the date, conference organizer Justin Esgar decided to squeeze today’s lemons into lemonade. The result is ACES Conference: The Prequel, a virtual event that will take place on the original conference dates, with an expanded focus on small business owners across all industries. The theme is topical and straightforward: How Your Business Is Going to Survive COVID-19. Topics will cover marketing, sales, finance, branding, mental health, and more.

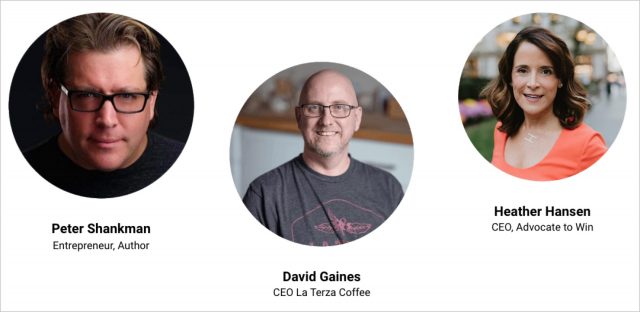

The best part? Everyone can register for ACES Conference: The Prequel for free. It includes 2 days of speakers and sessions, and you can drop in any time to join sessions live or watch recordings from earlier in the day. Speakers include author Peter Shankman, La Terza Coffee CEO David Gaines, Advocate to Win CEO Heather Hansen, executive coach Jason Womack, and podcaster Josh Tapp, plus other entrepreneurs, business leaders, financial coaches, and small business owners.

If you are an IT consultant, a $199 IT Consultant Pass includes bonus content and resources; breakout sessions and IT industry roundtable discussions, which proved highly effective at last year’s event; and a $199 credit toward a ticket for the in-person ACES Conference scheduled for October 20–21.

10% of the revenue from paid tickets, plus donations raised at the event, will go to the Stupid Cancer charity to help adolescents and young adults battling cancer during these universally uncertain times.

(In the interests of full disclosure, I’ve spoken at and sponsored ACES in the past, and I’m on the ACES board of advisers, although it’s an entirely uncompensated position. And while I have no connection with Stupid Cancer, a dear friend is dealing with terminal pancreatic cancer right now, so I’m much more aware of just how much a group like Stupid Cancer can help.)

Four TidBITS 30th Anniversary Podcasts

Perhaps my favorite part of big TidBITS anniversaries is that they’re great excuses to venture down memory lane and dredge up the best stories from the early days of TidBITS. Our recent 30th anniversary resulted in a slew of podcast invitations, all of which were tremendous fun. If you’ve got some time on your hands (and let’s face it, many people do right now) and want to relive some of the early days of the Mac world, I’d encourage you to give them a listen. There are surprisingly few overlaps in content.

- MacBreak Weekly: I always enjoy my sporadic virtual visits to the set of Leo Laporte’s MacBreak Weekly, and this show with Leo, Rene Ritchie, and my old friend and birthday buddy Andy Ihnatko was even more fun than usual. Of the four, it’s the least focused on the TidBITS anniversary, since MacBreak Weekly has so much other news to talk about, but there was still time to discuss content from our first issue, including the After Dark screensaver, the Outbound Macintosh portable, and refilling DeskWriter ink cartridges.

- The Talk Show: This was my first time as a guest on John Gruber’s podcast, and while it clocks in at almost 2 hours of talking, I tremendously enjoyed trading stories about the early days of the Mac and TidBITS with John. If you’ve been using the Mac since the late 1980s or early 1990s, try to find the time to listen to this conversation. We got into so many wonderful details about an era that was, as it turns out, more special than any of us could have realized then.

- Chit Chat Across the Pond Lite: Allison Sheridan and I have traveled in roughly similar circles for many years, but it wasn’t until we found ourselves sitting next to each other at last year’s MacTech Conference that we got a chance to connect for real. While this podcast discussion starts at the founding of TidBITS, it then ranges widely, touching on topics like my current favorite Apple hardware, problems with Apple’s annual operating system update schedule, and why I pay no attention to rumors anymore.

- MacVoices (Part 1 and Part 2): Chuck Joiner and I have been recording podcasts for many years, and I take inordinate pleasure in saying things to get a rise out of Chuck since his on-air persona is always so measured. We ended up talking about the publication Tonya and I worked on at Cornell when we were undergrads, how we’ve guaranteed that all TidBITS article links from the past continue to work, and why email is the cockroach of Internet technologies, among much else.

I hope you like listening to these conversations as much as I enjoyed having them. For others out there in the podcast world, I’m happy to chat, particularly if we can uncover stories or topics that have long been buried by the avalanche of time.

How to Mirror Your Apple TV to Your Mac for Screenshots or Presentations

A reader recently emailed me to ask how to mirror an Apple TV on a Mac. There are two main reasons you might want to do this: to capture screenshots or video for documentation, or so you can give a remote presentation that involves the Apple TV.

When I wrote the first edition of Take Control of Apple TV, I had to use an expensive and awkward Elgato capture box to take screenshots. Apple subsequently built this capability into QuickTime Player. With the Apple TV HD (also known as the fourth-generation Apple TV), you had to connect it to your Mac with a USB-C cable, but the Apple TV 4K lacks a USB-C port. Nowadays, all that’s necessary to capture the Apple TV’s output in QuickTime Player is that the Apple TV and the Mac be on the same Wi-Fi network.

Setup

Follow these steps:

- Open QuickTime Player.

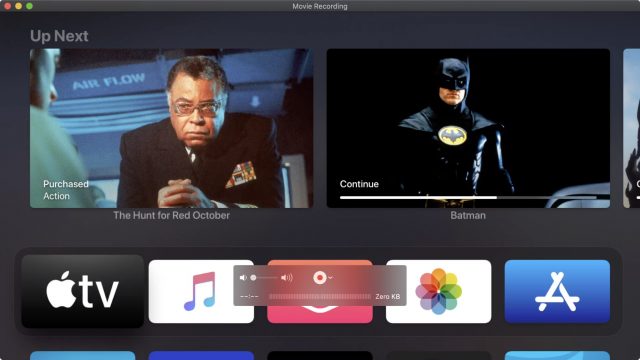

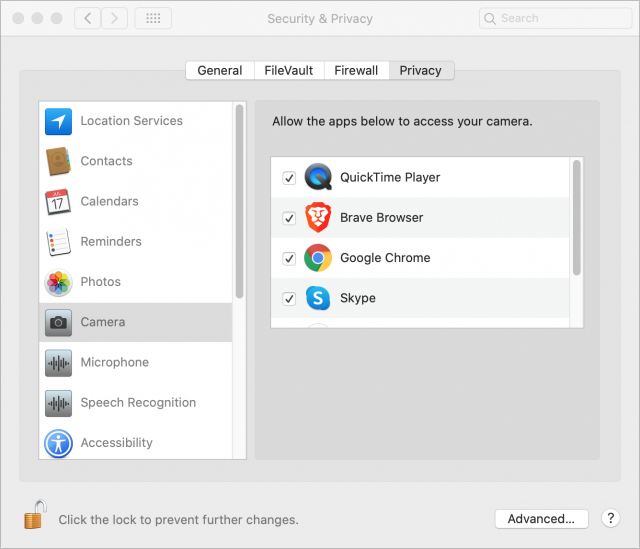

- Choose File > New Movie Recording. In macOS 10.14 Mojave and earlier, a window opens, showing your webcam by default. In 10.15 Catalina, you’ll need to give QuickTime Player permission to access the camera in System Preferences > Security & Privacy > Privacy > Camera.

- Put your mouse pointer in the window to reveal the controller.

- Click the arrow next to the record button.

- Choose your Apple TV from the menu.

- The first time you connect to your Apple TV, it displays a code that you have to enter on the Mac. If the Apple TV is in another room, this is a little tricky to do on your own because the request times out fairly quickly.

When you choose a “camera,” you also have the option to choose a “microphone,” and any Apple TV you have will also show up in that section. However, I’ve never successfully routed audio from an Apple TV to my Mac. If you’ve had better luck, let me know in the comments.

Usage

Once you’ve run through those steps, the Apple TV’s interface is mirrored to the QuickTime Player window, making it easy to capture a screenshot or, if you’re doing a presentation, to share like any other window on the Mac.

Happily, because the Siri Remote works over Bluetooth, you can use it to control the Apple TV in the QuickTime Player window even if you’re in another room. You could also use the Apple TV controls in Control Center on your iPhone.

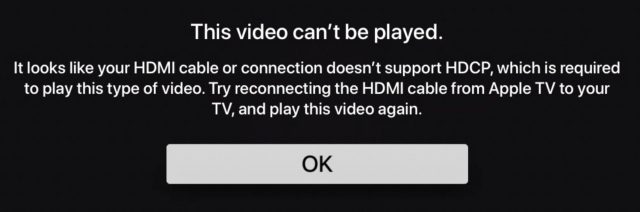

As you would expect, Apple won’t let you capture screenshots or video from movies or TV shows. Whenever you try to play a protected video in any app (Apple TV, Netflix, Amazon Prime, CBS All Access, and so on), QuickTime Player blocks the capture by showing a black screen. (With purchased movies, you’ll get an HDCP error.) Navigate out of the video by pressing the Menu button, and you can once again use the Apple TV interface. Games and other non-video apps aren’t blocked in this way, and neither is YouTube.

However, QuickTime Player is a great way to capture screenshots and video of the Apple TV interface. You can use the standard macOS screenshot commands. To take a clean screenshot without the control bar or any Mac window trimmings in the shot, follow these steps:

- Resize the QuickTime Player window as you see fit.

- Move your mouse pointer outside of the QuickTime Player window.

- Press Command-Shift-4, followed by the Space bar to switch to window capture.

- Hover the camera pointer over the QuickTime Player window.

- Option-click to capture the window without its drop shadow.

If you’re helping someone learn how to use the Apple TV interface remotely or otherwise giving a presentation about it, you can share the QuickTime Player window just as you would any other Mac window using Skype, Zoom, or the like. Video won’t be smooth, but it will be more than sufficient to demo usage.

I discuss taking screenshots and many other tips in Take Control of Apple TV, which I recently overhauled to make it easier to follow and better fit Apple’s current TV strategy.

Former Apple Engineer: Here’s Why I Trust Apple’s COVID-19 Notification Proposal

We all use apps. We know they capture information about us. But exactly how much information? I’ve worked as a software engineer at Apple and at a mid-sized tech company. I’ve seen the good and the bad. And my experience at Apple makes me far more comfortable with the system Apple and Google have proposed for COVID-19 exposure notification. Here’s why.

Apple Respects User Privacy

When I worked on the Apple Watch, one of my assignments was to record how many times the Weather and Stocks apps were launched and report that back to Apple. Recording how many times each app is launched is simple. But reporting that data back to Apple is much more complex.

Apple emphasizes that its programmers should keep customer security and privacy in mind at all times. There are a few basic rules, the two most relevant of which are:

- Collect information only for a legitimate business purpose

- Don’t collect more information than you need for that purpose

That second one could use a little expansion. If you’re gathering general usage data (how often do people check the weather?), you can’t accidentally collect something that could identify the user, like the city they’re looking up. I didn’t realize how tightly Apple enforces these rules until I was assigned to record user data.

Once I had recorded how many times the Weather and Stocks apps were launched, I set up Apple’s internal framework for reporting data back to the company. My first revelation was that the framework strongly encouraged you to transmit numbers, not strings (words). By not reporting strings, your code can’t inadvertently record the user’s name or email address. You’re specifically warned not to record file paths, which can include the user’s name (such as /Users/David/Documents/MySpreadsheet.numbers). You also aren’t allowed to play tricks like encoding letters as numbers to send back strings (such as A=65, B=66, and so on).
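Apple’s internal reporting framework isn’t public, so what follows is only a hypothetical Python sketch of the numbers-not-strings idea. The counter accepts metric names only from a pre-approved list (the names here are invented) and only non-negative integer counts, so a user name or file path has no way into the payload. No type check can stop someone from encoding letters as numbers, though, which is why the rule also depends on policy and human review.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical metric names that a privacy review has approved in advance.
APPROVED_METRICS = frozenset({"weather_app_launches", "stocks_app_launches"})


class LaunchCounter:
    """Records usage as integer counts only; no free-form strings are accepted."""

    def __init__(self, approved: frozenset[str] = APPROVED_METRICS) -> None:
        self._approved = approved
        self._counts: Counter[str] = Counter()

    def increment(self, metric: str, amount: int = 1) -> None:
        # Only pre-approved metric names, never user-supplied text.
        if metric not in self._approved:
            raise ValueError(f"metric {metric!r} has not been approved")
        # bool is a subclass of int, so rule it out explicitly.
        if not isinstance(amount, int) or isinstance(amount, bool) or amount < 0:
            raise TypeError("only non-negative integer counts may be recorded")
        self._counts[metric] += amount

    def report(self) -> dict[str, int]:
        """The payload sent home: approved names mapped to plain integers."""
        return dict(self._counts)


counter = LaunchCounter()
counter.increment("weather_app_launches")
counter.increment("weather_app_launches")
counter.increment("stocks_app_launches")
print(counter.report())  # {'weather_app_launches': 2, 'stocks_app_launches': 1}
```

A dashboard built on payloads like this can show launch counts per day or per OS version, but nothing more.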

Next, I learned I couldn’t check my code into Apple’s source control system until the privacy review committee had inspected and approved it. This wasn’t as daunting as it sounds. A few senior engineers wanted a written justification for the data I was recording and for the business purpose. They also reviewed my code to make sure I wasn’t accidentally recording more than intended.

Once I had been approved to use Apple’s data reporting framework, I was allowed to check my code into the source control system. If I had tried to check my code into source control without approval, the build server would have refused to build it.

When the next beta build of watchOS came out, I could see on our reporting dashboard how many times the Weather and Stocks apps were launched each day, listed by OS version. But nothing more. Mission accomplished, privacy maintained.

TechCo Largely Ignores User Privacy

I also wrote iPhone apps for a mid-sized technology company that shall remain nameless. You’ve likely heard of it, though: it has several thousand employees and several billion dollars in revenue. Call it TechCo, in part because its approach to user privacy is unfortunately all too common in the industry. It cared much less about user privacy than Apple did.

The app I worked on recorded every user interaction and reported that data back to a central server. Every time you performed some action, the app captured what screen you were on and what button you tapped. There was no attempt to minimize the data being captured, nor to anonymize it. Every record sent back included the user’s IP address, username, real name, language and region, timestamp, iPhone model, and lots more.

Keep in mind that this behavior was in no way malicious. The company’s goal wasn’t to surveil its users. Instead, the marketing department just wanted to know which features were most popular and how they were used. Most important, the marketers wanted to know where people fell out of the “funnel.”

When you buy something online, the purchase process is called a funnel. First, you look at a product, say a pair of sneakers. You add the sneakers to your shopping cart and click the buy button. Then you enter your name, address, and credit card, and finally, you click Purchase.

At every stage of the process, people fall out. They decide they don’t really want to spend $100 on new sneakers, or their kids run in to show them something, or their spouse tells them that dinner is ready. Whatever the reason, they forget about the sneakers and never complete the purchase. It’s called a funnel because it narrows like a funnel, with fewer people successfully progressing through each stage to the end.
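To make the narrowing concrete, here’s a short Python sketch that uses made-up visitor counts for the sneaker example. It computes what fraction of the people at each stage made it to the next one, and what fraction of the original viewers finished.

```python
# Hypothetical counts of people reaching each stage of the sneaker funnel.
FUNNEL = [
    ("viewed sneakers", 1000),
    ("added to cart", 400),
    ("clicked buy", 250),
    ("entered details", 150),
    ("clicked Purchase", 120),
]


def stage_conversion(stages):
    """Pair each stage after the first with the fraction of the previous stage that reached it."""
    return [
        (name, count / prev_count)
        for (_, prev_count), (name, count) in zip(stages, stages[1:])
    ]


for name, rate in stage_conversion(FUNNEL):
    print(f"{name:>16}: {rate:.0%} of the previous stage got this far")

overall = FUNNEL[-1][1] / FUNNEL[0][1]
print(f"Overall, {overall:.0%} of viewers bought the sneakers")
```

The stage with the lowest rate is where marketers would look first.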

Companies spend a lot of time figuring out why people fall out at each stage in the funnel. Reducing the number of stages reduces how many opportunities there are to fall out. For instance, remembering your name and address from a previous order and auto-filling it means you don’t have to re-enter that information, which reduces the chance that you’ll fall out of the process at that point. The ultimate reduction is Amazon’s patented 1-Click ordering. Click a single button, and those sneakers are on their way to you.
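A simple model shows why cutting stages helps. If we assume, purely for illustration, that every stage independently keeps the same fraction p of the people who reach it, then the share who finish n stages is p to the nth power, so each stage you remove multiplies the completion rate by 1/p. The 80% retention figure below is hypothetical.

```python
def completion_rate(retention_per_stage: float, stages: int) -> float:
    """Share of people who finish, assuming (hypothetically) that every stage
    independently keeps the same fraction of the people who reach it."""
    return retention_per_stage ** stages


# Hypothetical 80% retention at each stage; 1 stage approximates 1-Click ordering.
for stages in (5, 3, 1):
    print(f"{stages} stage(s): {completion_rate(0.8, stages):.1%} complete the purchase")
```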

TechCo’s marketing department wanted more data on why people fell out of the funnel, which they would then use to tune the funnel and sell more product. Unfortunately, they never thought about user privacy as they collected this data.

Most of the data wasn’t collected by code that we wrote ourselves, but by third-party libraries we added to our app. Google Firebase is the most popular library for collecting user data, but there are dozens of others. We had a half-dozen of these libraries in our app. Even though they provided roughly similar features, each collected some unique piece of data that marketing wanted, so we had to include every one of them.

The data was stored in a big database that was searchable by any engineer. This was useful for verifying our code was working as intended. I could launch our app, tap through a few screens, and look at my account in the database to make sure my actions were recorded correctly. However, the database hadn’t been designed to compartmentalize access—everyone with any access could view all the information in it. I could just as easily look up the actions of any of our users. I could see their real names and IP addresses, when they logged on and off, what actions they took, and what products they paid for.

Some of the more senior engineers and I knew this was bad security, and we told TechCo management that it should be improved. Test data should be accessible to all engineers, but production user data shouldn’t be. Real names and IP addresses should be stored in a separate secure database; the general database should key off non-identifying user IDs. Data that’s not needed for a specific business purpose shouldn’t be collected at all.

But the marketers preferred the kitchen sink approach, hoovering up all available data. From a functional standpoint, they weren’t being entirely unreasonable, because that extra data allowed them to go back and answer questions about user patterns they hadn’t thought of when we wrote the app. But just because something can be done doesn’t mean it should be done. Our security complaints were ignored, and we eventually stopped complaining.
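The separation the engineers asked for might look something like this minimal Python sketch, in which every class and method name is hypothetical. Real names and IP addresses live only in a restricted identity store, while the general event log that engineers can search records a random pseudonym, the screen, and the button. That’s still enough to count how many people reach each stage of the funnel.

```python
from __future__ import annotations

import secrets


class IdentityVault:
    """Restricted store, the only place real names and IP addresses are kept.

    In practice this would sit behind separate access controls; here it is
    just a separate object.
    """

    def __init__(self) -> None:
        self._identities: dict[str, tuple[str, str]] = {}

    def enroll(self, real_name: str, ip_address: str) -> str:
        # A random pseudonym carries no information about the person.
        pseudonym = secrets.token_hex(16)
        self._identities[pseudonym] = (real_name, ip_address)
        return pseudonym


class EventLog:
    """General analytics store that any engineer may search: pseudonyms only."""

    def __init__(self) -> None:
        self.events: list[tuple[str, str, str]] = []

    def record(self, pseudonym: str, screen: str, button: str) -> None:
        self.events.append((pseudonym, screen, button))

    def people_reaching(self, screen: str) -> int:
        """Distinct pseudonyms that reached a screen, such as one funnel stage."""
        return len({who for who, seen, _ in self.events if seen == screen})


vault = IdentityVault()
log = EventLog()
user = vault.enroll("Jane Example", "203.0.113.7")  # hypothetical user
log.record(user, "cart", "buy")
print(log.people_reaching("cart"))
```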

The app hadn’t been released outside the US when I worked on it. It probably isn’t legal under the European General Data Protection Regulation (also known as GDPR—see Geoff Duncan’s article, “Europe’s General Data Protection Regulation Makes Privacy Global,” 2 May 2018). I presume it will be modified before TechCo releases it in Europe. The app also doesn’t comply with the California Consumer Privacy Act (CCPA), which aims to allow California residents to know what data is being collected and control its use in certain ways. So it may be changing in a big way to accommodate GDPR and CCPA soon.

Privacy Is Baked into the COVID-19 Exposure Notification Proposal

With those two stories in mind, consider the COVID-19 exposure notification technology proposed by Apple and Google. This proposal isn’t about explicit contact tracing: it doesn’t identify you or anyone with whom you came in contact.

(My explanation below is based on published descriptions, such as Glenn Fleishman’s article, “Apple and Google Partner for Privacy-Preserving COVID-19 Contact Tracing and Notification,” 10 April 2020. Apple and Google have continued to tweak elements of the project; read that article’s comments for major updates. Glenn has also received ongoing briefing information from the Apple/Google partnership, and he vetted this retelling.)

The current draft of the proposal has a very Apple privacy-aware feel. Participation in both recording and broadcasting information is opt-in, as is reporting a positive COVID-19 diagnosis. Your phone doesn’t broadcast any personal information about you. Instead, it creates a Bluetooth beacon with a unique ID that can’t be tracked back to you. The ID is derived from a randomly generated daily encryption key, which is created fresh every 24 hours and stored only on your phone. Even that ID isn’t trackable: it changes every 15 minutes, so it can’t be used by itself to identify your phone. Only the last 14 keys (14 days’ worth) are retained.
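Here’s a simplified Python sketch of the key scheme as described above: a random key generated once a day (16 bytes here, an assumption), an ID derived from it for each 15-minute interval (96 per day), and a history that keeps only the 14 most recent keys. The derivation function is also an assumption for illustration; it uses HMAC-SHA256 truncated to 16 bytes, not the exact construction in the Apple/Google specification.

```python
import hashlib
import hmac
import secrets
from collections import deque

ROTATION_MINUTES = 15                            # the broadcast ID changes every 15 minutes
INTERVALS_PER_DAY = 24 * 60 // ROTATION_MINUTES  # 96 IDs per daily key
RETAINED_DAYS = 14                               # only the last 14 daily keys are kept


def new_daily_key() -> bytes:
    """A fresh random key, generated once every 24 hours and kept on the phone."""
    return secrets.token_bytes(16)  # 16 bytes is an assumption for this sketch


def rolling_id(daily_key: bytes, interval: int) -> bytes:
    """Derive the Bluetooth ID broadcast during one 15-minute interval of the day.

    Stand-in derivation (HMAC-SHA256 truncated to 16 bytes); the real
    Apple/Google specification uses a different construction.
    """
    if not 0 <= interval < INTERVALS_PER_DAY:
        raise ValueError("interval must fall within a single day")
    message = b"rolling-id" + interval.to_bytes(4, "little")
    return hmac.new(daily_key, message, hashlib.sha256).digest()[:16]


# deque(maxlen=...) discards the oldest key automatically once 14 are stored.
key_history = deque(maxlen=RETAINED_DAYS)
for _day in range(20):  # simulate 20 days of key generation
    key_history.append(new_daily_key())

today_key = key_history[-1]
first_ids = [rolling_id(today_key, i).hex() for i in range(3)]
print(len(key_history), first_ids)
```

Without the daily key, consecutive IDs look unrelated, which is what keeps an eavesdropper from linking them to one phone.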

Your phone records all identifiers it picks up from other phones in your vicinity, but not the location where it recorded them. The list of Bluetooth IDs you’ve encountered is stored on your phone, not sent to a central server. (Apple and Google confirmed recently that they won’t approve any app that uses this contact-notification system and also records location.)

If you test positive for COVID-19, you then use a public health authority app that works with Apple and Google’s framework to report your diagnosis. You will likely have to enter a code or other information to validate the diagnosis. That prevents the apps from being used for fake reporting, which would cause unnecessary trouble and undermine confidence in the system.
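How the code check works will depend on each public health authority, so this is purely a hypothetical Python sketch. The authority issues a random single-use code to someone with a confirmed positive test, and the server accepts each code exactly once, so a made-up or reused code can’t trigger a report.

```python
import secrets


class HypotheticalVerificationServer:
    """Hypothetical health-authority service: issues single-use codes to people
    whose positive test has been confirmed, and accepts each code only once."""

    def __init__(self) -> None:
        self._unused_codes = set()

    def issue_code(self) -> str:
        while True:
            code = f"{secrets.randbelow(10**8):08d}"  # random 8-digit code
            if code not in self._unused_codes:
                break
        self._unused_codes.add(code)
        return code

    def redeem(self, code: str) -> bool:
        """True only for an issued, unused code; a successful redemption consumes it."""
        if code in self._unused_codes:
            self._unused_codes.remove(code)
            return True
        return False


server = HypotheticalVerificationServer()
code = server.issue_code()        # handed to the patient by the health authority
print(server.redeem(code))        # True: first use is accepted
print(server.redeem(code))        # False: a reused code is rejected
print(server.redeem("12345678"))  # False: no unused codes remain
```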

When the app confirms your diagnosis, it triggers your phone to upload up to the last 14 days of daily encryption keys to the Apple- and Google-controlled servers. Fewer keys might be uploaded, depending on when exposure could have occurred.
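The rule for trimming the upload will also be up to the health authority’s app. As a hypothetical Python sketch, assume the app knows the earliest date the user could have been infectious; it then uploads only keys from that date onward, capped at the last 14 days.

```python
from __future__ import annotations

from datetime import date, timedelta

MAX_UPLOAD_DAYS = 14  # never more than the last 14 daily keys


def keys_to_upload(daily_keys: dict[date, bytes], infectious_from: date,
                   today: date) -> list[bytes]:
    """Pick which stored daily keys to upload after a confirmed diagnosis.

    Hypothetical rule: skip keys from before `infectious_from`, when the user
    could not yet have exposed anyone, and anything older than 14 days.
    """
    earliest = max(infectious_from, today - timedelta(days=MAX_UPLOAD_DAYS - 1))
    return [key for day, key in sorted(daily_keys.items()) if earliest <= day <= today]


today = date(2020, 5, 1)
# Hypothetical stored keys, one per day for the last 14 days (placeholder bytes).
stored = {today - timedelta(days=n): bytes([n]) * 16 for n in range(14)}
upload = keys_to_upload(stored, infectious_from=today - timedelta(days=3), today=today)
print(len(upload))  # 4: the keys for the three days before today, plus today's
```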

If you have the service turned on, your phone regularly downloads the daily diagnosis keys posted by the devices of people with confirmed diagnoses. Your phone then performs cryptographic operations to see whether IDs derived from each key match any Bluetooth identifiers it captured during the period that key covers. If so, you were in proximity and will receive a notification. (Proximity is a complicated question, because Bluetooth’s range varies and devices that are far apart might measure as close together.) Even without an app installed, you will receive a message from the smartphone operating system; with an app, you receive more detailed instructions.
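The matching step can be sketched in Python too. This sketch uses a simplified, hypothetical derivation (HMAC-SHA256 truncated to 16 bytes, not the specification’s real construction) and compares each downloaded key’s 96 derived IDs only against the IDs the phone heard on that key’s day. All of this happens on the phone; nothing is sent anywhere.

```python
from __future__ import annotations

import hashlib
import hmac

INTERVALS_PER_DAY = 96  # 24 hours of 15-minute intervals


def rolling_id(daily_key: bytes, interval: int) -> bytes:
    # Simplified stand-in derivation (HMAC-SHA256 truncated to 16 bytes), not
    # the construction used in the real Apple/Google specification.
    message = b"rolling-id" + interval.to_bytes(4, "little")
    return hmac.new(daily_key, message, hashlib.sha256).digest()[:16]


def find_exposures(diagnosis_keys: list[tuple[int, bytes]],
                   heard_by_day: dict[int, set[bytes]]) -> list[tuple[int, int]]:
    """Return (day, interval) pairs where a diagnosed person's ID was overheard.

    diagnosis_keys: (day number, daily key) pairs downloaded from the server.
    heard_by_day: Bluetooth IDs this phone recorded, grouped by day number.
    Runs entirely on the phone; nothing is uploaded.
    """
    matches = []
    for day, key in diagnosis_keys:
        heard = heard_by_day.get(day, set())
        for interval in range(INTERVALS_PER_DAY):
            if rolling_id(key, interval) in heard:
                matches.append((day, interval))
    return matches


# Hypothetical example: on day 100 this phone overheard one diagnosed
# person's ID (interval 40) plus an unrelated ID.
infected_key = bytes(16)
heard = {100: {rolling_id(infected_key, 40), b"\xff" * 16}}
print(find_exposures([(100, infected_key), (100, b"\x01" * 16)], heard))
```

Restricting the comparison to the key’s own day mirrors the article’s point that IDs are matched only against identifiers captured during the period the key covers.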

At no time does the server know anyone’s name or location, just a set of randomly generated encryption keys. It doesn’t even receive the exact Bluetooth beacon IDs, which might let someone identify you from public spaces. In fact, your phone never sends any data to the server unless you prove to the app that you tested positive for COVID-19. Even if a hacker or overzealous government agency were to take over the server, they couldn’t identify the users. Because your phone dumps all keys more than 14 days old, even cracking your phone would reveal little long-term information.

In reality, there would be more than one server, and the process is more complicated. This is a broad outline that shows how Apple and Google are building privacy in from the very beginning to avoid the kinds of mistakes made by TechCo.

Apple claims to respect user privacy, and my experience indicates that’s true. I’m much more willing to trust a system developed by Apple than one created by any other company or government. It’s not that another company or government would be trying to abuse user privacy; it’s just that outside of Apple, too many organizations either lack the understanding of what it means to bake privacy in from the start or have competing interests that undermine efforts to do the right thing.

David Shayer was an Apple software engineer for 18 years. He worked on the iPod, the Apple Watch, and Apple’s bug-tracking system Radar, among other projects.