#1692: Stolen Device Protection, AI voice scams, Apple and the EU’s DMA, the Mac’s 40th anniversary

The big news making the rounds is Apple’s bitter announcement about how it will comply with the European Union’s Digital Markets Act. Josh Centers rejoins us to look at what it entails and Apple’s grudging reaction. On the practical side, Adam Engst explains iOS 17.3’s new Stolen Device Protection, how to turn it on, and who might not want to use it. Glenn Fleishman reminds us about the increasing prevalence of AI-driven phone scams and how you can ensure your family won’t fall prey. Finally, the Mac celebrated its 40th anniversary last week, so we link to a collection of sites offering trips down memory lane. Notable Mac app releases this week include 1Password 8.10.24, BusyCal 2024.1.1 and BusyContacts 2024.1.1, Fantastical 3.8.10 and Cardhop 2.2.15, Hazel 5.3.2, and Zoom 5.17.5.

Turn On Stolen Device Protection in iOS 17.3

As promised, Apple built the new Stolen Device Protection technology into the just-released iOS 17.3. It offers optional protection against a particularly troubling form of attack brought to light by reporters Joanna Stern and Nicole Nguyen of the Wall Street Journal in a handful of articles and videos (see our “iPhone Passcode Thefts” series).

In short, a thief would discover the victim’s iPhone passcode by shoulder surfing, surreptitious filming, or social engineering, then grab the iPhone and run. In some cases, criminals drugged, threatened, or attacked people to extract the passcode. Soon after, the thief would use the passcode to change the victim’s Apple ID password, lock them out of their account, and use apps and data on the iPhone to steal money, order goods, and generally wreak havoc.

The attacks worked because Apple had made resetting an Apple ID password easy for those who could only remember their passcodes. Many people forget their Apple ID passwords, so Apple decided it was worth trading some security for allowing people to recover from a forgotten password easily. It also undoubtedly reduced Apple’s customer service overhead by providing a self-service option for resetting Apple ID passwords. Unfortunately, whenever there’s a loophole or backdoor, criminals will eventually find it.

Happily, Apple now lets us eliminate that security hole with Stolen Device Protection for iPhone. It’s not available for the iPad or the Mac. Apple hasn’t explained why, of course, but there are two possibilities for the iPad. Apple may be planning to add the feature to the iPad in a future update—the iPad often lags behind the iPhone—or the company may feel that iPad users are unlikely to be targeted similarly. (Many of the reported iPhone passcode thefts took place in bars where victims weren’t paying close attention and may have been impaired by alcohol.) Macs seem even less likely to be targeted, given the additional difficulty of discovering a login password.

Stolen Device Protection Details

Here’s what happens when you turn on Stolen Device Protection. Everything works as before when you’re in a familiar location, such as home, work, or anywhere else the on-device Significant Locations system has determined you frequently use your iPhone. You can change your Apple ID password, turn off Find My, access passwords in Keychain, and much more with no new requirements.

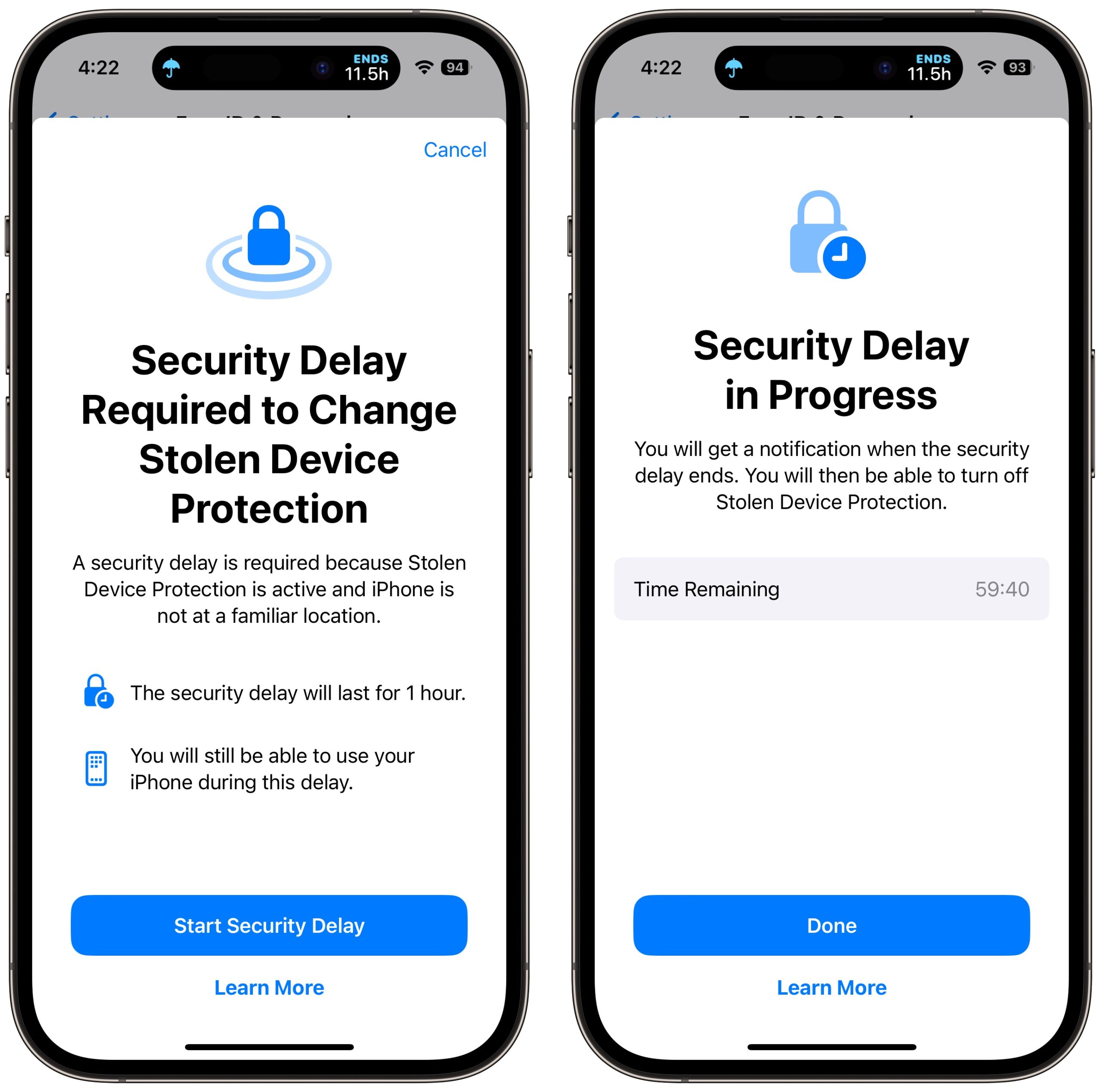

However, whenever you’re somewhere deemed unfamiliar, critical changes to your account or device require Face ID or Touch ID authentication, with no passcode alternative or fallback. The most important security actions also require a delay of an hour—shown with a countdown timer—before you perform a second biometric authentication. This delay reduces the chances of an attacker forcing you to authenticate with the threat of violence.

Apple says you must employ Face ID or Touch ID authentication in unfamiliar locations to:

- Use passwords or passkeys saved in Keychain

- Use payment methods saved in Safari (autofill)

- Turn off Lost Mode

- Erase all content and settings

- Apply for a new Apple Card

- View an Apple Card virtual card number

- Take certain Apple Cash and Savings actions in Wallet (for example, Apple Cash or Savings transfers)

- Use your iPhone to set up a new device (for example, Quick Start)

Notably, you can still use the iPhone passcode for in-person purchases made with Apple Pay, which remains a slight vulnerability. Apple likely felt that it would be too annoying to have a Face ID or Touch ID failure while attempting to pay for something at a store and not be able to fall back on the passcode.

You can also turn off Significant Locations with a passcode fallback after a biometric authentication failure, but all that does is eliminate familiar locations as a way of sidestepping biometrics. (Apple claims you must have Significant Locations enabled to use Stolen Device Protection, but that doesn’t seem to be true, and deactivating it doesn’t turn off the theft protection feature.)

Apple lays out which actions require the hour-long security delay and a second biometric authentication. These include when you want to:

- Change your Apple ID password (Apple notes this may prevent the location of your devices from appearing on iCloud.com for a while)

- Sign out of your Apple ID

- Update Apple ID account security settings (such as adding or removing a trusted device, Recovery Key, or Recovery Contact)

- Add or remove Face ID or Touch ID

- Change your iPhone passcode

- Reset All Settings

- Turn off Find My

- Turn off Stolen Device Protection

The security delay may end before the hour elapses if your iPhone detects that you’ve moved to a familiar location. In other words, you can short-circuit it by going home.
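To make the two tiers easier to keep straight, here is a minimal sketch in Python that models the rules as described above. It is only an illustration of the policy, not Apple's implementation: the action labels, the `Requirement` enum, and the `requirement_for` function are all hypothetical names invented for this example.

```python
from enum import Enum


class Requirement(Enum):
    """What the iPhone asks for beyond its pre-iOS 17.3 behavior."""
    NONE = "no new requirement"
    BIOMETRIC = "Face ID or Touch ID, with no passcode fallback"
    BIOMETRIC_WITH_DELAY = "biometric, one-hour delay, then a second biometric"


# Hypothetical labels for the actions in the first list (biometric only).
BIOMETRIC_ONLY_ACTIONS = {
    "use_keychain_password",
    "use_safari_payment_method",
    "turn_off_lost_mode",
    "erase_all_content",
    "apply_for_apple_card",
    "view_apple_card_number",
    "apple_cash_transfer",
    "set_up_new_device",
}

# Hypothetical labels for the actions in the second list (security delay).
DELAYED_ACTIONS = {
    "change_apple_id_password",
    "sign_out_of_apple_id",
    "update_account_security",
    "change_biometrics",
    "change_passcode",
    "reset_all_settings",
    "turn_off_find_my",
    "turn_off_stolen_device_protection",
}


def requirement_for(action, protection_on, familiar_location):
    """Return the extra requirement for an action under the rules above."""
    # In a familiar location, or with the feature off, nothing changes.
    if not protection_on or familiar_location:
        return Requirement.NONE
    if action in DELAYED_ACTIONS:
        return Requirement.BIOMETRIC_WITH_DELAY
    if action in BIOMETRIC_ONLY_ACTIONS:
        return Requirement.BIOMETRIC
    # Anything else, such as an in-person Apple Pay purchase,
    # still allows the passcode fallback.
    return Requirement.NONE


print(requirement_for("turn_off_find_my", True, False).value)
print(requirement_for("turn_off_find_my", True, True).value)
```

The early return for familiar locations mirrors the way going home short-circuits the delay. The sketch does not model the countdown timer itself.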

The fact that turning off Stolen Device Protection requires a security delay and biometric authentication means that you should be careful to turn it off before selling, giving away, or trading in your iPhone. Once it’s out of your physical control, it won’t be possible for anyone else to reset it.

Turn on Stolen Device Protection

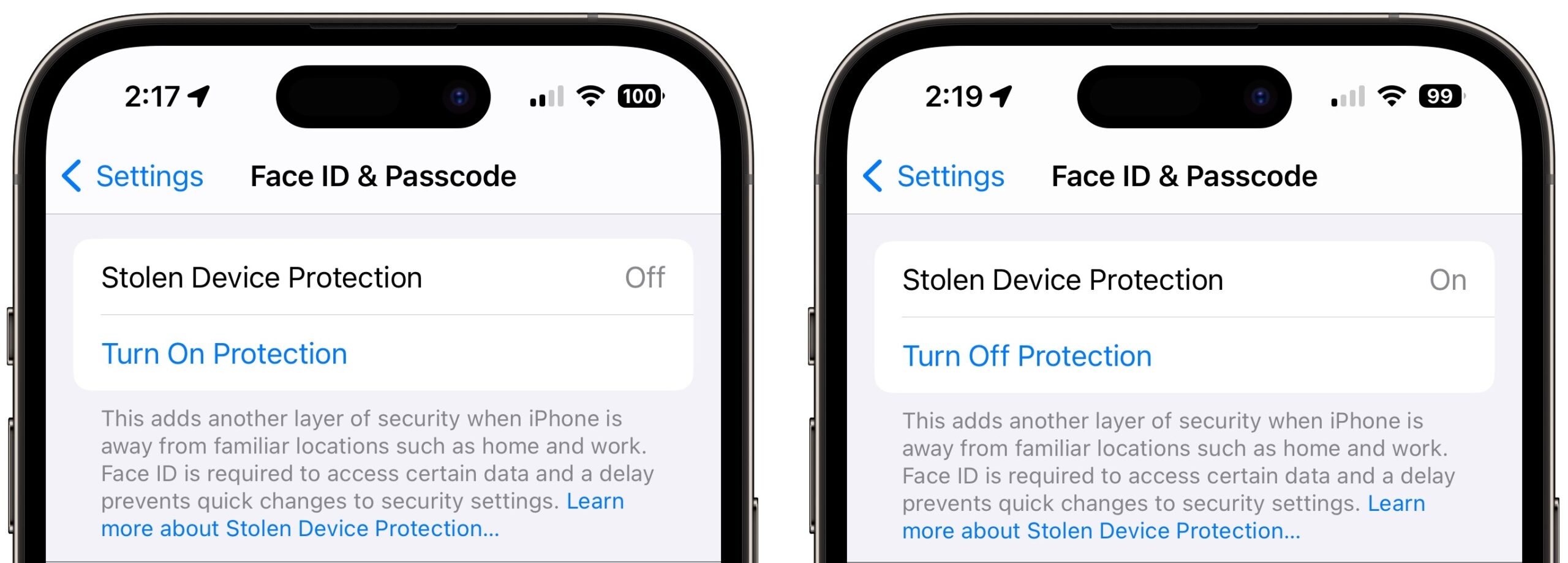

Turning Stolen Device Protection on is easy, and I recommend that everyone using Face ID or Touch ID do so. Go to Settings > Face ID/Touch ID & Passcode, enter your passcode, and tap Turn On Protection. (If it’s enabled, tap Turn Off Protection to remove its additional safeguards.)

Stolen Device Protection does have a handful of requirements. Apple says you must:

- Be using two-factor authentication for your Apple ID (at this point, nearly everyone is)

- Have a passcode set up for your iPhone

- Turn on Face ID or Touch ID

- Enable Find My

- Turn on Significant Locations (Settings > Privacy & Security > Location Services > System Services > Significant Locations), although this doesn’t seem to be required

Put bluntly, I can think of no good reason to avoid having all these required features enabled, anyway! With one exception, all increase your security with no privacy downside due to Apple’s careful design and end-to-end encryption. In particular, anyone who believes Apple’s biometric systems are less secure or private than a passcode is wrong and is putting themselves at risk.

The exception is Significant Locations because it displays the most recent significant location to anyone with the passcode. That makes possible—if not necessarily easy—the scenario of a thief learning your passcode, stealing your iPhone, and then going to the most recent significant location to turn off Stolen Device Protection. You would likely have more time to lock the iPhone remotely, however.

Some individuals have trouble with biometric authentication, Touch ID more so than Face ID. The inability of every iPhone user to rely on biometric authentication is one big reason why Apple made Stolen Device Protection optional. If you’re in that group, Stolen Device Protection would be problematic because it will require biometric authentication in unfamiliar locations. If you were on a trip, for instance, Stolen Device Protection and the inability to authenticate with Face ID or Touch ID would prevent you from using passwords in Keychain.

I turned on Stolen Device Protection and triggered it by turning off Significant Locations and trying to turn off various security settings. Each time, I was met with a warning dialog and a security delay. Turning the iPhone off and back on merely stopped the security delay, forcing me to restart it. When it finally expires—an hour is a long time when you’re testing!—iOS alerts you to that fact. You can then authenticate again and perform any of the previously restricted actions.

Let me leave you with one final piece of advice. It may take the criminal underworld some time before it’s common knowledge that iPhone passcode theft may no longer work, and of course, it will continue to work against those who don’t upgrade to iOS 17.3 and turn on Stolen Device Protection. So the best thing you can do to discourage possible iPhone thefts—even if they can’t ruin your digital life—is what I’ve been saying all along: Never enter your iPhone passcode in public.

How To Avoid AI Voice Impersonation and Similar Scams

AI voice impersonation puts a new twist on an old scam, and you and your family need to be prepared. Forewarned is forearmed when criminals can take snippets of online audio and use increasingly widely available tools to make a sufficiently convincing AI voice, bolstered by claims to be on a poor phone connection.

I encourage you to have conversations among your family, at least—but maybe also within your company or social groups—so everyone is aware that these kinds of scams are taking place. The best defense relies on a shared secret password or other information only you and the purported caller would know.

How the Scam Works

The kind of fraud related to AI voice impersonation is typically called the “grandparent scam.” It works like this: The phone rings in a grandparent’s home, usually early in the morning or late at night. They answer, and it’s one of their grandchildren saying they have been in an accident, arrested, or robbed. They need money—fast. The connection is often poor, and the grandchild is in distress.

“Is this really Paolo? It doesn’t sound quite like you.”

“Grandma, it’s me. I’m on that trip to Mexico I told you about, and thieves stole my wallet and phone! Can you wire me some money so I can get a new phone?”

The grandparent leaps to help by running to a Walmart or Western Union—or even withdrawing cash from a bank and handing it off to a “courier” who arrives at their home. And their money is off to a scammer.

The FBI says this particular scam first reared its head in 2008, likely because of the confluence of inexpensive calls from anywhere, the ease of transferring money worldwide instantly, and social media making it easier for fraudsters to discover facts about people that let them make plausible assertions.

Of course, this scam doesn’t always involve grandparents, and I don’t mean to imply that older people are more easily fooled. Scammers also target children, parents, distant relatives, friends, neighbors, and co-workers. Sometimes the caller claims to be a police officer, a doctor, or, ironically, an FBI agent.

The key element of the scam is that a close family member, friend, or colleague is in dire need. The purported urgency and potential threat against the caller’s freedom or ability to return home override the normal critical thinking most people would bring to bear. The scammers also often call in the middle of the night when we’re less likely to be alert, or attempt to take advantage of cognitive declines or hearing problems in older family members.

The AI voice impersonation aspect adds a “future shock” element: none of us are prepared for a call from a thoroughly convincing version of someone we know well. It’s already happening. The FTC highlighted it in a post in March 2023. And you can find news accounts around the country, meaning it’s just a slice of the whole scam ham: the Washington Post on a Canadian fraud, March 2023; Good Morning America, June 2023; New Mexico, September 2023; and San Diego, November 2023; just for instance. Posts on forums abound, too, like Reddit.

Services arose last year that can produce a credible voice impression from fairly small amounts of audio, notably ElevenLabs. The AI voice is eerily accurate when seeded with a few minutes of audio. It doesn’t cost much to generate a voice clone, and many services have few or no safeguards to prevent misuse, a variety of which happened almost immediately. The FTC is soliciting proposals on how to identify and deter voice cloning fraud.

It’s unclear how the scammers acquired a recording of the person’s voice in some cases. Obviously, if someone is a podcaster or appears in YouTube or TikTok videos, those are likely sources. But it’s also plausible that a generic voice of the right age and accent could fool people. There has been at least one outlier, too: a caller, who had to be an AI, tried to scam a grandmother in Montreal in part by dropping in Italian nicknames, like calling her Nonna. That’s uncanny and would have required a high degree of research for a relatively small financial gain.

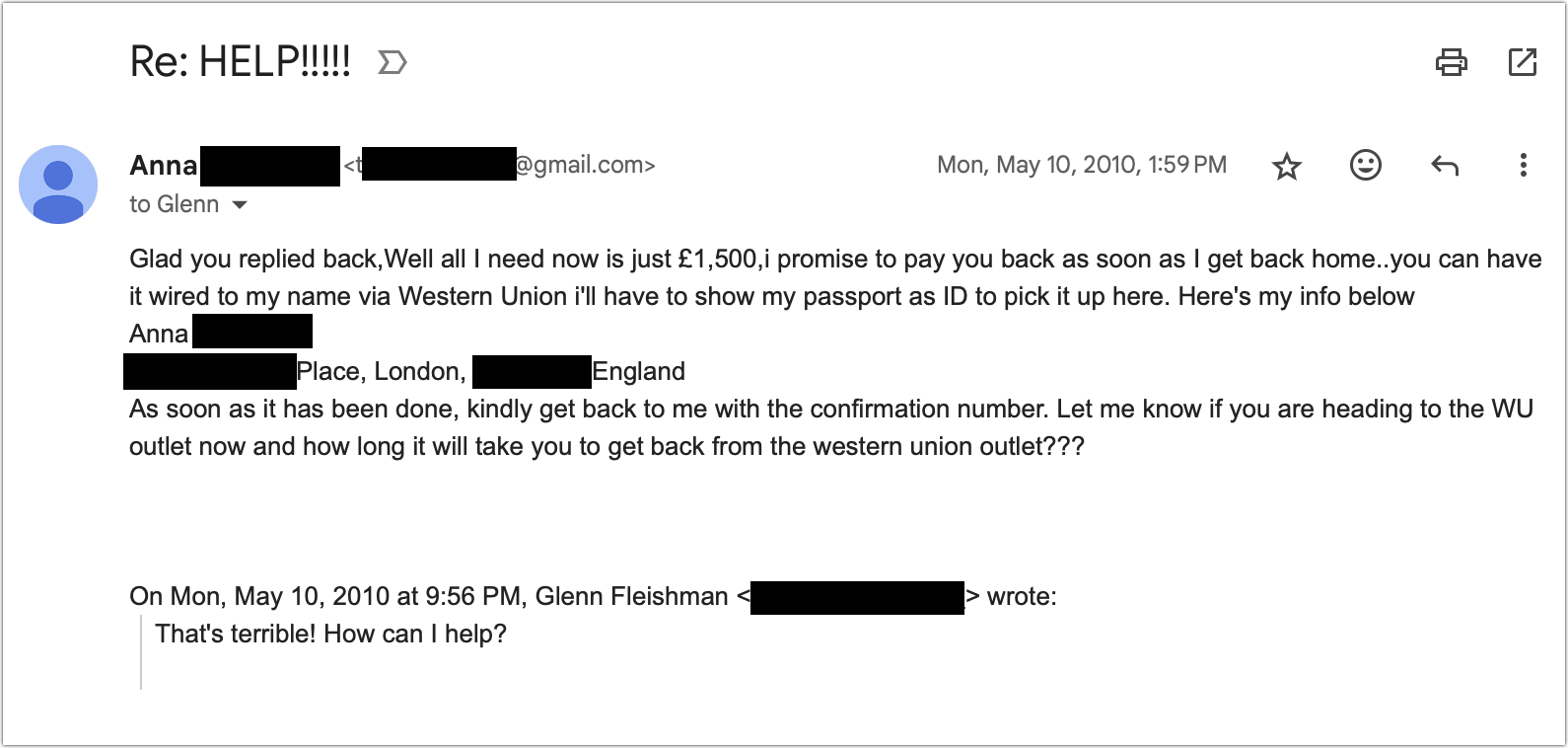

These scams can also come in by email, though they are often far less convincing due to less of a feeling of urgency, as well as issues with diction, spelling, and other written factors. I received this email in 2010 from my “friend” Anna. The real Anna was someone I knew and liked but wasn’t close enough to for her to ask me to borrow a pile of money, and she was well-spoken and careful with words in my interactions with her. The first email was brief; I replied and got what you see below. It’s not convincing—but that was before we had generative AI penning such letters.

The Robots Are Coming for Your Money

You can beat most of these scams with a shared password among family, friends, or co-workers. As with most aspects of verification, the process requires an out-of-band channel for information. Essentially, you want to provide a secret or details over a communication method that isn’t the same one you’re securing with that information. (We write about the out-of-band issue so much at TidBITS, we should form a musical group called “Out of Band.”)

Your group should set up a password for situations where you need to confirm identities over the phone. Let’s say it’s “raspberry beret.” If you get a call from a loved one in trouble, you can say, “Look, there are scams, and we talked about this—what’s the password?” The password should be something familiar that’s easily remembered but not associated with you online. A family joke can be good. You don’t have to be strict about them getting it perfect—you’re not verifying their nuclear launch code authority.

If the caller can’t remember the password, dig deeper and ask for personal facts—they should be readily available to a person you know well. “Do you remember what Uncle Don told you?” “No, I don’t remember what he said.” “You don’t have an Uncle Don.” (As a result of researching this article, my family now has a password.)

You can also identify and defeat these scams through simple means:

- While stalling the caller (pretend to be sleepy or confused), surreptitiously text the person at their known phone number to confirm their situation.

- Say you’ll call them back, then call the person’s known phone number. A criminal will likely claim that their phone was stolen or not working. In many of the stories linked above, when the scam failed, it was because the victim placed a call or sent a text.

- Try to get them to switch to a video call “like you always do.” So far, AI isn’t good enough to provide live video impersonation of an arbitrary person.

- Call or text a relative or other person in common who might be more informed about the alleged caller’s location if they can’t be reached immediately.

- Call a police station where the person claims to be calling from.

I also hope all TidBITS readers know this, but police departments and lawyers never ask for funds to be wired through Western Union, Walmart or Amazon gift cards, Bitcoin, or the like; they don’t demand the money be sent immediately; they don’t make threats; and they don’t tell you not to call anyone to confirm the facts. While behavior like that should cause even the most trusting person to take notice, we’ll rarely be in these situations in our lives, so we can be taken unaware. It’s essential to consider the possibilities ahead of time to establish the pathways you’ll need to defeat scams under pressure.

I mentioned earlier that email can be much less convincing, using the example of my friend-of-a-friend’s hijacked email account and message. So I asked ChatGPT to compose an email to my grandmother telling her I was robbed in Mexico and my cameras were stolen. The first try was stilted, so I told it to use simpler, less formal language. On the second try, it came up with what could be a convincing message with no red flags:

Subject: Need Help Grandma! Got Robbed in Mexico 😟

Hey Grandma,

Hope you’re doing okay! So, guess what? I’m in Mexico right now, but something crazy happened. I got robbed! Yep, they took my cameras and some other stuff. But don’t worry, I’m totally fine, just a bit shaken up.

I really need your help with this. Can we talk about it soon?

Miss you and can’t wait to see you!

[Your Name]

No, ChatGPT, no! Generative AI assistance can easily eliminate the usual unconvincing use of language, idiom, and tone that characterize most “grandparent scam” attempts. Imagine an email interchange or live chat with someone who sounds like someone you know and love, trust, or work with, and who can answer a lot of basic questions. That’s why you must be ready with a shared password, backchannel confirmation, or video chat.

The EU Forces Open Apple’s Walled Garden

Apple has announced extraordinary changes to how iOS apps will be distributed in the European Union. In short, Apple’s App Store will no longer be the only source for iOS apps, and the company is making it known in the clearest possible terms that it’s not happy about it.

Apple’s changes were forced by the European Commission’s Digital Markets Act (DMA), passed by the European Parliament in 2022. Under the DMA, Apple is considered a “gatekeeper” of “core platform services.” As such, Apple cannot prevent its users from doing business with third parties on that platform, nor can Apple give preferential treatment to its own services.

To be fair, the DMA exists because the EU wants to protect its smaller businesses and users from abuses by entrenched tech giants running large online platforms. Whether the DMA’s requirements are the best way to accomplish those goals is a conversation worth having, but it’s undeniable that some large online platforms have behaved in anticompetitive and monopolistic ways that harm users and smaller businesses.

Here is a brief summary of what EU iPhone users can expect in the upcoming iOS 17.4 release, anticipated sometime in March 2024:

- Alternative app marketplaces: Developers will be able to create and run “alternative app marketplaces.” Don’t call them app stores! AltStore, a gray market alternative app store, has announced plans to launch an official version in the EU. We would be surprised if Apple-nemesis Epic Games doesn’t try to launch its own alternative store.

- Alternative payment providers: Developers will be able to offer in-app purchases without going through Apple’s system. They can either link to their own websites for payment or support alternative payment methods like PayPal.

- Browser choice: Safari will no longer be the automatic default Web browser. After installing iOS 17.4, users will have to pick a default browser from a list of options.

- WebKit-free web browsers: Apple has long allowed Web browsers other than Safari in the App Store and has even allowed you to set one as a default, but it was a somewhat meaningless choice since those browsers—like Chrome and Firefox—had to use the same WebKit rendering engine as Safari. So you were really just using Safari with a different skin. Google will now be able to provide an EU version of Chrome that uses its Blink rendering engine, and Firefox can ship a version with its Gecko engine.

- Contactless payments without the Wallet app: It will be possible for developers to access the iPhone’s NFC hardware directly to make contactless payments without going through Apple’s Wallet app. Additionally, Apple will provide an “interoperability request form” where developers can request access to other hardware and software features.

- Expanded data portability: EU users will have increased options on Apple’s Data and Privacy site to see their App Store data and export it to authorized third parties.

Note that these changes do not allow sideloading apps downloaded directly from websites, as Mac users have been accustomed to for decades. Every app must still be downloaded from an app marketplace, whether Apple’s App Store or someone else’s. However, you’ll download those alternative app marketplaces from the Web: “Marketplace apps may only be installed from the marketplace developer’s website.”

Also, as John Gruber of Daring Fireball points out, many of these changes, such as alternative app marketplaces and non-WebKit Web browsers, are coming only to the iPhone and not the iPad.

Apple Doth Protest

If this all seems very confusing, that’s at least partially intentional on Apple’s part. The company was emphatic about how it is complying with the new EU requirements only under duress.

Apple’s announcement is so bitter that we assume the PR team was biting into whole grapefruits while writing it. The press release laments:

The new options for processing payments and downloading apps on iOS open new avenues for malware, fraud and scams, illicit and harmful content, and other privacy and security threats.

The announcement also quotes Apple Fellow Phil Schiller, in his best “disappointed dad” voice:

The changes we’re announcing today comply with the Digital Markets Act’s requirements in the European Union, while helping to protect EU users from the unavoidable increased privacy and security threats this regulation brings. Our priority remains creating the best, most secure possible experience for our users in the EU and around the world.

Phil Schiller isn’t mad at you, EU. He’s just… disappointed.

For years, Apple has argued that its heavy-handed App Store approach was the only way to keep its platforms secure (while guaranteeing itself a nice cut of developer revenue in exchange for developing and maintaining the platform). But as Rich Mogull correctly foresaw in “Apple’s App Store Stubbornness May Be iOS’s Greatest Security Vulnerability” (8 April 2022), that same heavy-handed approach backfired and inspired the EU to go after Apple’s walled garden with a crowbar.

Apple may be exaggerating for effect, but it’s not wrong. These changes will make Apple’s platforms less secure going forward; it’s just a matter of to what degree, which is why the company is taking every conceivable measure to protect users.

How Apple Plans to Protect Users

Just because apps can be distributed outside Apple’s walled garden doesn’t mean they will escape Apple’s review.

iOS apps distributed outside of the App Store will be notarized by Apple, much like how apps are notarized in macOS. This step gives Apple a lever to prevent or ban misbehaving apps. However, there are a couple of key differences.

- While macOS offers a way to bypass notarization so you can install any app you’d like, Apple does not provide this as an option for iOS.

- Notarization under macOS is largely automatic, but the iOS process will involve some degree of human review.

That human review checks to “ensure apps are free of known malware, viruses, or other security threats, function as promised, and don’t expose users to egregious fraud.” However, according to John Gruber, who has had multiple briefings with Apple, apps distributed through app marketplaces will not be rejected for content. For example, while Apple will never allow adult-rated apps in the App Store, they will be permissible in app marketplaces.

Besides a baseline app review, iOS will present an app installation sheet that summarizes basic information about the app when you install an app from an app marketplace. Also, Apple will perform background malware scans when installing such apps.

Additionally, alternative app marketplaces are subject to stringent “ongoing requirements.” Not just anyone can open an app marketplace. Among other things, developers need a healthy line of credit:

In order to establish adequate financial means to guarantee support for developers and customers, marketplace developers must provide Apple a stand-by letter of credit from an A-rated (or equivalent by S&P, Fitch, or Moody’s) financial Institution of €1,000,000 prior to receiving the entitlement. It will need to be auto-renewed on a yearly basis.

Of course, Apple touts these many security measures with yet another bitter disclaimer:

However, Apple has less ability to address other risks — including apps that contain scams, fraud, and abuse, or that expose users to illicit, objectionable, or harmful content. In addition, apps that use alternative browser engines — other than Apple’s WebKit — may negatively affect the user experience, including impacts to system performance and battery life.

And developers may have a bitter pill to swallow if they decide to accept the new path the EU has forged for them.

Thorny Questions for Developers

Apple is giving developers two mutually exclusive choices. They can take the blue pill and keep things largely as they are now. Developers distribute their apps only through the App Store, use Apple as their payment processor, rely only on the WebKit rendering engine, and pay Apple a straight 30% or 15% commission.

However, developers who choose the red pill open a whole new world of alternative app marketplaces, third-party payment processors, and other freedoms, but they also face a complicated new business reality. And there is no going back:

Developers who adopt the new business terms at any time will not be able to switch back to Apple’s existing business terms for their EU apps. Apple will continue to give developers advance notice of changes to our terms, so they can make informed choices about their businesses moving forward.

If developers choose the new business terms, they can keep their apps on the App Store in addition to alternative app marketplaces and pay lower commissions of either 10% or 17%. That sounds good, but the math gets more complicated. If developers also want to use Apple’s payment processor, they will pay an additional 3% fee. Additionally, if an app is a hit, with one million or more first annual installs in the EU, the developer will pay a Core Technology Fee of €0.50 for each additional install per Apple account (which includes new downloads, re-downloads, and updates) once every 12 months. Free apps from nonprofit organizations, academic institutions, and government entities are exempt from this fee.

The new business terms also allow developers to opt out of Apple’s systems in the EU entirely and pay the company only the Core Technology Fee, but then they’re on their own for distribution, payments, and other liabilities associated with EU law.

Apple has created a fee calculator to help with the complicated math, but developers may not like the results. Developer Nikita Bier ran some numbers and estimates that some developers may only keep a small fraction of their revenue or even owe Apple money.
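To see how a developer could end up owing Apple money, here is a rough sketch of the arithmetic under the new terms as summarized above. It is not Apple's fee calculator. The function name, parameters, and the `ctf_exempt` flag are hypothetical, and it assumes that both the commission and the 3% payment processing fee apply to the same gross revenue figure and that the Core Technology Fee applies only to first annual installs beyond one million. Amounts are in euro cents to avoid rounding surprises.

```python
CTF_THRESHOLD_INSTALLS = 1_000_000   # first annual installs before the fee applies
CTF_CENTS_PER_INSTALL = 50           # EUR 0.50 per install over the threshold
PAYMENT_PROCESSING_PERCENT = 3       # extra fee for using Apple's payment processor


def estimate_new_terms_fees(revenue_cents, first_annual_installs,
                            commission_percent, uses_apple_payments,
                            ctf_exempt=False):
    """Estimate yearly fees owed to Apple, in euro cents (a sketch only)."""
    if commission_percent not in (10, 17):
        raise ValueError("the article mentions commissions of 10% or 17%")

    # Assumption: commission and processing fee use the same revenue base.
    commission = revenue_cents * commission_percent // 100
    processing = 0
    if uses_apple_payments:
        processing = revenue_cents * PAYMENT_PROCESSING_PERCENT // 100

    # Core Technology Fee applies only to installs beyond the threshold.
    extra_installs = max(0, first_annual_installs - CTF_THRESHOLD_INSTALLS)
    core_technology_fee = 0 if ctf_exempt else extra_installs * CTF_CENTS_PER_INSTALL

    total = commission + processing + core_technology_fee
    return {
        "commission": commission,
        "processing": processing,
        "core_technology_fee": core_technology_fee,
        "total": total,
        "developer_keeps": revenue_cents - total,
    }


# A hit free app with no revenue and two million first annual installs.
free_hit = estimate_new_terms_fees(0, 2_000_000, 10, False)
print(free_hit["developer_keeps"] / 100)  # negative: the developer owes Apple
```

In this sketch, a free app with two million first annual installs and no revenue owes €500,000 in Core Technology Fees, which is the kind of outcome that worries developers of popular free or low-revenue apps.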

Relaxed Game Streaming and Sign In with Apple Rules

There are changes for everyone outside the EU as well.

First, Apple now allows game-streaming apps in the App Store. Services like Xbox Cloud Gaming and Nvidia’s GeForce Now let you play hardware-intensive games without high-end hardware. The game’s code is processed on a remote server, and the video is streamed to your device. This move likely means many more gaming options will soon be in the App Store. (Hopefully, some of these services will come to the Apple TV.)

Second, developers can now offer apps that feature “mini-apps, mini-games, chatbots, and plug-ins” for an additional in-app purchase.

Third, in the past, if an app offered the option to sign in with a Google account or other third-party authentication service, it also had to present the option to use Apple’s service. That’s technically no longer the case. Developers can also now offer “third-party or social login services” in their apps without being forced to include the Sign In with Apple option, but they must offer “an equivalent privacy-focused login service instead.” That may be a tough hurdle to clear for many developers, so the previous policy may effectively remain.

The EU as App Store Guinea Pig

Apple is overwhelmingly clear about the extent to which it feels these requirements are wrong-headed and will hurt the user experience. The company likely fears, and rightly so, that other jurisdictions around the world will demand similar concessions. After all, the infrastructure is already in place. Or, even worse, other countries might demand different concessions.

The EU has made itself a testbed for this type of governmental intervention. While Apple is begrudgingly complying, it clearly hopes—and is doing its best to ensure—that developers stick with the status quo. Apple’s announcement takes pains to highlight every possible negative of these changes, including security risks, fraud concerns, offensive content, user confusion, and reduced battery life. And you can be sure Apple will be quick to point out every such failure and explain how it could have been prevented if the EU hadn’t tied its hands.