#1487: iOS and iPadOS 13.1.3, Catalina Supplemental Update, why iOS 13 and Catalina are so buggy, USB storage in iOS 13, Sidecar in Catalina

The updates keep coming. Apple has now updated iOS and iPadOS to version 13.1.3 and issued a supplemental update for macOS 10.15 Catalina to squash bugs in those releases. If you have upgraded, Josh Centers answers your questions about using USB storage with iOS 13, and Julio Ojeda-Zapata explains how to use Catalina’s Sidecar feature, which turns your iPad into a secondary Mac screen. Finally, former Apple software engineer David Shayer joins us this week to explain why Apple’s new operating systems are so buggy. Notable Mac app releases this week include BBEdit 13.0.1, Microsoft Office for Mac 16.30, Tinderbox 8.1, Audio Hijack 3.6.1, and DEVONagent 3.11.2.

iOS 13.1.3, iPadOS 13.1.3, and Catalina Supplemental Update Tackle Bugs

Apple has released iOS 13.1.3, iPadOS 13.1.3, and macOS Catalina 10.15 Supplemental Update to address a wide range of bugs that have plagued the new operating systems. These are purely bug-fix releases without any new features or even CVE entries.

iOS 13.1.3

The iOS 13.1.3 update offers fixes for:

- iPhones not ringing or vibrating when receiving a call

- Not being able to open meeting invitations in Mail

- Incorrect information being displayed in the Health app after clocks adjust for daylight saving time

- Apps and Voice Memo recordings not downloading after restoring from an iCloud backup

- Apple Watches not pairing successfully or failing to receive notifications

- Bluetooth unexpectedly disconnecting on some vehicles

- Connection problems for Bluetooth headsets and hearing aids

- Launch performance problems with Game Center apps

You can get the update, which weighs in at 110 MB on an iPhone X, in Settings > General > Software Update, through iTunes in macOS prior to 10.15 Catalina, or in the Catalina Finder.

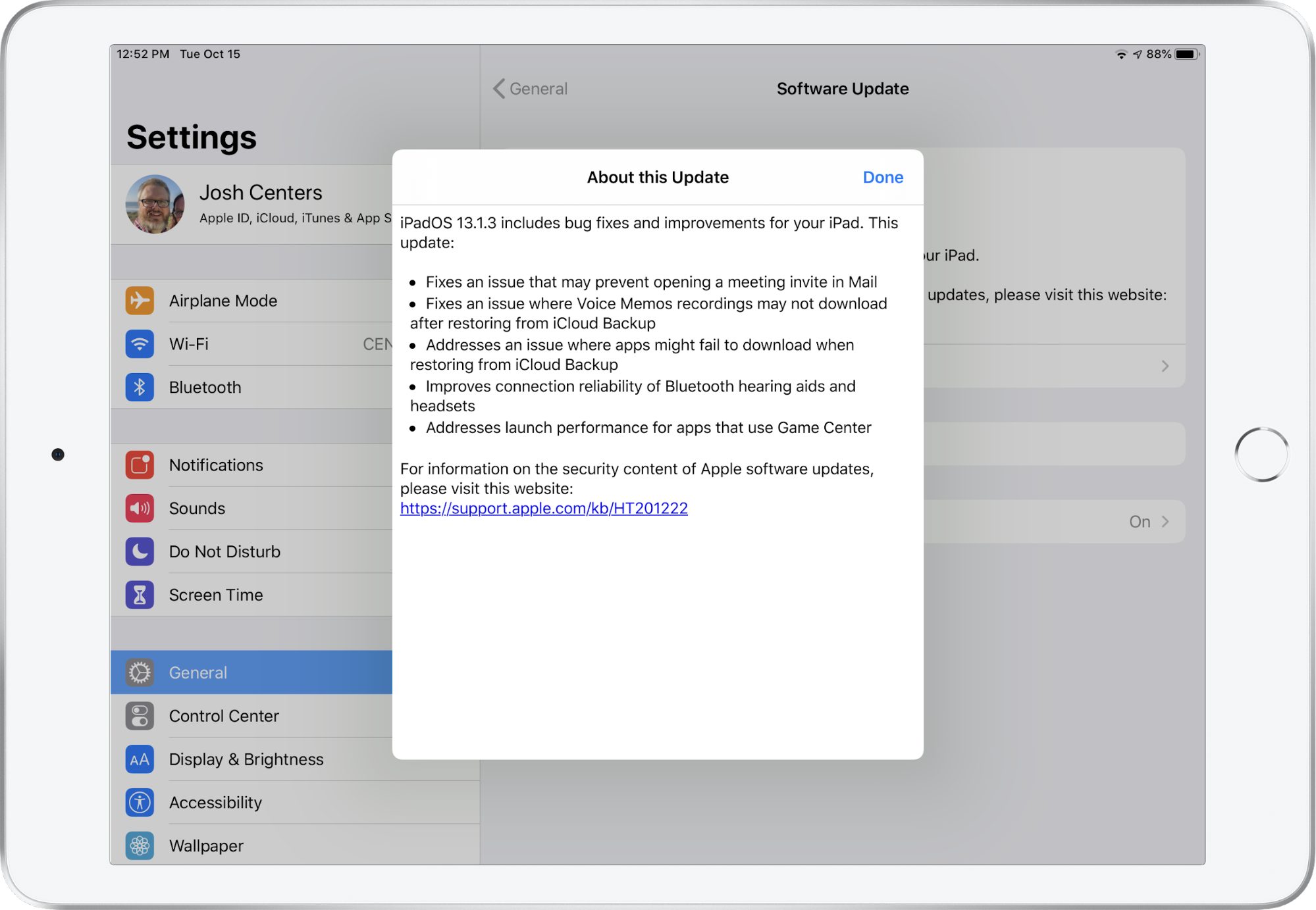

iPadOS 13.1.3

The iPadOS 13.1.3 update features a subset of the iOS 13.1.3 fixes:

- Meeting invitations should open in Mail more reliably

- Apps and Voice Memo recordings now download properly after restoring from an iCloud backup

- Improved Bluetooth headset and hearing aid reliability

- Faster launch performance for Game Center apps

You can get the update, which is a 73.9 MB download on a 10.5-inch iPad Pro, in Settings > General > Software Update, through iTunes in macOS prior to 10.15 Catalina, or in the Catalina Finder.

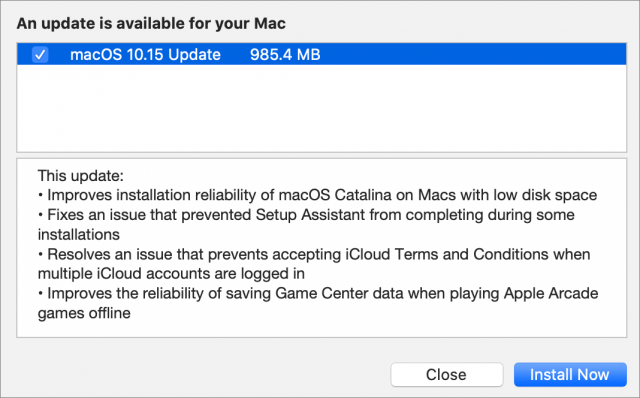

macOS Catalina Supplemental Update

The macOS Catalina Supplemental Update also addresses a handful of notable problems. It provides:

- Improved installation reliability when installing Catalina on a Mac with low disk space

- A fix for an issue that prevented Setup Assistant from completing—quite a few people ran into this bug

- A fix for an iCloud bug that prevented you from accepting the Terms and Conditions when multiple accounts were logged in

- Enhanced reliability of saving Game Center data when playing Apple Arcade games offline

You can install the 985.4 MB update from System Preferences > Software Update. If you’re still running 10.14 Mojave or an earlier version, we’re standing by our recommendation that you hold off upgrading to Catalina for now.

Six Reasons Why iOS 13 and Catalina Are So Buggy

iOS 13 and macOS 10.15 Catalina have been unusually buggy releases for Apple. The betas started out buggy at WWDC in June, which is not unexpected, but even after Apple removed some features from the final releases in September, more problems have forced the company to publish quick updates. Why? Based on my 18 years of experience working as an Apple software engineer, I have a few ideas.

Overloaded Feature Lists Lead to Schedule Chicken

Apple is aggressive about including significant features in upcoming products. Tight schedules and ambitious feature sets mean software engineers and quality assurance (QA) engineers routinely work nights and weekends as deadlines approach. Inevitably some features are postponed for a future release, as we saw with iCloud Drive Folder Sharing.

In a well-run project, features that are lagging behind are cut early, so engineers can devote their time to polishing the features that will actually ship. But sometimes managers play “schedule chicken” since no one wants to admit in the departmental meeting that their part of the project is behind. Instead, they hope someone else working on another aspect of that feature is running even later, so they reap the benefit of the feature being delayed without taking the hit of being the one who delayed it. But if no one blinks, engineers continue to work on a feature that can’t possibly be completed in time and that eventually gets pushed off to a future release.

Apple could address this scheduling problem by not packing so many features into each release, but that’s just not the company culture. Products that aren’t on a set release schedule, like the AirPods or the rumored Bluetooth tracking tiles, can be delayed until they’re really solid. But products on an annual release schedule, like iPhones and operating systems, must ship in September, whatever state they’re in.

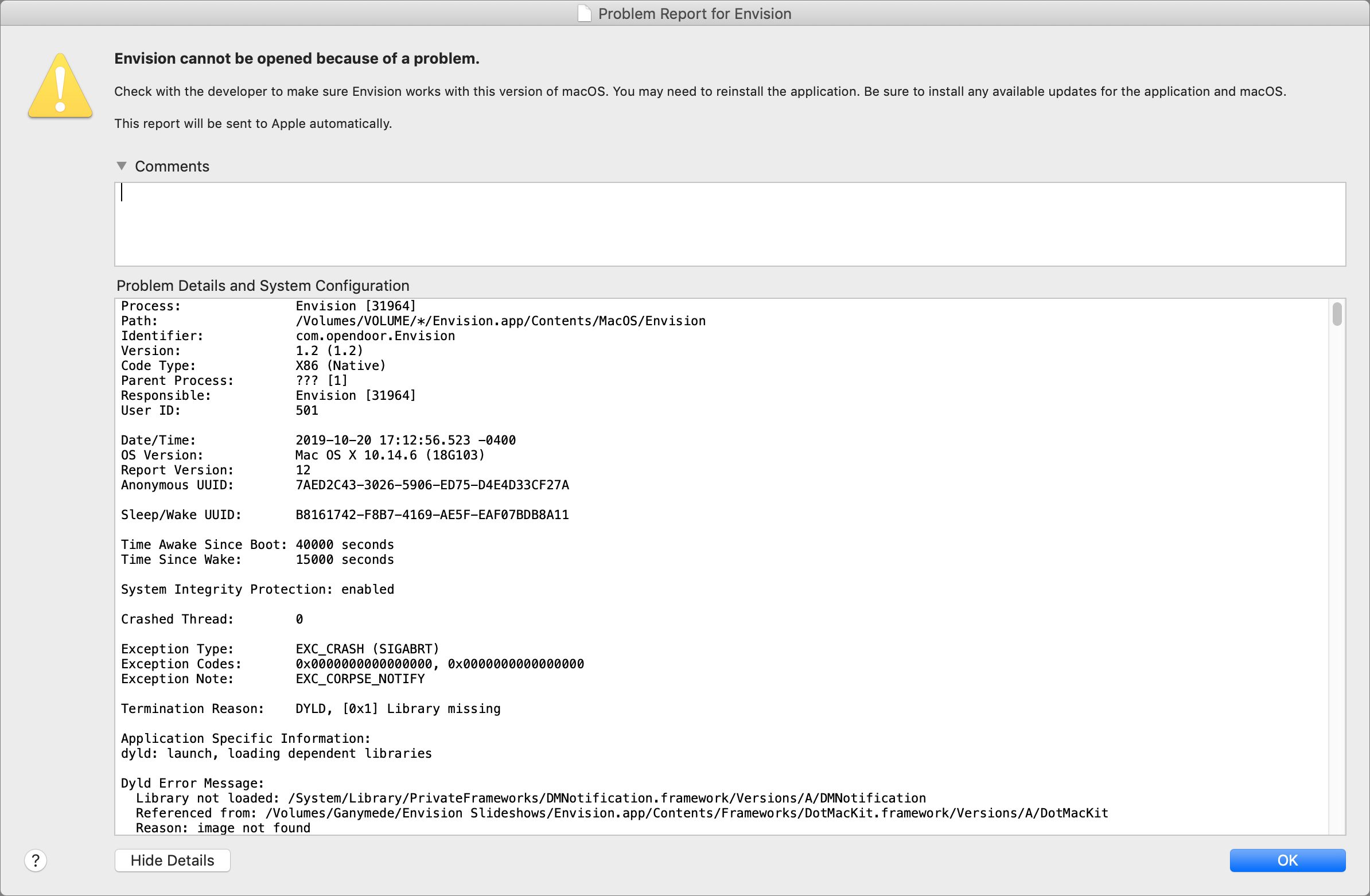

Crash Reports Don’t Identify Non-Crashing Bugs

If you have reporting turned on (which I recommend), Apple’s built-in crash reporter automatically reports application crashes, and even kernel crashes, back to the company. A crash report includes a lot of data. Especially useful is the stack trace, which shows exactly where the code crashed, and more importantly, how it got to that point. A stack trace often enables an engineer to track down the crash and fix it.

Crash reports are uniquely identified by the stack trace. The same stack trace on multiple crash reports means all those users are seeing the same crash. The crash reporter backend sorts crash reports by matching the stack traces, and those that occur most often get the highest priority. Apple takes crash reports seriously and tries hard to fix them. As a result, Apple software crashes a lot less than it used to.
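To make the bucketing idea concrete, here is a minimal Python sketch of grouping crash reports by stack trace and ranking the groups by how often they occur. The report format, the frame names, and the `bucket_crashes` function are all hypothetical, made up for illustration; this is not a description of Apple's actual crash reporter backend.

```python
from collections import Counter


def bucket_crashes(reports):
    """Group crash reports by stack trace and rank the buckets by frequency.

    Each report is a dict with a "stack" key holding a list of frame names
    (a hypothetical format chosen for this sketch).
    """
    # A tuple of frames is hashable, so it can act as the bucket signature.
    counts = Counter(tuple(report["stack"]) for report in reports)
    # most_common() returns (signature, count) pairs, most frequent first.
    return counts.most_common()


# Made-up sample data: two reports share a stack trace, one does not.
reports = [
    {"device": "iPhone", "stack": ["main", "sync_contacts", "parse_card"]},
    {"device": "iPad", "stack": ["main", "upload_photo", "open_socket"]},
    {"device": "Mac", "stack": ["main", "sync_contacts", "parse_card"]},
]

ranked = bucket_crashes(reports)
for signature, count in ranked:
    print(count, " > ".join(signature))
```

The bucket with the most reports comes first, which mirrors the prioritization described above: the crash seen by the most users gets attention first.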

Unfortunately, the crash reporter can’t catch non-crashing bugs. It’s blind to the photos that never upload to iCloud, the contact card that just won’t sync from my Mac to my iPhone, the Time Capsule backups that get corrupted and have to be restarted every few months, and the setup app on my new iPhone 11 that got caught in a loop repeatedly asking me to sign in to my iCloud account, until I had to call Apple support. (These are all real problems I’ve experienced.)

Apple tracks non-crashing bugs the old-fashioned way: with human testers (QA engineers), automated tests, and reports from third-party developers and Apple support. Needless to say, this approach is as much an art as it is a science, and it’s much harder both to identify non-crashing bugs (particularly from reports from Apple support) and for the engineers to track them down.

Less-Important Bugs Are Triaged

During development, Apple triages bugs based on the phase of the development cycle and the bug severity. Before alpha, engineers can fix pretty much any bug they want to. But as development moves into alpha, and then beta, only serious bugs that block major features are fixed, and as the ship date nears, only bugs that cause data loss or crashes get fixed.

This approach is sensible. As an engineer, every time you change the code, there’s a chance you’ll introduce a new bug. Changes also trigger a whole new round of testing. When you’re close to shipping, a known bug with understood impact is better than adding a fix that might break something new that you’d be unaware of.
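The phase-based triage rule described above can be sketched in a few lines of Python. The phase names, the three severity levels, and the `should_fix` function are simplifications invented for this example; real triage involves human judgment that a lookup table cannot capture.

```python
from enum import IntEnum


class Severity(IntEnum):
    COSMETIC = 1            # causes confusion, but nothing is lost
    BLOCKS_FEATURE = 2      # a major feature cannot be used
    DATA_LOSS_OR_CRASH = 3  # data is lost or the software crashes


# Minimum severity that still gets fixed in each phase (illustrative only).
FIX_THRESHOLD = {
    "pre-alpha": Severity.COSMETIC,
    "alpha": Severity.BLOCKS_FEATURE,
    "beta": Severity.BLOCKS_FEATURE,
    "near-ship": Severity.DATA_LOSS_OR_CRASH,
}


def should_fix(phase, severity):
    """Return True if a bug of this severity is fixed in this phase."""
    return severity >= FIX_THRESHOLD[phase]


for phase in FIX_THRESHOLD:
    fixed = [s.name for s in Severity if should_fix(phase, s)]
    print(phase, "->", ", ".join(fixed))
```

The bar only ever rises as the ship date approaches, so a minor bug that misses its window before alpha has no later phase in which it qualifies.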

Bugs that generate a lot of Apple Store visits or support calls usually get fixed. After all, it costs serious money to pay enough support reps to help lots of users. It’s much cheaper to fix the bug. When I worked on Apple products, we’d get a list of the top bugs driving Apple Store visits and support calls, and we were expected to fix them.

Unfortunately, bugs that are rare or not terribly serious—those that cause mere confusion instead of data loss—are continually pushed to the back burner by the triage system.

Regressions Get Fixed. Old Bugs Get Ignored.

Apple is lousy at fixing old bugs.

Apple pays special attention to new products like the iPhone 11, looking for serious customer problems. It jumps on them quickly and generally does a good job of eradicating major issues. But any bugs that are minor or unusual enough to survive this early scrutiny may persist forever.

Remember what I said about changes causing new bugs? If an engineer accidentally breaks a working feature, that’s called a regression. They’re expected to fix it.

But if you file a bug report, and the QA engineer determines that bug also exists in previous releases of the software, it’s marked “not a regression.” By definition, it’s not a new bug, it’s an old bug. Chances are, no one will ever be assigned to fix it.

Not all groups at Apple work this way, but many do. It drove me crazy. One group I knew at Apple even made “Not a Regression” T-shirts. If a bug isn’t a regression, they don’t have to fix it. That’s why the iCloud photo upload bug and the contact syncing bug I mentioned above may never be fixed.

Automated Tests Are Used Sparingly

The software industry goes through fads, just like the fashion industry. Automated testing is currently fashionable. There are various types of automated testing: test-driven design, unit tests, user-driven testing, etc. No need to go into the details here, except to say that, apart from a few specific areas, Apple doesn’t do a lot of automated testing. Apple is highly reliant on manual testing, probably too much so.

The most significant area of automated testing is battery performance. Every day’s operating system build is loaded onto devices (iPhones, iPads, Apple Watches, etc.) that run through a set of automated tests to ensure that battery performance hasn’t degraded. (Of course, these automated tests look only at Apple code, so real-world interactions can—and often do—result in significant battery performance issues that have to be tracked down and fixed manually.)

Beyond batteries, a few groups inside Apple are known for their use of automated tests. Safari is probably the most famous. Every code check-in triggers a performance test. If the check-in slows Safari performance, it’s rejected. More automated testing would probably help Apple’s software quality.
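A check-in gate of the kind described for Safari might look roughly like the following Python sketch. Everything here is an assumption made for illustration: the `accept_check_in` function, the use of the median over several timing runs, and the 2% noise tolerance. None of it reflects how Apple's actual performance tests are built.

```python
from statistics import median


def accept_check_in(baseline_runs_ms, candidate_runs_ms, tolerance=0.02):
    """Accept a check-in only if it does not slow the benchmark down.

    Both arguments are lists of timings in milliseconds. The median damps
    outliers, and tolerance is a fractional allowance for measurement noise
    (both are choices made for this sketch).
    """
    baseline = median(baseline_runs_ms)
    candidate = median(candidate_runs_ms)
    return candidate <= baseline * (1 + tolerance)


# Made-up timings: the first candidate matches the baseline, the second is slower.
print(accept_check_in([100, 102, 98], [101, 100, 99]))
print(accept_check_in([100, 102, 98], [110, 111, 109]))
```

The appeal of a gate like this is that a slowdown is caught at the moment it is introduced, when the responsible change is obvious, rather than weeks later.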

Complexity Has Ballooned

Another complication for Apple is the continually growing complexity of its ecosystem. Years ago, Apple sold only Macs. Processors had only one core. A program with 100,000 lines of code was large, and most were single-threaded.

A modern Apple operating system has tens of millions of lines of code. Your Mac, iPhone, iPad, Apple Watch, AirPods, and HomePod all talk to each other and talk to iCloud. All apps are multi-threaded and communicate with one another over the (imperfect) Internet.

Today’s Apple products are vastly more complex than in the past, which makes development and testing harder. The test matrix doesn’t just have more rows (for features and OS versions), it also has more dimensions (for compatible products it has to test against). Worse, asynchronous events like multiple threads running on multiple cores, push notifications, and network latency mean it’s practically impossible to create a comprehensive test suite.
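A little arithmetic shows why extra dimensions hurt more than extra rows. The Python sketch below counts the configurations in a test matrix as the product of the number of values along each dimension. The dimension names and values are invented for the example and are far smaller than anything a real test plan would contain.

```python
from math import prod


def count_configurations(dimensions):
    """Return the number of distinct combinations across all dimensions."""
    return prod(len(values) for values in dimensions.values())


# Hypothetical matrix with two dimensions: features and OS versions.
dimensions = {
    "feature": ["photo sync", "contact sync", "Handoff"],
    "os_version": ["13.0", "13.1"],
}
two_dimensions = count_configurations(dimensions)

# Adding a dimension multiplies the total instead of adding to it.
dimensions["paired_device"] = ["none", "Apple Watch", "AirPods", "HomePod"]
three_dimensions = count_configurations(dimensions)

dimensions["network"] = ["Wi-Fi", "cellular", "offline"]
four_dimensions = count_configurations(dimensions)

print(two_dimensions, three_dimensions, four_dimensions)
```

Even in this toy case, two added dimensions take the matrix from 6 configurations to 72, and that is before accounting for timing-dependent behavior, which cannot be enumerated at all.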

Looking Forward

In an unprecedented move, Apple announced iOS 13.1 before iOS 13.0 shipped, a rare admission of how serious the software quality problem is. Apple has immense resources, and the company’s engineers will tame this year’s problem.

In the short term, you can expect more bug fix updates on a more frequent schedule than in past years. Longer-term, I’m sure that the higher-ups at Apple are fully aware of the problem and are pondering how best to address it. Besides the fact that bugs are expensive, both in support costs and engineer time, they’re starting to become a public relations concern. Apple charges premium prices for premium products, and lapses in software quality stand to hurt the company’s reputation.

David Shayer was an Apple software engineer for 18 years. He worked on the iPod, the Apple Watch, and Apple’s bug-tracking system Radar, among other projects.

USB Storage with iOS 13: The FAQ

Of all the email I’ve received about iOS 13 from readers of Take Control of iOS 13 and iPadOS 13, questions about using external USB drives with the Files app have been the most frequent. Here are answers to common questions I’ve received, and to other questions I expect many users to have.

Can I use external USB drives with an iPhone, or does the feature work only on the iPad?

Although Apple has marketed this feature primarily in relation to the iPad—specifically the iPad Pro—it works just the same in iOS 13 on an iPhone as it does in iPadOS.

What types of storage devices can iOS 13 read?

iOS 13 can read any standard USB storage device as long as it has been formatted with a compatible file system and has sufficient power provided (see the next two points). In short, most storage devices should work.

Niles Mitchell made a series of YouTube videos in which he connects obscure storage devices—including an Iomega Zip disk!—to an iPhone running iOS 13.

How do I connect a USB storage device to my iPhone or iPad?

It depends. Most iOS 13-compatible devices have a Lightning port, while 2018 iPad Pro models have a USB-C port:

- Lightning options: If your device has a Lightning port, you’ll need a Lightning-to-USB adapter. I strongly recommend Apple’s Lightning to USB 3 Camera Adapter because it supports USB 3 and offers a Lightning passthrough port for power, which will be necessary for some devices (see “Buy the Best Lightning to USB Adapter for iOS 13,” 12 August 2019). If you have the older USB 2 adapter without power passthrough, you can use a powered USB hub to power your storage devices. You can also buy Lightning-based thumb drives that eliminate the need for the adapter and passthrough power.

- USB-C options: Your best bet for USB-C-equipped iPads is either a USB-C–based thumb drive or one of the multitude of USB-C hubs that offer a USB-A port.

What file systems does iOS support?

As far as I can find, Apple doesn’t document what file systems iOS 13 can use: not in the support documents, nor in the most recent iPhone and iPad user guides. So I took matters into my own hands, repeatedly erasing and reformatting a thumb drive and plugging it into my iPhone to see if it would work. Long story short: iOS can read all non-encrypted file systems supported by the Mac’s Disk Utility.

How should you format a storage drive for use with iOS? Here are my recommendations:

- MS-DOS (FAT): FAT is the most compatible file system if you need to share your storage drive between iOS, macOS, Windows, and Linux. However, it comes with some irritating limitations: individual files must be smaller than 4 GB, and volume names are limited to 11 characters.

- exFAT: exFAT is a newer form of FAT and has fewer limitations. It’s a good choice for portability between iOS, macOS, and Windows. Linux can also use exFAT, though you’ll have to install some system extensions. (Microsoft has promised exFAT support in the Linux kernel but has provided no firm commitment to when that will happen.)

- Mac OS Extended (Journaled): The classic Mac file system, also known as HFS+, works fine if you plan to share a drive only between iOS and macOS.

- APFS: There isn’t much point to formatting a drive as APFS unless you’re planning to boot from it or want to play with containers and volumes.

How do I access my USB storage from Files?

On the iPad in landscape orientation, the drive appears in the sidebar automatically.

On an iPhone or an iPad in portrait orientation, tap the Browse icon on the bottom of the screen to jump to the Browse screen, which lists all of your locations.

How do I copy files to and from USB storage?

The easiest method is to tap and hold a file until the contextual menu appears and choose Copy. Then navigate to the destination, tap and hold a blank spot in the directory, and choose Paste from the contextual menu. To move a file, choose Move instead of Copy and choose a destination from the browser.

On the iPad, you can use drag-and-drop to copy the file where it needs to go. The easiest way is to split the Files window, pull up the location in the split, and then drag the file from the original window (see “Here’s What Sets iPadOS Apart from iOS,” 25 September 2019). You might find this handier than the above method if you have a lot of files to copy.

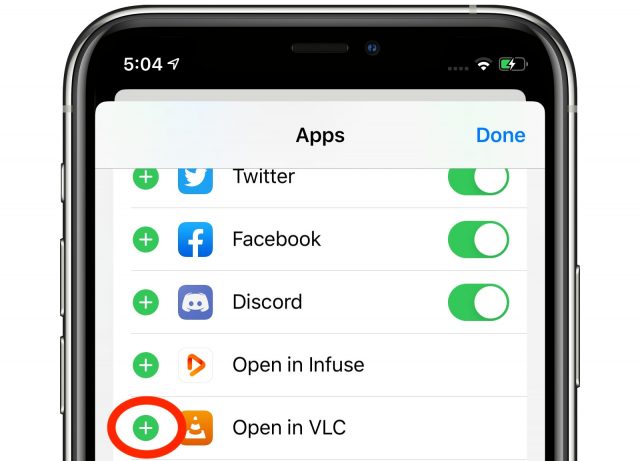

Can I play media from USB external storage?

Yes, you can, which is an effective way to store movies without taking up valuable on-device space. I tested media playback with the open-source VLC, but other apps might work too. Tap and hold a media file until the contextual menu appears, tap Share, and then tap VLC or your desired app. VLC appears in the second row of the activity view—you may have to swipe left and tap More to reveal it.

If you plan to do this regularly, you can pin VLC to the Files activity view. On the rightmost screen pictured above, you can tap Edit in the upper-right corner and then tap the plus button to the left of Open in VLC.

When you tap the VLC icon in the Files activity view, be patient since it may take a few seconds before the video or other media file starts playing. I found that sometimes it didn’t play on the first try, requiring a second pass at opening the file in VLC.

Do I have to eject a drive before removing it, like on the Mac?

No, and in fact, that’s not even an option. Just use common sense and don’t pull a drive when it’s reading data or having data written to it.

Can I ask another question?

Absolutely. Post it in the comments and I’ll do my best to answer.

Catalina’s Sidecar Turns an iPad into a Second Mac Monitor

One marquee feature in macOS 10.15 Catalina is Sidecar, which enables the use of an iPad as a second Mac monitor. That is handy for extending the Mac desktop to gain added workspace, or for mirroring a Mac’s desktop on a second screen to share information with others more conveniently.

Turning an iPad into a secondary display is not a new idea. Third-party software, such as Duet Display and Air Display, has turned iPads into Mac monitors for years. AstroHQ even came up with a hardware approach, in the form of its $69.99 Luna Display dongles for Mac, to provide better performance and reliability (see “Luna Display Turns an iPad into a Responsive Mac Screen,” 7 December 2018).

AstroHQ recently lamented that it had been “Sherlocked” by Apple and Sidecar, but insisted it was still in the game. Indeed, the Luna can do things Sidecar cannot. Most recently, AstroHQ announced a Mac-to-Mac mode for Luna Display that can turn one Mac into a second display for another. That’s something TidBITS has been interested in for years—see “Build Your Own 23-inch MacBook” (5 February 2007) and “Tools We Use: Teleport” (27 August 2007)—and we hope to evaluate Luna Display’s Mac-to-Mac mode soon.

Apple’s Sidecar feature, though, is free (assuming users already have all the hardware pieces). The fact that it’s built into macOS means it may relegate some third-party competitors to niche status (or the pursuit of other markets, such as Windows and Android).

With Sidecar, Apple mostly delivers on its promise to readily transform an iPad into a Mac screen with little fuss and a grab bag of unique capabilities. Be warned, though, that Sidecar is still a bit rough around the edges, so expect to see glitches.

Attaching the Sidecar

Sidecar isn’t for everyone, thanks to stringent hardware requirements. You need a Mac with a Skylake processor or newer running Catalina—this leaves out plenty of older Catalina-ready Macs—along with an Apple Pencil-compatible iPad, which also must be running iPadOS 13.

Sidecar works over a wired or wireless connection. Tethering the tablet to the Mac via a USB-C or Lightning cable provides the best performance and reliability. The wireless option isn’t bad, though—when it works at all.

For a wireless connection, your Mac and iPad must be using the same iCloud account. Both devices must have Bluetooth, Wi-Fi, and Handoff (in Settings > General > Handoff) enabled. In wireless mode, Sidecar uses Bluetooth for initial iPad detection and a point-to-point hookup for data transfers once connected, with an effective range of about 10 feet (3 meters).

If the wireless mode doesn’t work for you, try a physical cable. I resorted to this approach on a number of occasions when the wireless option inexplicably failed me. The USB-C or Lightning cable must connect directly to a Mac, not indirectly via a hub.

Regardless of the connection method, to begin using Sidecar, click the Display icon in the menu bar, and choose your iPad from the menu. The Mac’s screen will blink momentarily, and then the iPad’s screen will be replaced by an extension of the Mac desktop, just as you’d expect.

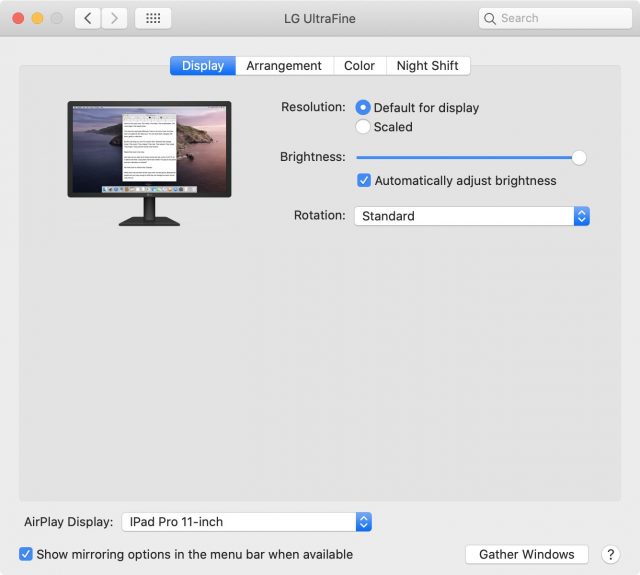

If that doesn’t work, open System Preferences > Displays, and make sure that your iPad is the designated AirPlay display via the pop-up menu at the bottom.

Once everything is set up properly, you can use the iPad with your Mac just as you would any dual-display setup. For instance, in System Preferences > Displays, you can turn screen mirroring on and off or adjust how your Mac and iPad displays are arranged in relation to each other.

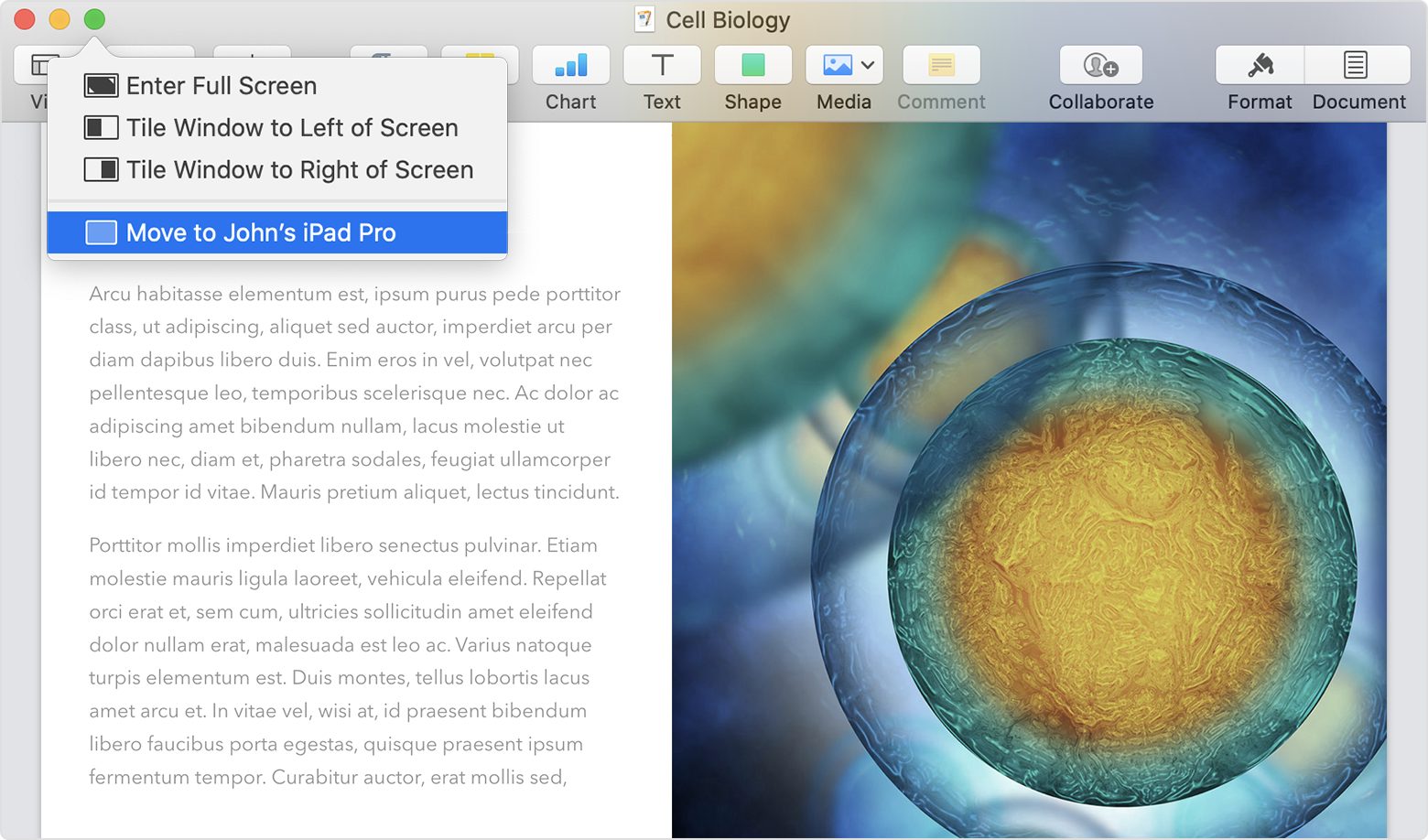

Now you can decide which apps appear on the iPad screen. This can be as simple as dragging windows from one display to the other. Better yet, click the Window menu available in many apps, and you’ll see an option to move windows with one click: Move to iPad or Move Window Back to Mac, depending on which device contains the window. Some apps lack a Window menu, but similar window-moving options appear when you hover the Mac’s pointer over the window’s green zoom button.

Special Sidecar Features

Sidecar offers several unique features that make it interesting, if a bit quirky. For instance, Sidecar’s interface on the iPad displays two optional control strips, one running horizontally and the other vertically, that respond to finger control.

The horizontal strip, called the Touch Bar, should be instantly familiar to anyone who has used the physical Touch Bar on a MacBook Pro, and it looks and works much the same. Different control options appear depending on which app you are using, just as on the MacBook Pro. The physical Touch Bar has received lukewarm reviews, and I’m not sure the Sidecar equivalent will be any more popular, but some people might like it.

The vertical strip, called the Sidebar, does a number of things:

- The top two buttons show or hide the Mac menu bar on the iPad screen when a Mac app is in full-screen mode and shift the Dock between the Mac and iPad screens.

- In the middle of the strip is a quartet of modifier keys: Command, Option, Control, and Shift. These are mostly for specialized users, like artists who need to engage a modifier key with one hand while using an Apple Pencil with the other. Apple provides an example:

While sculpting a model in ZBrush, an artist can use the modifier keys in the sidebar to zoom, rotate, and pan around their model as they draw with Apple Pencil. Double-tapping a modifier key will keep it active, allowing more prolonged work without the need to hold it down. An additional tap will deactivate the key.

- At the bottom of the Sidebar are an Undo button, a Keyboard button to show and hide a small floating keyboard (which appears to be the stock iPadOS 13 virtual mini-keyboard), and a Disconnect button to end a Sidecar session.

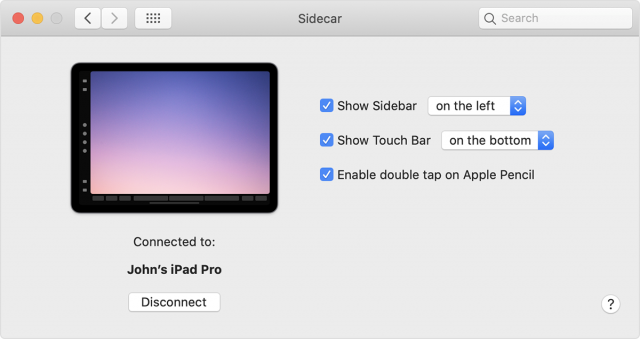

You can configure these control strips in System Preferences > Sidecar. It lets you toggle the Sidebar and Touch Bar on or off. It also enables you to move the Sidebar to the left or right of the screen, and put the Touch Bar on the top or bottom. The pane also lets you initiate or end a Sidecar session, as an alternative to using the Display menu in the menu bar or the Disconnect button on the Sidebar.

Honestly, I never found the Sidebar or Touch Bar particularly useful, and mostly kept them hidden to maximize the usable screen real estate on the iPad.

Using the Apple Pencil

Sidebar and Touch Bar aside, you largely can’t use the iPad via finger input during a Sidecar session, which is confusing since the iPad is fundamentally a touch-driven device. For the most part, you’ll use the mouse cursor on the iPad screen, like any other display.

There are a few ways of interacting with Mac apps on Sidecar using your fingers. Use vertical two-finger swipes to scroll through long Web pages and documents. When you are working in text documents, you can use iPadOS 13’s three-finger actions to copy (pinch), cut (pinch twice), paste (pinch out), undo (swipe left or double-tap), and redo (swipe right), much as you would using native iPad apps.

Sadly, inconsistent fingers-on-iPad responsiveness kept me from relying on these actions in Sidecar as routine alternatives to Mac mouse and keyboard input.

If you have an Apple Pencil, you can also use it to tap Mac interface elements on the iPad screen and to control pointer and cursor positioning. Swiping with the Apple Pencil selects text.

As with finger input, I found Apple Pencil support to be unreliable. Sometimes it worked, but it often failed, for reasons I couldn’t discern. I gave up and relied on my Mac input devices most of the time.

Sidecar also supports several everyday tasks via Continuity features, which work independently from Sidecar. One of these, Continuity Sketch, lets you sketch with an Apple Pencil on your iPad and insert the sketch into a Mac document. Similarly, Continuity Markup lets you sign Mac documents, correct papers, or circle details in images using an Apple Pencil on the iPad.

More sophisticated Apple Pencil actions are possible when using Sidecar with a variety of third-party apps, including productivity apps such as BusyCal, Evernote, and PDFpen, as well as apps for drawing, design, and photo or video editing, such as Adobe Illustrator, Affinity Photo, Final Cut Pro, and Pixelmator Pro. Note the ZBrush example above.

A double-tap on the side of the Apple Pencil 2 engages particular features in some apps. If this is the case with apps you are using, enable double-tap functionality via the Sidecar pane in System Preferences. Otherwise, keep it deactivated.

Other Features

Here are a few other Sidecar features and quirks to keep in mind:

- Sidecar and iPadOS: Sidecar doesn’t prevent you from using iPadOS when Sidecar is engaged. Swipe up from the bottom of the iPad screen, and the Home screen reappears, with a Sidecar icon on the far right of the iPad’s Dock. Tap the icon to re-engage Sidecar. You can even have iPadOS Slide Over windows floating atop Sidecar—just swipe left from the right side of the iPad screen. (Apple claims you can position Sidecar and an iPad app next to each other via Split View, but I couldn’t get this to work.)

- Sidecar with other external displays: Sidecar supports only one iPad at a time. However, it has no problem working in tandem with a conventional secondary monitor that is also hooked up to the Mac, for a total of three active screens operating as a single, extended display.

- Limited display settings: You can’t change display scaling and color profiles on the iPad. My 11-inch iPad Pro’s screen is stuck at a pixel-doubled resolution of 1116-by-756 on the Mac (which doesn’t look bad), compared to the iPad’s native resolution of 2388-by-1668 pixels.

- Sidecar and external keyboards: Although it’s possible to use Sidecar’s cramped on-screen keyboard, it’s not suitable for extended interaction, and the full system keyboard is not available. You can use an external keyboard, such as Apple’s Smart Keyboard for the iPad, perhaps when moving away from the Mac and using your iPad and Sidecar on the couch. Prepare for wonkiness, though. I’ve already noted issues with finger and Apple Pencil screen interaction that would cripple such work sessions even with an attached keyboard. Several shortcuts also misbehave on a physical keyboard: Command+Q does not work, Command+Tab brings up the iPad app switcher rather than the Mac’s, and Command+Space opens iPadOS search instead of Spotlight on the Mac, which would make the most sense during a Sidecar session.

Sidecar Upshot

Sidecar does not yet match third-party alternatives feature for feature, as Luna Display maker AstroHQ takes pains to note. But the fact that Sidecar is free and built into the latest version of macOS and iPadOS means it automatically has a broad audience.

Apple has aspired to make Sidecar dead simple to use, which adds to its appeal. It largely succeeds in its goal of transforming an iPad into a Mac screen.

But, as I discovered, the technology is still glitchy and unreliable. Feel free to give it a try, but temper your expectations for now.